1 Introduction

Although the spread of misinformation is not new, it has increased significantly with the advent of the internet [Wang, McKee, Torbica & Stuckler, 2019; Wang & Liu, 2022]. The growing amount and speed of dissemination of misinformation have concerned researchers from multiple fields of study [Cook, 2022; van der Linden, Leiserowitz, Rosenthal & Maibach, 2017; Wang et al., 2019]. Health is one of the areas most affected by misinformation, mainly because misinformation has fueled anti-vaccine movements that have contributed to reduced vaccination coverage [Wang et al., 2019; Wang & Liu, 2022] and to recent disease outbreaks in several countries [Datta et al., 2018; Wang et al., 2019; Wang & Liu, 2022]. The environmental sciences are another area deeply affected by misinformation [Cook, 2022; van der Linden et al., 2017; Yang & Wu, 2021], and they overlap thematically with health, for example, in the health impacts of pollution [Yang & Wu, 2021] and/or climate change [Paavola, 2017]. Although there is consensus within the scientific community that humans are responsible for global warming, there is no such consensus among the general population [Cook, 2022; van der Linden et al., 2017]. Many people do not believe that humans are responsible for global warming and share misinformation that supports this belief, even without any scientific basis [van der Linden et al., 2017]. This is a major problem, since combating global warming requires changes in the behavior of the world’s population, which will not happen if people believe that their behavior does not influence climate change or pollution levels [Cook, 2022; van der Linden et al., 2017].

2 Misinformation, disinformation, and fake news definitions

In recent years, the fight against misinformation, disinformation, and fake news has been one of the great challenges faced by science, especially in the health and environmental fields [Ecker et al., 2022; Whitehead, French, Caldwell, Letley & Mounier-Jack, 2023]. Although these concepts are closely related, several academic efforts have sought to differentiate them. According to the American Psychological Association [APA], misinformation can be defined as “false, inaccurate, or misleading information that is communicated regardless of an intent to deceive” [American Psychological Association, 2022]. Other authors corroborate this definition and add that disinformation involves deliberately spreading false information to cause harm [Ecker et al., 2022; Kapantai, Christopoulou, Berberidis & Peristeras, 2021; Whitehead et al., 2023]. Furthermore, scholarly research has defined fake news as a form of falsehood intended primarily to deceive people by mimicking the look and feel of real news, often emphasizing the importance of facticity [Tandoc, 2019; Pennycook, McPhetres, Zhang, Lu & Rand, 2020]. Although the term fake news is not new, academic research has also used it to refer to a range of content, including political satire, news parodies, state propaganda, and false advertising [Tandoc, Lim & Ling, 2018]. Fake news differs from other forms of misinformation because it mimics the traditional news format [Tandoc, 2019].

Throughout this review, we use the term ‘misinformation’ to refer to false information, myths/beliefs, and misperceptions, as the focus of this review is on interventions rather than assessing the source and intent of false information. This approach has been used by previous authors to synthesize and organize the presentation of the results of systematic reviews in the field of misinformation [Wang et al., 2019; Whitehead et al., 2023].

3 Health and environment related misinformation

Misinformation on scientific topics, especially controversial environmental topics [e.g., global warming and climate change — Ecker et al., 2022; Lubchenco, 2017; Kolmes, 2011] and health topics [e.g., vaccination — Gostin, 2014; Whitehead et al., 2023], is considered a major global concern. Given the urgency of understanding this phenomenon, studies analyzing misinformation have examined its language patterns [Nsoesie & Oladeji, 2020], typologies [Kapantai et al., 2021], means of circulation and dissemination in digital environments [Almaliki, 2019; Malik, Bashir & Mahmood, 2023; Wang et al., 2019], the psychological aspects of the belief systems of people who trust misinformation [Tsamakis et al., 2022; Balakrishnan, 2022; Wang & Liu, 2022], and how the public, health professionals, and scientists perceive the topic [Lei et al., 2015; Cioe et al., 2020].

Furthermore, some of the literature focuses on only one or two methods of combating misinformation [Blank & Launay, 2014; Chan, Jones, Hall Jamieson & Albarracín, 2017; Walter & Tukachinsky, 2020; Lazić & Žeželj, 2021] or addresses a specific context (e.g., COVID-19, autism, vaccines). For example, in the context of vaccine hesitancy, a systematic review found that certain communication strategies (e.g., scare tactics) are ineffective and may even increase endorsement of misinformation [Whitehead et al., 2023]. Other authors reviewed studies on institutional health communication and concluded that there is no one-size-fits-all strategy for web-based healthcare communication [Ceretti et al., 2022]. Another systematic review, focused on mitigating COVID-19 misinformation, found that the evaluated interventions had a positive mean effect size, but this effect was not statistically significant. That review also found that interventions were more effective when participants were engaged with the topic and when text-only mitigation was used [Janmohamed et al., 2022].

Other systematic reviews have focused on tackling misinformation in general, not limited to health and environmental issues. For example, Walter and Murphy [2018] conducted a systematic review of misinformation and found that corrective messages have a moderate effect on belief in misinformation. However, the study found that misinformation is more difficult to correct in political and marketing contexts than in health. Rebuttals and appeals to coherence, which are based on narrative correction, were found to be more effective than corrections based on forewarnings, fact-checking, and appeals to credibility. Similar results were reported by another general systematic review of misinformation, which found that corrective messages are more effective when they are coherent and consistent with the audience’s worldview and when they are delivered by the source of the misinformation itself. However, corrections are less successful if the misinformation was attributed to a credible source, was repeated multiple times before correction, or if there was a delay between the delivery of the misinformation and the correction [Walter & Tukachinsky, 2020].

This state of the art shows that few systematic reviews have mapped the most used interventions against misinformation related to health and the environment. In fact, the few existing reviews of interventions address health issues [Janmohamed et al., 2021; Steffens et al., 2021; Whitehead et al., 2023]. To the best of our knowledge, no systematic review has investigated which environment-related interventions are most used and whether they are effective. This is concerning, as the prevalence of misinformation on climate issues and its negative impacts have been extensively documented by previous studies [Cook, 2022; van der Linden et al., 2017].

Furthermore, previous reviews and meta-analyses have focused narrowly on specific interventions (e.g., inoculation) for specific issues [e.g., COVID-19, vaccines — Janmohamed et al., 2021; Whitehead et al., 2023]. To the best of our knowledge, no review has encompassed multiple types of interventions and health topics. Previous findings indicate that the same type of intervention may be effective for one topic (e.g., yellow fever misinformation) but not for a similar one [e.g., Zika virus misinformation — Carey, Chi, Flynn, Nyhan & Zeitzoff, 2020]. Therefore, our review applied no topic- or intervention-specific restrictions, so that we could examine whether the same type of intervention is effective across different themes.

4 Strategies for confronting health and environment related misinformation

Recently, there has been rising interest in developing strategies to combat the spread and societal impact of misinformation [Sharma et al., 2019; Thaler & Shiffman, 2015]. In general, there are three distinct intervention strategies: intervention before exposure (prebunking), during exposure (nudging), and after exposure (debunking). Prebunking aims to help individuals recognize and resist misinformation they may encounter in the future. Debunking involves responding to specific misinformation after it has been encountered to demonstrate why it is false [Bruns, Dessart & Pantazi, 2022; Ecker et al., 2022]. Nudges aim to change people’s behavior without changing their incentives or restricting their choices, and they are employed at the moment people encounter misinformation [Bruns et al., 2022].

A clear example of a nudging strategy is the use of deliberation prompts, in which individuals are asked to think about a particular theme and decide whether they believe the information presented. These prompts are normally presented as accuracy tasks (i.e., assessing whether a piece of information or news is true) and flagging [i.e., flagging information or a source as false — Bago, Rand & Pennycook, 2020; Bruns et al., 2022; Tsipursky, Votta & Roose, 2018]. Inoculation, in turn, is one of the most common ways to counter misinformation before exposure (i.e., prebunking). This strategy warns people about the potential existence of misinformation and/or explains the flaws in the techniques used to spread it [Bruns et al., 2022; Ecker et al., 2022].

Media and information literacy actions have also been presented as pre-emptive strategies to address misinformation [Bruns et al., 2022; Balakrishnan, 2022; Ecker et al., 2022; Nascimento et al., 2022]. Media and information literacy may be defined as the ability to effectively find, understand, evaluate, and use information [Ecker et al., 2022]. Although media and information literacy has garnered significant attention, there is little large-scale evidence on the efficacy of such efforts to combat online misinformation. Previous academic research on digital and media literacy is often qualitative or concentrates on specific subgroups or topics. Observational research predominates [Vraga & Tully, 2021; Jones-Jang, Mortensen & Liu, 2021], and randomized controlled trials are infrequent [Guess, Nagler & Tucker, 2019]. Nevertheless, research has also highlighted the limits of media and information literacy, showing that believing or sharing misinformation is not only a matter of lacking education or knowledge but is also related to people’s beliefs [Pennycook et al., 2020]. Research also shows that people may feel they own privileged news and need to share it to increase their social capital within their network of relationships [Grabner-Kräuter & Bitter, 2015].

Debunking commonly uses credible information from reliable sources to refute false information and replace it with correct information [Bruns et al., 2022; Crozier & Strange, 2019; Lewandowsky et al., 2020]. Several studies have sought to understand the role of individuals’ trust in, and the credibility of, epistemic authorities [Carr, Barnidge, Lee & Tsang, 2014; Hardy, Tallapragada, Besley & Yuan, 2019]. For example, Freeman, Caldwell and Scott [2023] argue that healthcare organizations and pediatricians creating health-related resources on social media platforms must consider trust-related factors to ensure that accurate and reliable information reaches adolescents. Similarly, van Dijk and Caraballo-Arias [2021] emphasize the importance of encouraging evidence-based practice in occupational health and safety, which should rely mainly on credible online sources. Pluviano, Watt, Ragazzini and Della Sala [2019] emphasize the relevance of a source’s trustworthiness rather than its level of expertise. Another debunking strategy is exposure and correction, with or without a rhetorical explanation of the misinformation [Bruns et al., 2022; Lewandowsky et al., 2020]. This strategy is commonly used against widely spread misinformation, especially when a fast response is needed [Bruns et al., 2022]. Exposure and correction can be delivered in multiple formats, such as narrative corrections, infographics, or videos [Bruns et al., 2022; Ecker et al., 2022; Lewandowsky et al., 2020; Yousuf et al., 2021].

There are, therefore, multiple forms of intervention to combat misinformation, which makes it imperative to map which strategies are most used and whether they show evidence of efficacy, especially given the global concern surrounding health and environmental topics [Bruns et al., 2022; Carey et al., 2020; Cook, 2022; van der Linden et al., 2017] and the evidence that the same intervention strategy may be effective for one health theme but not for another [Carey et al., 2020]. However, despite the profusion of scientific literature on misinformation, few experimental studies present empirical results capable of providing accurate information on the effectiveness of methods used to address misinformation. Furthermore, to the best of our knowledge, no systematic review has investigated the effectiveness of interventions addressing environmental topics or evaluated interventions used across multiple health themes. The lack of such reviews highlights a gap in current research on misinformation, which this study aims to address. Therefore, this study seeks to identify the most used strategies to confront misinformation related to health and the environment. As a secondary aim, we present effect sizes to show whether the proposed interventions were effective.

5 Method

A systematic review of the scientific literature was conducted following the steps proposed by the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines [Page et al., 2021]. Searches for scientific articles were performed in December 2021 in the Web of Science, Scopus, PsycINFO, ScienceDirect, and IEEE Xplore databases. The search string used was: (“fake news” OR misinformation OR disinformation). All descriptors were consulted and selected using the Medical Subject Headings (MeSH/PubMed) and Thesaurus (PsycINFO) tools. The only filters used in the databases were document type (i.e., articles, journals) and year of publication (i.e., 2010–2021). This review was not registered, and no protocol was prepared, because during 2020–2022 all protocols that did not specifically address COVID-19 were being automatically published in PROSPERO exactly as submitted, without an eligibility check.

The articles retrieved from the databases were imported into the Rayyan online platform, which allows authors to exclude duplicate articles and carry out article selection in blind mode. After selection is complete, the rate of agreement between evaluators can be compared and possible disagreements discussed [Ouzzani, Hammady, Fedorowicz & Elmagarmid, 2016]. After duplicate articles were excluded, two raters (TO and RA) screened the titles and abstracts of the remaining studies. Any disagreements between raters were resolved by a third researcher (NOC). Then, four raters (TO, RA, RQ and NOC) participated in the full-text reading. Any disagreements between raters were resolved by a fifth senior researcher (WLM). The following inclusion and exclusion criteria were applied during the selection process:

6 Eligibility criteria (PICOS)

6.1 Participants

Studies that met the following criterion were included: 1) participants were adults of legal age (≥ 18 years). Studies with 1) a heterogeneous sample in the same intervention group (i.e., adolescents and adults in the same group) and a mean age < 18 years were excluded.

6.2 Interventions and comparisons

Studies that met at least one of the following criteria were included: 1) at least one group received some type of intervention related to the prevention or confrontation/mitigation of misinformation in the areas of health or the environment; 2) the intervention was compared with a control group and/or another type of intervention.

6.3 Outcomes

Studies that measured at least one of the following were included: 1) changes in the levels of influence/belief in misinformation and/or changes in perceptions/opinions regarding a given topic (e.g., pro-vaccine vs. anti-vaccine); 2) assessment of beliefs and perceptions regarding misinformation on at least two time points (e.g., pre-test and post-test).

Note that we define health-related misinformation as any kind of disinformation, fake news, or misleading information related to any health topic (e.g., vaccines, infectious, cardiovascular, and viral diseases, cancer, smoking, nutrition). Similarly, we define environment-related misinformation as any disinformation, fake news, or misleading information related to global warming, climate change, or pollution.

6.4 Study design

The following studies were included: 1) randomized controlled trials (RCTs), single-group pre-test/post-test studies, or any other experimental or quasi-experimental designs; 2) studies providing statistical data on the effectiveness of the intervention (e.g., Cohen’s d, Hedges’ g, odds ratio, eta-squared, Glass’s Δ, relative risk or risk ratio, standardized beta). Articles that 1) reported neither a standardized effect size (e.g., only an unstandardized beta) nor the statistics needed to calculate one (e.g., t values, means and standard deviations) were excluded.
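The review does not state which formulas were used when an effect size had to be computed from reported statistics. As a minimal sketch, assuming two independent groups (for example, an intervention arm and a control arm), the standard conversions from means and standard deviations, or from an independent-samples t value, to Cohen’s d, plus the small-sample correction that yields Hedges’ g, could look like this in Python. The function names and the numbers in the example are hypothetical.

```python
import math


def cohens_d_from_means(m1: float, sd1: float, n1: int,
                        m2: float, sd2: float, n2: int) -> float:
    """Cohen's d for two independent groups, standardized by the pooled SD."""
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    return (m1 - m2) / math.sqrt(pooled_var)


def cohens_d_from_t(t: float, n1: int, n2: int) -> float:
    """Cohen's d recovered from an independent-samples t statistic."""
    return t * math.sqrt(1 / n1 + 1 / n2)


def hedges_g(d: float, n1: int, n2: int) -> float:
    """Hedges' g: Cohen's d multiplied by the small-sample correction J."""
    df = n1 + n2 - 2
    j = 1 - 3 / (4 * df - 1)
    return j * d


# Hypothetical example: misinformation-belief scores in a control group
# (mean 4.0, SD 1.0, n = 100) versus an intervention group (mean 3.5, SD 1.0, n = 100).
d = cohens_d_from_means(4.0, 1.0, 100, 3.5, 1.0, 100)
g = hedges_g(d, 100, 100)
print(f"d = {d:.2f}, g = {g:.3f}")  # d = 0.50, g = 0.498
```

For paired or pre-test/post-test designs these formulas do not apply directly, because the conversion also depends on the correlation between the two measurements.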

All eligibility criteria were applied by two independent researchers during the selection of articles on the Rayyan platform. The final decision to include a manuscript was made after reading the full text to verify that the pre-established inclusion criteria were fulfilled. Any disagreement between the two independent investigators was resolved by a third senior investigator.

7 Data extraction

After the articles were selected, the following data were extracted from them: 1) article identification (authors’ last names, design, year of publication, country); 2) sample size and mean age; 3) sample distribution by sex; 4) study population; 5) education level; 6) type (i.e., debunking, prebunking or nudging) and duration of the interventions used; 7) risk of bias (control for differences in prior belief scores); and 8) main outcomes related to belief in health or environment misinformation or trust/belief in true facts/information. A single effect size was extracted per group/comparison condition. When studies reported several relevant outcomes (e.g., belief that vaccines cause autism and belief in propolis as protection against the Zika virus), the outcome with the largest effect size was always extracted. Data were extracted by four reviewers (NOC, EZS, RA and RQ).

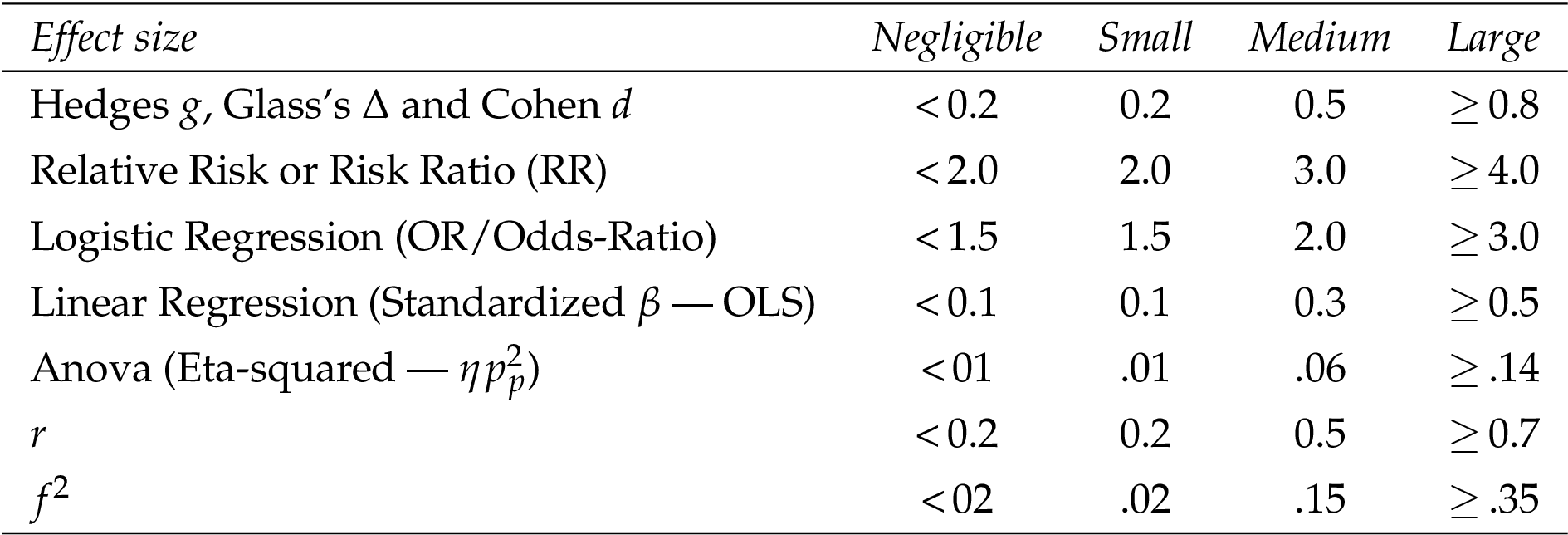

Data were analyzed descriptively to produce a synthesis of the evidence found. Continuous variables are presented in tables as means and standard deviations, and categorical variables as frequencies or percentages. For each included study, the type of effect size used to report the results was identified (e.g., Cohen’s d, Hedges’ g, odds ratio), and each effect size was then classified as negligible (N), small (S), medium (M), or large (L). Table 1 shows the values used to interpret each type of effect size. These values were based on the recommendations of Sullivan and Feinn [2012] and Cohen [2007].
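Table 1 holds the cut-offs actually used in this review. Purely as an illustration, the sketch below assumes Cohen’s conventional benchmarks (0.2, 0.5, and 0.8 for d and g; 0.01, 0.06, and 0.14 for eta-squared) and labels any value below the small cut-off as negligible. The `THRESHOLDS` table and the `classify_effect` function are hypothetical, and other effect size types, such as odds ratios, would need their own entries taken from Table 1.

```python
# Assumed (small, medium, large) cut-offs based on Cohen's conventions;
# Table 1 of the review is the authoritative source.
THRESHOLDS = {
    "cohens_d": (0.2, 0.5, 0.8),
    "hedges_g": (0.2, 0.5, 0.8),
    "eta_squared": (0.01, 0.06, 0.14),
}


def classify_effect(value: float, kind: str) -> str:
    """Return N, S, M, or L for an effect size of the given kind."""
    small, medium, large = THRESHOLDS[kind]
    size = abs(value)  # magnitude only; direction is reported separately
    if size < small:
        return "N"
    if size < medium:
        return "S"
    if size < large:
        return "M"
    return "L"


print(classify_effect(0.35, "cohens_d"))     # S
print(classify_effect(-0.62, "hedges_g"))    # M
print(classify_effect(0.15, "eta_squared"))  # L
```

Taking the absolute value means that a reduction and an increase in belief of the same size receive the same label; the sign is kept separately when reporting the direction of the effect.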

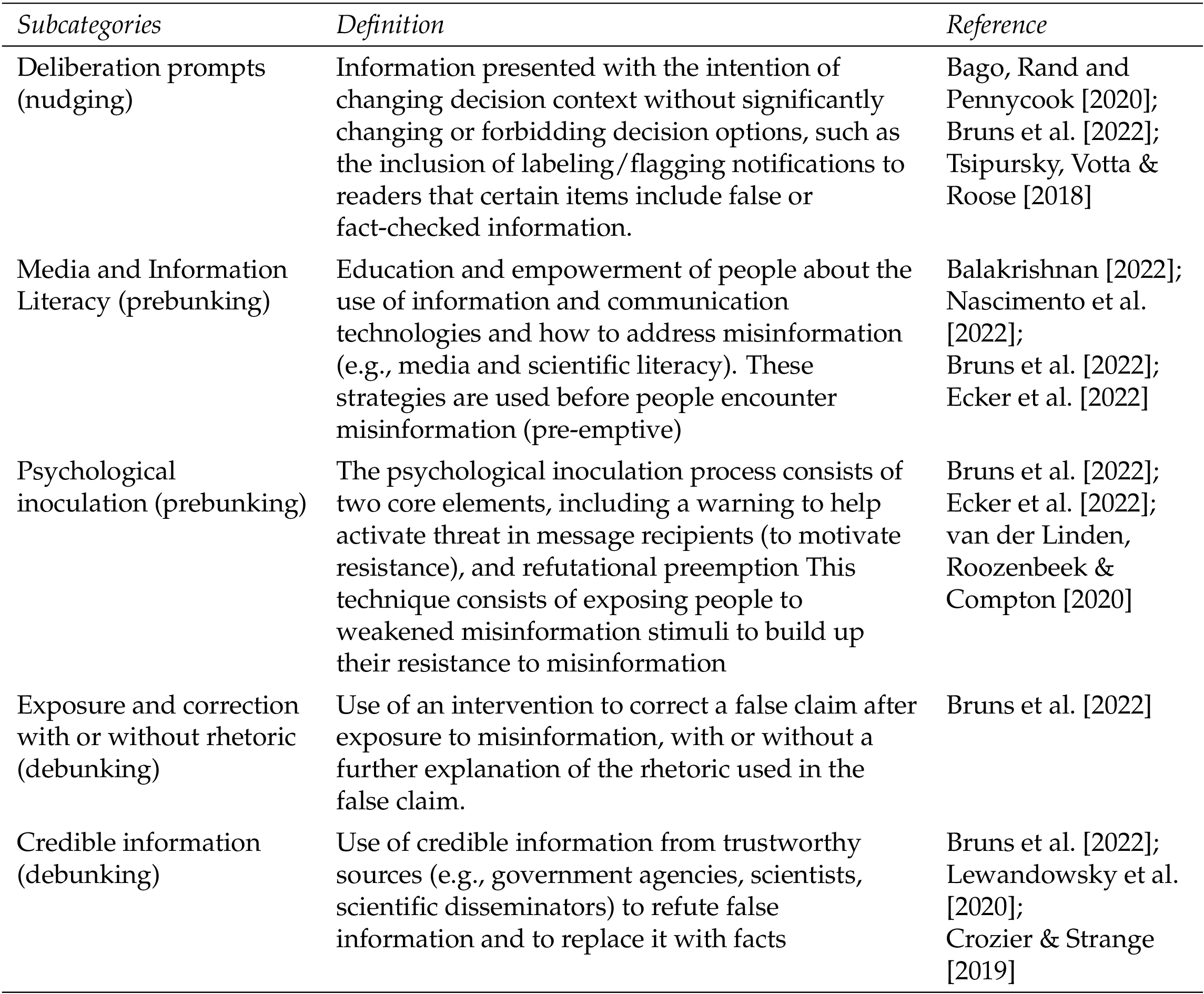

Furthermore, based on previous literature [Bruns et al., 2022; Ecker et al., 2022], we divided all interventions used to combat misinformation into three macro categories: Prebunking, Nudging and Debunking. Within these macro categories, there are five commonly applied strategies (i.e., subcategories): 1) Deliberation prompts; 2) Credible information; 3) Psychological inoculation; 4) Exposure and correction with or without rhetoric; 5) Media and Information Literacy. Subcategories are detailed in Table 2.

Interventions were classified into categories and subcategories by two raters (RA and RQ), and the classification was revised by a third senior researcher (TO). As stated by Bruns et al. [2022], “there are other ways to differentiate these interventions, and that the boundaries are not always clear”. Therefore, the classification used in this review is one possible way of grouping these interventions, based on the frameworks of leading authors in the field [Bruns et al., 2022; Ecker et al., 2022].
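One way to make this two-level classification explicit is a lookup from subcategory to macro category. The mapping below follows the descriptions in Section 4 (inoculation and media and information literacy as prebunking, deliberation prompts as nudging, credible information and exposure and correction as debunking). The Python names are hypothetical, and, as Bruns et al. [2022] caution, real interventions do not always fall cleanly into a single cell.

```python
from enum import Enum
from typing import Iterable, Set


class Macro(Enum):
    """Timing-based macro categories of misinformation interventions."""
    PREBUNKING = "Prebunking"  # before exposure
    NUDGING = "Nudging"        # during exposure
    DEBUNKING = "Debunking"    # after exposure


# Subcategory -> macro category, following the descriptions in Section 4.
SUBCATEGORY_TO_MACRO = {
    "Deliberation prompts": Macro.NUDGING,
    "Credible information": Macro.DEBUNKING,
    "Psychological inoculation": Macro.PREBUNKING,
    "Exposure and correction": Macro.DEBUNKING,
    "Media and Information Literacy": Macro.PREBUNKING,
}


def macro_categories(subcategories: Iterable[str]) -> Set[Macro]:
    """Macro categories used by a study; each appears once even if several
    of the study's subcategories map to it. Unknown labels raise KeyError."""
    return {SUBCATEGORY_TO_MACRO[s] for s in subcategories}


# Hypothetical study combining inoculation with literacy training.
print(macro_categories(["Psychological inoculation",
                        "Media and Information Literacy"]))
```

Because a study’s macro categories are collected in a set, a study that combines inoculation with media and information literacy counts once under prebunking even though it uses two subcategories.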

8 Results

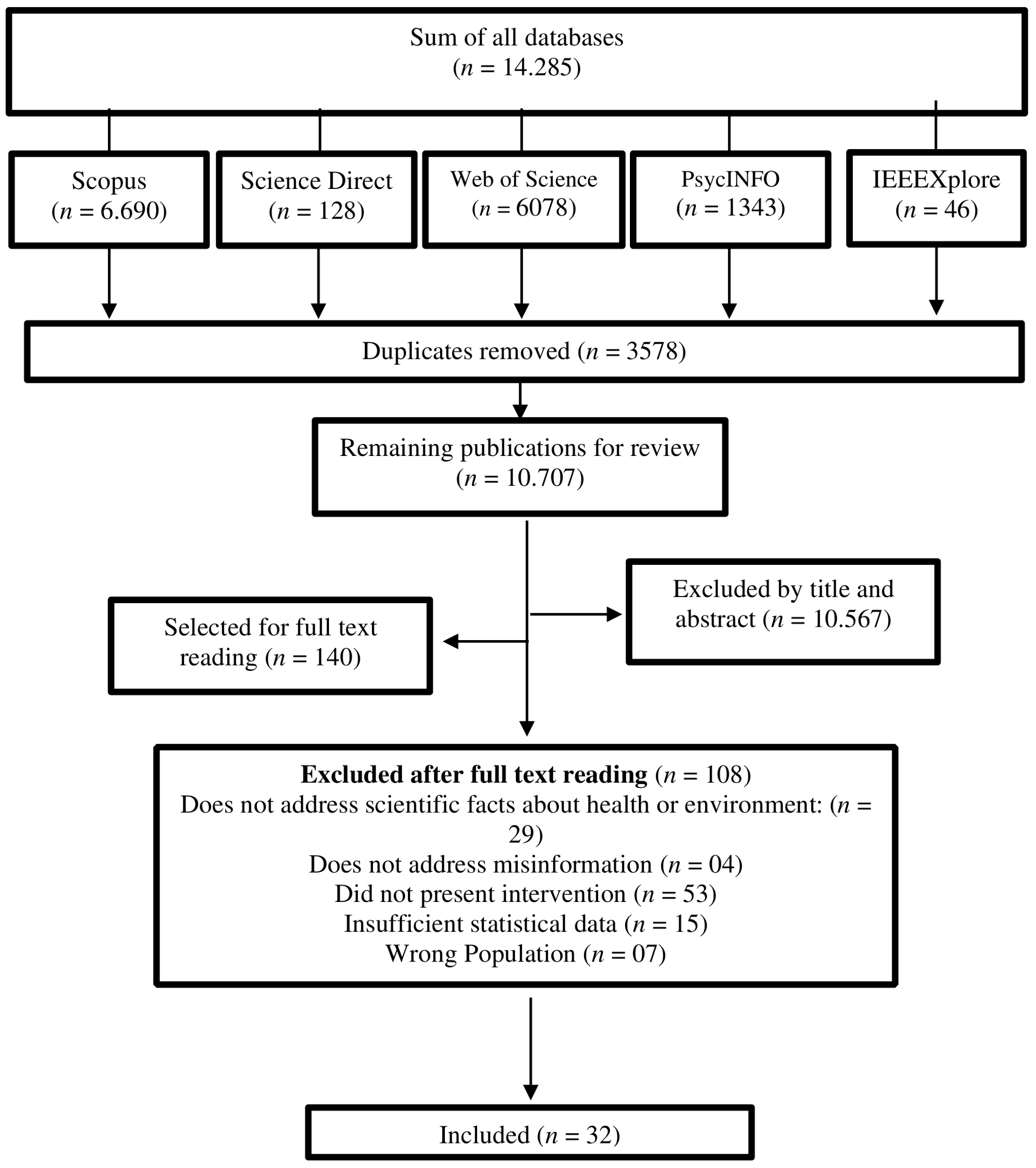

Across all databases, 14,285 articles were initially found. After duplicates were removed, 10,707 records were screened. The agreement rate between TO and RA (who screened article titles and abstracts) was IRR = 0.87 (i.e., they agreed on 9,323 of the 10,707 records screened). Disagreements between raters were resolved through group discussion with a third reviewer (NOC). There was total agreement between raters on the selection of 140 papers for full-text review. During the full-text review, the raters disagreed on the inclusion/exclusion of 17 of the 140 studies (IRR = 0.88); these inconsistencies were resolved through discussion, with a fifth senior researcher (WLM) consulted. There was total agreement among all raters on the final inclusion of 32 papers. Figure 1 presents the steps followed during the identification and selection of articles in accordance with PRISMA [Page et al., 2021]. The database file with the 14,285 articles is available as supplementary material. All data extracted from the included studies were transposed directly into the tables presented throughout the results, and no meta-analysis was performed.
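The two IRR values reported here appear to correspond to simple percent agreement, that is, the number of records on which both raters made the same decision divided by the number of records assessed. A short Python sketch (the function name is hypothetical) reproduces both figures from the counts given in this section:

```python
def percent_agreement(agreements: int, total: int) -> float:
    """Proportion of records on which both raters made the same decision."""
    if total <= 0:
        raise ValueError("total must be positive")
    return agreements / total


title_abstract = percent_agreement(9323, 10707)  # title/abstract screening
full_text = percent_agreement(140 - 17, 140)     # 17 disagreements in 140 full texts
print(f"{title_abstract:.2f} {full_text:.2f}")   # 0.87 0.88
```

Percent agreement does not correct for agreement expected by chance; a chance-corrected index such as Cohen’s kappa would additionally require each rater’s breakdown of include/exclude decisions, which is not reported here.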

Table 3 presents the main characteristics of the articles included and the participants. Each article received a number in ascending order (1, 2, 3…). This numbering will be used to mention the studies throughout the results and discussion.

Most of the included studies were conducted in the United States (56.3%) and the United Kingdom (22%). Of the 32 experimental papers included, 31 were randomized controlled trials (97%). Additionally, nearly half of the articles (43.8%) conducted and presented the results of two or more experiments, totaling 54 experiments. Sample sizes ranged from 90 to 7,079 participants, and the samples from all studies totaled 58,489 participants. A third of the studies (33.4%) did not report the mean age and/or standard deviation for all experiments; therefore, it was not possible to estimate the overall mean age across the analyzed papers. The sex of the participants was evenly divided in most studies (59.4%). Nevertheless, 11 papers (34.4%) used samples composed mostly of women, while two others used samples composed mostly of men (≥ 60%). Education was not reported by almost half of the studies (40.7%). Among those that reported it, most used samples in which ≥ 50% of participants had higher education (63.2%).

9 Types of methods for combating disinformation

Most of the included articles use the term misinformation, followed by misperception (n = 7; studies 1, 10, 11, 16, 18, 21, 23), fake or false news (n = 7; studies 3, 4, 8, 13, 25, 29, 30), and disinformation (n = 2; studies 6, 13). The sum exceeds 32 manuscripts (the total number of included articles) because some authors used more than one concept in the same article. Since all the included articles used the term misinformation or misperception, we use the term “misinformation” throughout the presentation and discussion of our results to standardize the text. Table 4 shows the main characteristics of the interventions used and their effect sizes.

Our findings showed that the most used interventions (macro categories) in the included studies were those based on debunking (62.5%), followed by prebunking (25%) and nudging (12.5%). Among the subcategories, credible information was the most used intervention (34.4%), followed by exposure and correction (31.3%), inoculation (18.8%), deliberation prompts (12.5%), and media and information literacy (9.4%). Some studies used more than one type of intervention and compared their effects between groups; these articles were therefore counted more than once.
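Because a study that tested several intervention types is counted once in each of them, the subcategory percentages add up to more than 100%. The sketch below illustrates this counting rule on hypothetical toy data (not the studies included in this review); the function name is an assumption.

```python
from collections import Counter
from typing import Dict, List


def category_shares(assignments: Dict[str, List[str]]) -> Dict[str, float]:
    """Percentage of studies that used each intervention category.

    A study using several categories is counted once in each of them,
    so the percentages can sum to more than 100%.
    """
    n_studies = len(assignments)
    if n_studies == 0:
        return {}
    # set() ensures a category listed twice for one study is counted once.
    counts = Counter(cat for cats in assignments.values() for cat in set(cats))
    return {cat: 100 * c / n_studies for cat, c in counts.items()}


# Hypothetical toy data (not the studies included in this review).
toy = {
    "A": ["Credible information"],
    "B": ["Credible information", "Exposure and correction"],
    "C": ["Psychological inoculation"],
    "D": ["Deliberation prompts"],
}
shares = category_shares(toy)
print(shares)
print(sum(shares.values()))  # 125.0
```

Here study B contributes to two categories, so four studies yield shares totaling 125%, the same effect that makes the subcategory percentages above exceed 100%.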

Moreover, the interventions focused mostly on health information and news (65.7%), followed by studies that investigated multiple topics (e.g., health, environment, security, and food; 18.8%) and the environment (12.5%). Regarding delayed effects, most studies did not perform a follow-up (84.5%). Only five studies investigated whether the effect size of interventions remained stable after a delay (Studies 1–3, 25 and 29), and only the intervention effect of Study 29 remained stable after 3 months.

Regarding possible bias associated with the magnitude of pre-intervention beliefs (e.g., in science or conspiracy theories), 22 studies (68.9%) used some mechanism to control for this effect. Among these articles, only one (Study 1) found no significant relationship between pre-intervention scientific knowledge (i.e., magnitude of belief in science) and the effect size of the applied interventions. In this sense, most of the analyzed interventions showed evidence of effectiveness among participants holding both conspiracy and anti-conspiracy beliefs. However, the interventions tended to be less effective among individuals with higher conspiratorial belief scores, as well as among individuals with lower scientific knowledge or Cognitive Reflection Test (CRT) scores. This finding suggests that it is more difficult to modify belief in misinformation among individuals who have previously been exposed to conspiracy theories (Studies 4, 6, 8, 12, 13, 15–17, 20, 21, 23). Furthermore, Study 16 suggests that the relationship between pre-intervention beliefs and the effect size of inoculation interventions may be moderated by the topic (e.g., health, politics, environment) of the news and/or information, since the intervention was effective in reducing misinformation about the HPV vaccine but not environmental misinformation. The same occurred in Study 1, where the intervention was effective in reducing misinformation about yellow fever but not about the Zika virus, with evidence of a backfire effect for the latter.

Regarding the type of outcome evaluated, 5 studies (15.7%) evaluated the effectiveness of interventions only for increasing belief in correct information, while 13 (40.7%) evaluated it only for misinformation. Only 14 papers (43.8%) analyzed the impact of interventions on both correct information and misinformation. Measuring an intervention’s effect exclusively on misinformation may bias the research, as there is evidence of interventions that, despite reducing belief in misinformation, also end up undermining belief in correct information (i.e., backfire effect; Studies 1, 20, 30 and 31).

Regarding the evidence of efficacy, all included studies except one (Study 24) were effective in reducing misinformation belief and/or increasing belief in correct information in at least one of their interventions; however, the size of these effects tends to vary between types of intervention. Among the studies that used exposure and correction, six reported small effect sizes (Studies 1, 2, 5, 14, 15 and 22), five reported medium effect sizes (Studies 1, 5, 12, 22 and 32), and two reported large effect sizes (Studies 4 and 11). Among the articles that used credible information, nine had a small effect size (Studies 10, 15, 18, 19, 23, 25, 27, 28 and 31), five had a medium effect size (Studies 9, 10, 17, 19 and 21), and one had a large effect size (Study 9). All studies that used deliberation prompt interventions found at least a small effect size (Studies 6–8 and 13), and one identified a medium effect size (Study 6). For inoculation interventions, five studies found a small effect size (Studies 16, 20, 25, 26 and 29), three had a medium effect size (Studies 20, 25 and 30), and one had a large effect size (Study 29). Additionally, media and information literacy interventions had two small and two medium effect sizes (Studies 3 and 25) and one negligible effect size (Study 24).

Therefore, only interventions focusing on credible information, inoculation, and exposure and correction had a large effect size (Studies 4, 9, 11, and 29). These results should, however, be interpreted with caution, because it was not possible to perform a meta-analysis comparing the average effect size and risk of bias across experiments, mainly because each study used different intervention strategies (e.g., Bad News game, fact-checking) with different types of content (e.g., vignettes, pictures, text). Moreover, the largest effect sizes were observed in groups with high pre-intervention scientific knowledge/belief scores (Study 4), in a study that did not analyze or report the possible effect of pre-existing beliefs on the results (Study 9), or in a study that designed an intervention specific to each type of audience (sensitivity to needles vs. vaccines without needles; moral purity vs. impacts of measles on the skin — Study 11). Thus, there is no gold-standard intervention that can be replicated and applied indiscriminately across different audiences and themes. Nevertheless, most interventions were effective in reducing misinformation for specific themes, populations, and conditions; therefore, they may be cross-culturally adapted to other countries and used under similar circumstances.

10 Discussion

This systematic review aimed to investigate which strategies are most used to confront misinformation in the fields of health and the environment, as well as their effectiveness. Our results indicated that the most used intervention types (macro categories) are those based on debunking, followed by prebunking and nudging. We also identified five commonly used strategies (subcategories): 1) Credible information; 2) Exposure and correction; 3) Psychological inoculation; 4) Deliberation prompts; 5) Media and Information Literacy. Although all intervention types presented some evidence of efficacy, only 15 studies had medium effect sizes (Studies 1, 3, 5, 6, 9, 10, 12, 17, 19–22, 25, 30 and 32) and four had large effect sizes (Studies 4, 9, 11, and 29).

Therefore, most of the available intervention strategies used to confront misinformation about health and the environment have effect sizes between small and medium. Moreover, all interventions were designed for specific audiences, themes, and situations, so further studies are needed to test their effectiveness with different audiences and/or themes.

11 Most used strategies to confront misinformation related to health and the environment

In our review, credible information and exposure and correction were the most used interventions among the included studies (65.7%). This finding is corroborated by previous authors, who pointed out that debunking-based interventions are commonly used in studies seeking to combat misinformation [Bruns et al., 2022; Ecker et al., 2022]. One possible explanation is that debunking interventions focus on confronting specific pieces of misinformation. While this is useful for quickly countering widespread misinformation, it is also a limitation of debunking: because of its narrow focus, it does not build resistance against the many forms and topics of misinformation that may arise in the future. According to Bruns et al. [2022], only interventions based on prebunking and nudging provide such resistance. Even so, three of the four studies that found large effect sizes in our review used debunking interventions (Studies 4, 9, and 11), and only one was based on prebunking (Study 29). However, these effect sizes should be interpreted with caution, given the limitations and possible biases of each study and the absence of a meta-analysis. Our findings suggest that prebunking and nudging interventions are used less often, but this does not necessarily mean that they are less effective, especially since they are underrepresented in our review. We therefore agree with previous authors that more experimental studies testing prebunking and nudging interventions are needed [Bruns et al., 2022; Ecker et al., 2022].

Furthermore, Study 11 showed the importance of understanding behavior and belief systems when designing more effective strategies. The authors found that individuals with higher levels of moral purity are more likely to see vaccines as contaminants of the body, but that messages highlighting the illness caused by under-vaccination can use their higher moral purity to push them toward vaccine support. This finding is aligned with the results of Chan et al. [2017], who examined the factors that make messages effective in countering attitudes and beliefs based on misinformation. The authors reported large effects for presenting misinformation, for debunking, and for the persistence of misinformation in the face of debunking. The persistence effect was stronger, and the debunking effect weaker, when the audience generated reasons supporting the initial misinformation. These results are akin to those of other systematic reviews, which indicate the importance of identifying the motives behind the spread of false information beforehand [Celliers & Hattingh, 2020]. Other reviews have shown that correcting misinformation through fact-checking is not necessarily effective for all subjects, and that corrections coming from family, friends, and others with whom people have a mutual relationship tend to be more effective [Arcos, Gertrudix, Arribas & Cardarilli, 2022]. Similar results were identified in this systematic review. Although credible information was one of the most recurrent strategies among experimental studies on combating misinformation, the results suggest that its effect size may be larger when the information is conveyed by family and friends. Information conveyed by celebrities and healthcare professionals showed a moderate effect size, while information conveyed on television programs had a negligible effect (Study 9).

Other authors also corroborate the results of our review, stating that evidence of the effectiveness of debunking corrections is often inconsistent, with effect sizes varying across studies and several cases of backfire effects [Walter & Murphy, 2018; Walter & Tukachinsky, 2020]. A meta-analysis of research on the correction of misinformation found that corrective messages are more effective when they are coherent and consistent with the audience's worldview, and when they are delivered by the source of the misinformation itself. Conversely, corrections are less successful if the misinformation was attributed to an external credible source, was repeated multiple times before the correction, or if there was a delay between the delivery of the misinformation and the correction [Walter & Tukachinsky, 2020]. This may explain the large effect size of the Study 9 intervention (i.e., in the family and friends condition) in reducing misinformation belief.

Another point to consider concerns pre-existing beliefs: intervention effects were often greater among individuals who already believed in the relevant scientific facts (Studies 4, 6, 8, 12, 13, 15–17, 20, 21, 23). These findings are consistent with other studies and systematic reviews noting that the effects of misinformation depend on several factors, with pre-existing attitudes and beliefs playing a very important role in whether individuals accept misinformation content [Bruns et al., 2022; Ecker et al., 2022; Walter & Tukachinsky, 2020].

Along the same lines, another little-explored topic is the possible thematic moderation of the association between prior beliefs and intervention effects. Only one of the included articles (Study 16) analyzed this effect, showing that, for a health topic (i.e., HPV), individuals with less belief in scientific facts assigned greater credibility to the correction presented. Conversely, the same individuals assigned less credibility to corrections on environmental issues and gun control than individuals with greater belief in scientific facts did. Other literature reviews have highlighted that including variables such as awareness, ethnicity, and religion, from a cultural relativism perspective, can be useful in countering health-related misinformation, particularly about vaccination [Khan, Hussain & Naz, 2022]. The authors suggest that interventions using culturally approved methods may raise awareness and build credible relationships, taking ethnic origins into account through community leadership.

It is also important to emphasize the presence of a geographic bias. Most studies used U.S.-based populations; other countries studied include the United Kingdom, the Netherlands, India, China, Brazil, and Israel. A systematic examination of differences in fact-checking effectiveness among these countries is not yet possible, as the main results have not been widely replicated in similar experiments across countries. Another gap is the lack of studies that experiment with multimodal content. Hameleers, Powell, Van Der Meer and Bos [2020] found that multimodal misinformation was perceived as slightly more credible than textual misinformation, and that the presence of fact-checkers lowered its credibility.

According to Swire-Thompson et al. [2021], presenting the correct information is fundamental, regardless of the format or subcategory used. They argue that if the key ingredients of a correction are present, the format makes little difference; in other words, providing corrective information matters much more than how the correction is presented. Our findings are partially aligned with this hypothesis. Our results indicate that the five subcategories studied (and the three macro categories) were effective in correcting misinformation. However, some of these subcategories are underrepresented, with five or fewer studies each. Nonetheless, our findings corroborate the importance of correction in promoting accurate information, regardless of the subcategory or format used.

12 Limitations and directions for further studies

The current review is valuable because it maps the available interventions and their effectiveness in reducing belief in misinformation or increasing belief in correct information. Nevertheless, our results must be interpreted in light of some limitations. First, although we used five of the largest scientific databases, PubMed/MEDLINE was not searched. Although most publications indexed in PsycInfo, Scopus, and Web of Science are also indexed in PubMed, some experiments may have been missed during our searches.

Second, the PRISMA guidelines were not fully implemented, as we did not perform a meta-analysis. This decision was taken after verifying the heterogeneity of the interventions (e.g., video game apps, fact-checks, texts, images), outcomes (e.g., belief in conspiracy theories, that vaccines cause autism, or that global warming does not exist), outcome measures (e.g., ad hoc instruments), and study designs (e.g., RCT/NRCT, with or without a control group) used in the analyzed experiments. Our decision is aligned with the Cochrane Handbook for Systematic Reviews of Interventions [Higgins et al., 2019], which advises against combining overly diverse outcomes in a meta-analysis.

Third, all included studies reported multiple outcomes (e.g., misinformation beliefs, behaviors, emotions). However, given the large number of outcomes, we opted to extract a single effect size per group/comparison condition, related to beliefs in misinformation or in correct information. That is, when studies reported several relevant outcomes (e.g., the belief that vaccines cause autism and the belief that propolis protects against the Zika virus), the extracted outcome was always the one with the largest effect size. For example, in Study 1, the largest effect size was found for reducing the mistaken belief that propolis provides protection against yellow fever, whereas the effect size for reducing the belief that vaccines are ineffective was negligible. Such evidence suggests that interventions designed to combat misinformation may be effective for specific facts/myths. Furthermore, interventions may also reduce belief in correct information (i.e., a backfire effect), such as the belief that the Zika virus causes neurological problems and increases the risk of microcephaly.
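The extraction rule described above (one effect size per group or comparison condition, keeping the outcome with the largest effect) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' actual procedure: the record layout, the function name `extract_largest_effect`, and the choice to compare signed effect sizes (coded so that positive values mean change in the intended direction, so a backfire effect is not selected merely because its magnitude is large) are all hypothetical, and the outcome labels are placeholders rather than data from the included studies.

```python
from typing import Dict, Iterable, Tuple

# Hypothetical record layout: (comparison, outcome, d), where d > 0 means change
# in the intended direction and d < 0 signals a backfire effect.
Record = Tuple[str, str, float]


def extract_largest_effect(records: Iterable[Record]) -> Dict[str, Tuple[str, float]]:
    """Keep, for each comparison, the outcome with the largest signed effect size."""
    selected: Dict[str, Tuple[str, float]] = {}
    for comparison, outcome, d in records:
        current = selected.get(comparison)
        # Replace the stored outcome only when this one has a strictly larger effect.
        if current is None or d > current[1]:
            selected[comparison] = (outcome, d)
    return selected


if __name__ == "__main__":
    # Placeholder values for illustration only; not taken from any included study.
    example = [
        ("comparison A", "outcome 1", 0.45),
        ("comparison A", "outcome 2", 0.05),
        ("comparison A", "outcome 3", -0.30),
        ("comparison B", "outcome 1", 0.25),
    ]
    print(extract_largest_effect(example))
```

Comparing absolute values instead of signed values would be an equally simple variant, but it could select a backfire effect as the "largest" outcome, which is why the sketch keeps the sign.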

Because only belief outcomes were extracted, some interventions may have different effect sizes for behavioral and emotional outcomes. Future reviews should address this gap, especially considering that a previous random-effects meta-analysis concluded that most interventions designed to mitigate belief in COVID-19 misinformation have little or no effect and that most publications have a high risk of bias [Janmohamed et al., 2021]. In our review, we tried to show that misinformation interventions in health and the environment may be effective under specific circumstances (e.g., specific populations, belief outcomes). We therefore advise that this review be used mainly as a list of the most commonly used interventions, and that their effectiveness be appreciated with caution (since we did not perform a meta-analysis and do not intend to compare which intervention is more effective) and only within the specific context in which they were tested (e.g., a health or environment topic). That is, each researcher or practitioner should assess which intervention is most appropriate for the reality of their target audience and consider its limitations.

Furthermore, because this review was conducted during the pandemic, and given the growing concern in the scientific literature with confronting misinformation, it is crucial that it be updated with experimental studies carried out after the pandemic. Lastly, we also recommend experimental replication and cross-cultural adaptation of the interventions to other countries, especially considering that most interventions were tested only in the U.S.

Acknowledgments

This research is supported by the Foundation for Research Support of the State of Rio de Janeiro, Faperj (Program for Support to Thematic Projects [E-26/211.347/2021], Fluminense Young Researcher [E-26/210.336/2021], PPSUS [E-26/211.299/2021], and Young Scientist of Our State [26.201.299/2021]); the National Council for Scientific and Technological Development, CNPq (Productivity Fellow PQ-2 [309110/2022-0] [311258/2019-0], Emerging Research Groups in Climate Change [406599/2022-0], and Academic Doctorate for Innovation); and the Coordination for the Improvement of Higher Education Personnel, Capes (Finance Code 001).

References

-

Almaliki, M. (2019). Online misinformation spread: a systematic literature map. In ICISDM ’19: Proceedings of the 2019 3rd International Conference on Information System and Data Mining. doi:10.1145/3325917.3325938

-

American Psychological Association (2022). Misinformation and disinformation. Retrieved from https://www.apa.org/topics/journalism-facts/misinformation-disinformation

-

Arcos, R., Gertrudix, M., Arribas, C. & Cardarilli, M. (2022). Responses to digital disinformation as part of hybrid threats: a systematic review on the effects of disinformation and the effectiveness of fact-checking/debunking. Open Research Europe 2, 8. doi:10.12688/openreseurope.14088.1

-

Bago, B., Rand, D. G. & Pennycook, G. (2020). Fake news, fast and slow: deliberation reduces belief in false (but not true) news headlines. Journal of Experimental Psychology: General 149 (8), 1608–1613. doi:10.1037/xge0000729

-

Balakrishnan, V. (2022). Socio-demographic predictors for misinformation sharing and authenticating amidst the COVID-19 pandemic among Malaysian young adults. Information Development. doi:10.1177/02666669221118922

-

Blank, H. & Launay, C. (2014). How to protect eyewitness memory against the misinformation effect: a meta-analysis of post-warning studies. Journal of Applied Research in Memory and Cognition 3 (2), 77–88. doi:10.1037/h0101798

-

Bruns, H., Dessart, F. J. & Pantazi, M. (2022). Covid-19 misinformation: preparing for future crises. An overview of the early behavioural sciences literature. Publications Office of the European Union. doi:10.2760/41905

-

Carey, J. M., Chi, V., Flynn, D. J., Nyhan, B. & Zeitzoff, T. (2020). The effects of corrective information about disease epidemics and outbreaks: evidence from Zika and yellow fever in Brazil. Science Advances 6 (5), eaaw7449. doi:10.1126/sciadv.aaw7449

-

Carr, D. J., Barnidge, M., Lee, B. G. & Tsang, S. J. (2014). Cynics and skeptics: evaluating the credibility of mainstream and citizen journalism. Journalism & Mass Communication Quarterly 91 (3), 452–470. doi:10.1177/1077699014538828

-

Celliers, M. & Hattingh, M. (2020). A systematic review on fake news themes reported in literature. In M. Hattingh, M. Matthee, H. Smuts, I. Pappas, Y. K. Dwivedi & M. Mäntymäki (Eds.), Responsible design, implementation and use of information and communication technology: 19th IFIP WG 6.11 Conference on e-Business, e-Services, and e-Society, I3E 2020 (pp. 223–234). doi:10.1007/978-3-030-45002-1_19

-

Ceretti, E., Covolo, L., Cappellini, F., Nanni, A., Sorosina, S., Taranto, M., … Gelatti, U. (2022). Assessing the state of Web-based communication for public health: a systematic review. European Journal of Public Health 32 (Supplement_3), ckac130.056. doi:10.1093/eurpub/ckac130.056

-

Chan, M.-p. S., Jones, C. R., Hall Jamieson, K. & Albarracín, D. (2017). Debunking: a meta-analysis of the psychological efficacy of messages countering misinformation. Psychological Science 28 (11), 1531–1546. doi:10.1177/0956797617714579

-

Cioe, K., Biondi, B. E., Easly, R., Simard, A., Zheng, X. & Springer, S. A. (2020). A systematic review of patients’ and providers’ perspectives of medications for treatment of opioid use disorder. Journal of Substance Abuse Treatment 119, 108146. doi:10.1016/j.jsat.2020.108146

-

Cohen, J. (2007). A power primer. Tutorials in Quantitative Methods for Psychology 3 (2), 79. doi:10.20982/tqmp.03.2.p079

-

Cook, J. (2022). Understanding and countering misinformation about climate change. In Research anthology on environmental and societal impacts of climate change (pp. 1633–1658). doi:10.4018/978-1-6684-3686-8.ch081

-

Crozier, W. E. & Strange, D. (2019). Correcting the misinformation effect. Applied Cognitive Psychology 33 (4), 585–595. doi:10.1002/acp.3499

-

Datta, S. S., O’Connor, P. M., Jankovic, D., Muscat, M., Ben Mamou, M. C., Singh, S., … Butler, R. (2018). Progress and challenges in measles and rubella elimination in the WHO European region. Vaccine 36 (36), 5408–5415. doi:10.1016/j.vaccine.2017.06.042

-

Ecker, U. K. H., Lewandowsky, S., Cook, J., Schmid, P., Fazio, L. K., Brashier, N., … Amazeen, M. A. (2022). The psychological drivers of misinformation belief and its resistance to correction. Nature Reviews Psychology 1 (1), 13–29. doi:10.1038/s44159-021-00006-y

-

Freeman, J. L., Caldwell, P. H. Y. & Scott, K. M. (2023). How adolescents trust health information on social media: a systematic review. Academic Pediatrics 23 (4), 703–719. doi:10.1016/j.acap.2022.12.011

-

Gostin, L. O. (2014). Global polio eradication: espionage, disinformation, and the politics of vaccination. The Milbank Quarterly 92 (3), 413–417. doi:10.1111/1468-0009.12065

-

Grabner-Kräuter, S. & Bitter, S. (2015). Trust in online social networks: a multifaceted perspective. Forum for Social Economics 44 (1), 48–68. doi:10.1080/07360932.2013.781517

-

Guess, A., Nagler, J. & Tucker, J. (2019). Less than you think: prevalence and predictors of fake news dissemination on Facebook. Science Advances 5 (1), eaau4586. doi:10.1126/sciadv.aau4586

-

Hameleers, M., Powell, T. E., Van Der Meer, T. G. L. A. & Bos, L. (2020). A picture paints a thousand lies? The effects and mechanisms of multimodal disinformation and rebuttals disseminated via social media. Political Communication 37 (2), 281–301. doi:10.1080/10584609.2019.1674979

-

Hardy, B. W., Tallapragada, M., Besley, J. C. & Yuan, S. (2019). The effects of the “war on science” frame on scientists’ credibility. Science Communication 41 (1), 90–112. doi:10.1177/1075547018822081

-

Higgins, J. P. T., Thomas, J., Chandler, J., Cumpston, M., Li, T., Page, M. J. & Welch, V. A. (Eds.) (2019). Cochrane handbook for systematic reviews of interventions. doi:10.1002/9781119536604

-

Janmohamed, K., Walter, N., Nyhan, K., Khoshnood, K., Tucker, J. D., Sangngam, N., … Kumar, N. (2021). Interventions to mitigate COVID-19 misinformation: a systematic review and meta-analysis. Journal of Health Communication 26 (12), 846–857. doi:10.1080/10810730.2021.2021460

-

Janmohamed, K., Walter, N., Sangngam, N., Hampsher, S., Nyhan, K., De Choudhury, M. & Kumar, N. (2022). Interventions to mitigate vaping misinformation: a meta-analysis. Journal of Health Communication 27 (2), 84–92. doi:10.1080/10810730.2022.2044941

-

Jones-Jang, S. M., Mortensen, T. & Liu, J. (2021). Does media literacy help identification of fake news? Information literacy helps, but other literacies don’t. American Behavioral Scientist 65 (2), 371–388. doi:10.1177/0002764219869406

-

Kapantai, E., Christopoulou, A., Berberidis, C. & Peristeras, V. (2021). A systematic literature review on disinformation: toward a unified taxonomical framework. New Media & Society 23 (5), 1301–1326. doi:10.1177/1461444820959296

-

Khan, N., Hussain, N. & Naz, A. (2022). Awareness, social media, ethnicity and religion: are they responsible for vaccination hesitancy? A systematic review with annotated bibliography. Clinical Social Work and Health Intervention 13 (4), 18–23. doi:10.22359/cswhi_13_4_04

-

Kolmes, S. A. (2011). Climate change: a disinformation campaign. Environment: Science and Policy for Sustainable Development 53 (4), 33–37. doi:10.1080/00139157.2011.588553

-

Lazer, D. M. J., Baum, M. A., Benkler, Y., Berinsky, A. J., Greenhill, K. M., Menczer, F., … Zittrain, J. L. (2018). The science of fake news. Science 359 (6380), 1094–1096. doi:10.1126/science.aao2998

-

Lazić, A. & Žeželj, I. (2021). A systematic review of narrative interventions: lessons for countering anti-vaccination conspiracy theories and misinformation. Public Understanding of Science 30 (6), 644–670. doi:10.1177/09636625211011881

-

Lei, Y., Pereira, J. A., Quach, S., Bettinger, J. A., Kwong, J. C., Corace, K., … Guay, M. (2015). Examining perceptions about mandatory influenza vaccination of healthcare workers through online comments on news stories. PLoS ONE 10 (6), e0129993. doi:10.1371/journal.pone.0129993

-

Lewandowsky, S., Cook, J., Ecker, U., Albarracín, D., Kendeou, P., Newman, E. J., … Zaragoza, M. S. (2020). The debunking handbook 2020. doi:10.17910/b7.1182

-

Lubchenco, J. (2017). Environmental science in a post-truth world. Frontiers in Ecology and the Environment 15 (1), 3. doi:10.1002/fee.1454

-

Malik, A., Bashir, F. & Mahmood, K. (2023). Antecedents and consequences of misinformation sharing behavior among adults on social media during COVID-19. SAGE Open 13 (1). doi:10.1177/21582440221147022

-

Nascimento, I. J. B., Pizarro, A. B., Almeida, J. M., Azzopardi-Muscat, N., Gonçalves, M. A., Björklund, M. & Novillo-Ortiz, D. (2022). Infodemics and health misinformation: a systematic review of reviews. Bulletin of the World Health Organization 100 (9), 544–561. doi:10.2471/blt.21.287654

-

Nsoesie, E. O. & Oladeji, O. (2020). Identifying patterns to prevent the spread of misinformation during epidemics. Harvard Kennedy School Misinformation Review 1 (3). doi:10.37016/mr-2020-014

-

Ortiz-Ospina, E. (2019, September 18). The rise of social media. Our World in Data. Retrieved from https://ourworldindata.org/rise-of-social-media

-

Ouzzani, M., Hammady, H., Fedorowicz, Z. & Elmagarmid, A. (2016). Rayyan — a web and mobile app for systematic reviews. Systematic Reviews 5, 210. doi:10.1186/s13643-016-0384-4

-

Paavola, J. (2017). Health impacts of climate change and health and social inequalities in the UK. Environmental Health 16 (S1), 113. doi:10.1186/s12940-017-0328-z

-

Page, M. J., McKenzie, J. E., Bossuyt, P. M., Boutron, I., Hoffmann, T. C., Mulrow, C. D., … Moher, D. (2021). Updating guidance for reporting systematic reviews: development of the PRISMA 2020 statement. Journal of Clinical Epidemiology 134, 103–112. doi:10.1016/j.jclinepi.2021.02.003

-

Pennycook, G., McPhetres, J., Zhang, Y., Lu, J. G. & Rand, D. G. (2020). Fighting COVID-19 misinformation on social media: experimental evidence for a scalable accuracy-nudge intervention. Psychological Science 31 (7), 770–780. doi:10.1177/0956797620939054

-

Pluviano, S., Watt, C., Ragazzini, G. & Della Sala, S. (2019). Parents’ beliefs in misinformation about vaccines are strengthened by pro-vaccine campaigns. Cognitive Processing 20 (3), 325–331. doi:10.1007/s10339-019-00919-w

-

Sharma, A., Mishra, M., Shukla, A. K., Kumar, R., Abdin, M. Z. & Kar Chowdhuri, D. (2019). Corrigendum to “Organochlorine pesticide, endosulfan induced cellular and organismal response in Drosophila melanogaster” [J. Hazard. Mater. 221–222 (2012) 275–287 https://doi.org/10.1016/j.jhazmat.2012.04.045]. Journal of Hazardous Materials 379, 120907. doi:10.1016/j.jhazmat.2019.120907

-

Steffens, N. K., LaRue, C. J., Haslam, C., Walter, Z. C., Cruwys, T., Munt, K. A., … Tarrant, M. (2021). Social identification-building interventions to improve health: a systematic review and meta-analysis. Health Psychology Review 15 (1), 85–112. doi:10.1080/17437199.2019.1669481

-

Sullivan, G. M. & Feinn, R. (2012). Using effect size – or why the P value is not enough. Journal of Graduate Medical Education 4 (3), 279–282. doi:10.4300/jgme-d-12-00156.1

-

Swire-Thompson, B., Cook, J., Butler, L. H., Sanderson, J. A., Lewandowsky, S. & Ecker, U. K. H. (2021). Correction format has a limited role when debunking misinformation. Cognitive Research: Principles and Implications 6, 83. doi:10.1186/s41235-021-00346-6

-

Tandoc, E. C. (2019). The facts of fake news: a research review. Sociology Compass 13 (9), e12724. doi:10.1111/soc4.12724

-

Tandoc, E. C., Lim, Z. W. & Ling, R. (2018). Defining “fake news”: a typology of scholarly definitions. Digital Journalism 6 (2), 137–153. doi:10.1080/21670811.2017.1360143

-

Thaler, A. D. & Shiffman, D. (2015). Fish tales: combating fake science in popular media. Ocean & Coastal Management 115, 88–91. doi:10.1016/j.ocecoaman.2015.04.005

-

Tsamakis, K., Tsiptsios, D., Stubbs, B., Ma, R., Romano, E., Mueller, C., … Dragioti, E. (2022). Summarising data and factors associated with COVID-19 related conspiracy theories in the first year of the pandemic: a systematic review and narrative synthesis. BMC Psychology 10, 244. doi:10.1186/s40359-022-00959-6

-

Tsipursky, G., Votta, F. & Roose, K. M. (2018). Fighting fake news and post-truth politics with behavioral science: the Pro-Truth Pledge. Behavior and Social Issues 27, 47–70. doi:10.5210/bsi.v27i0.9127

-

van der Linden, S., Leiserowitz, A., Rosenthal, S. & Maibach, E. (2017). Inoculating the public against misinformation about climate change. Global Challenges 1 (2), 1600008. doi:10.1002/gch2.201600008

-

van der Linden, S., Roozenbeek, J. & Compton, J. (2020). Inoculating against fake news about COVID-19. Frontiers in Psychology 11, 566790. doi:10.3389/fpsyg.2020.566790

-

van Dijk, F. & Caraballo-Arias, Y. (2021). Where to find evidence-based information on occupational safety and health? Annals of Global Health 87 (1), 6. doi:10.5334/aogh.3131

-

Vraga, E. K. & Tully, M. (2021). News literacy, social media behaviors, and skepticism toward information on social media. Information, Communication & Society 24 (2), 150–166. doi:10.1080/1369118x.2019.1637445

-

Walter, N. & Murphy, S. T. (2018). How to unring the bell: a meta-analytic approach to correction of misinformation. Communication Monographs 85 (3), 423–441. doi:10.1080/03637751.2018.1467564

-

Walter, N. & Tukachinsky, R. (2020). A meta-analytic examination of the continued influence of misinformation in the face of correction: how powerful is it, why does it happen, and how to stop it? Communication Research 47 (2), 155–177. doi:10.1177/0093650219854600

-

Wang, Y. & Liu, Y. (2022). Multilevel determinants of COVID-19 vaccination hesitancy in the United States: a rapid systematic review. Preventive Medicine Reports 25, 101673. doi:10.1016/j.pmedr.2021.101673

-

Wang, Y., McKee, M., Torbica, A. & Stuckler, D. (2019). Systematic literature review on the spread of health-related misinformation on social media. Social Science & Medicine 240, 112552. doi:10.1016/j.socscimed.2019.112552

-

Whitehead, H. S., French, C. E., Caldwell, D. M., Letley, L. & Mounier-Jack, S. (2023). A systematic review of communication interventions for countering vaccine misinformation. Vaccine 41 (5), 1018–1034. doi:10.1016/j.vaccine.2022.12.059

-

Yang, Q. & Wu, S. (2021). How social media exposure to health information influences Chinese people’s health protective behavior during air pollution: a theory of planned behavior perspective. Health Communication 36 (3), 324–333. doi:10.1080/10410236.2019.1692486

-

Yousuf, H., van der Linden, S., Bredius, L., van Essen, G. A., Sweep, G., Preminger, Z., … Hofstra, L. (2021). A media intervention applying debunking versus non-debunking content to combat vaccine misinformation in elderly in the Netherlands: a digital randomised trial. eClinicalMedicine 35, 100881. doi:10.1016/j.eclinm.2021.100881

Authors

Thaiane Oliveira. Professor in the Communication Graduate Program at Federal Fluminense University.

@ThaianeOliveira E-mail: thaianeoliveira@id.uff.br

Nicolas de Oliveira Cardoso. Postdoctoral researcher in Psychology at Pontificia Universidade Católica of Rio Grande do Sul.

E-mail: nicolas.deoliveira@hotmail.com

Wagner de Lara Machado. Professor of Psychology at Pontificia Universidade Católica of Rio Grande do Sul.

E-mail: wagner.machado@pucrs.br

Reynaldo Aragon Gonçalves. Doctoral student in the Communication Graduate Program at Federal Fluminense University.

@ReyGoncalves E-mail: reynaldogoncalve@id.uff.br

Rodrigo Quinan. Doctoral student in the Communication Graduate Program at Federal Fluminense University.

E-mail: rodrigoquinan@id.uff.br

Eduarda Zorgi Salvador. Graduate student in Psychology at Pontificia Universidade Católica of Rio Grande do Sul.

E-mail: eduarda.salvador@edu.pucrs.br

Camila Almeida. Graduate student in Media Studies at Federal Fluminense University.

E-mail: almeidacamila@id.uff.br

Aline Paes. Professor in the Computer Science Graduate Program at Federal Fluminense University.

@AlinempaesPaes E-mail: alinepaes@ic.uff.br

Supplementary material

Available at https://doi.org/10.22323/2.23010901

References of the 32 included studies

Endnotes

1 Digital social networks cannot be blamed solely for the production of misinformation; several studies [e.g., Lazer et al., 2018] have shown that misinformation has been present since the 15th century. However, it is important to acknowledge that social networks have played a critical role in the widespread circulation and dissemination of information to populations worldwide. Therefore, we use 2010 as a starting point, since it marked a significant increase in the use of social media globally [Ortiz-Ospina, 2019].