1 Context

The Internet has transformed the public sphere. The physical space occupied by the public has, to a large extent, been replaced by multiple virtual spaces that promote conversation and participation, and encourage citizens to be more active [Coleman, 2001 ]. Social scientists [Pitrelli, 2017 ; Boccia Artieri, 2015 ; Rogers, 2015 ; Lovejoy, Waters and Saxton, 2012 ; Waters et al., 2009 ] have demonstrated its value as a new medium for civil and political change and its capacity for revolutionising the collective behaviour of human beings [Watts, 2007 ].

Science communication is one of the academic fields in which interest in the digital dimension is gaining importance, in areas ranging from the analysis of scientific controversies or techniques, citizen science and the definition of new forms and practices for science journalism, to open science [Su et al., 2017 ; Pitrelli, 2017 ; Rigutto, 2017 ; Grand et al., 2016 ; Olsson, 2016 ; López-Pérez and Olvera-Lobo, 2016b ; López-Pérez and Olvera-Lobo, 2016a ; López-Pérez and Olvera-Lobo, 2015 ; Olvera-Lobo and López-Pérez, 2014 ; Olvera-Lobo and López-Pérez, 2013a ; Olvera-Lobo and López-Pérez, 2013b ; Mahrt and Puschmann, 2014 ; Colson, 2011 ; Kouper, 2010 ].

In the last decade and, coinciding with the generalisation of Web 2.0 usage, the conceptualisation of science communication and its study focus have undergone changes brought about by the transformation of the relationship between science and society, generated to a large extent by the new conversational space offered by the Internet [Su et al., 2017 ; Pitrelli, 2017 ; Brown, 2016 ; Grand et al., 2016 ; Saffer, Sommerfeldt and Taylor, 2013 ; Yang, Kang and Johnson, 2010 ; Weigold and Treise, 2004 ].

This new impetus on dialogue between scientists and citizens has been thus reflected in the evolution of academic interest, from the public awareness of science to citizen involvement; from communication to dialogue; from science and society to science with and for society [Bucchi and Neresini, 1995 ].

This new situation has been mirrored in scientific literature with a paradigm shift from the deficit model — in which the general public is defined negatively due to its lack of knowledge — to the participative model — in which the general public is invited to form part of the scientific endeavour.

Thus, public engagement in science is the latest paradigm to have been consolidated within the academic sphere. This model, which goes beyond one-directional communication, involving citizens in the development of R&D+i, has taken on new dimensions upon integrating itself as one of the key elements within responsible research and innovation (RRI), a concept that is having an effect on European scientific policies via the Horizon 2020 programme [Owen, Macnaghten and Stilgoe, 2012 ].

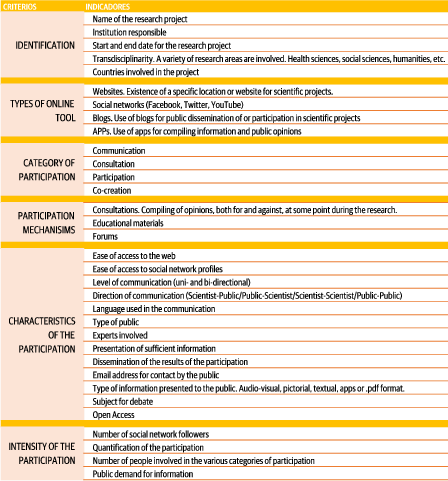

Despite the study and definition of public engagement in science being tackled in the last decade, scientific studies on the evaluation of the online sphere are still scarce. This is due to the fact that the model of digital public engagement in science is still young, and to the changing nature of the Internet itself. In this regard, this study contributes towards the generation of an academic line with the design of an analysis methodology validated by international experts in science communication, responsible research and innovation, science education and social networks, via the Delphi method. A total of 34 integrated indicators were identified and structured into six interrelated criteria, conceived for compiling data that aids in analysing and explaining how interactions between science and society are generated in this new digital scenario.

Furthermore, this article deals with the characteristics of the new public engagement in science model from the perspective of responsible research and innovation set out in the scientific literature that has been taken into account for the design of the indicators evaluated by the experts. The adaptation of the Delphi method to the specific nature of this investigation is described. Lastly, it presents the final proposal of indicators and evaluation criteria agreed by the experts following three rounds of consultation.

The questions on which the study is based are: a) do the experts on different facets of science communication and Web 2.0 consider the Internet to be an effective stage for developing the public engagement in science model? and b) which criteria and indicators facilitate the understanding and analysis of public engagement in science via digital tools?

1.1 The public engagement in science model from the dimension of RRI

To understand the model of public engagement in science from the dimension of responsible research and innovation (RRI), it is necessary to go over the epistemological approaches covered in the literature on this concept. What is beneficial for society is inherent to the objectives of science [Glerup and Horst, 2014 ]. This assertion serves as a starting premise for understanding the main characteristic, which refers to the “new governance of science” [Guston and Sarewit, 2002 ; Irwin, 2006 ], in which scientists should produce contributions of value for society that respond to preferences expressed by citizens and which are subject to their scrutiny [Cho and Relman, 2010 ; Bubela et al., 2009 ; Abraham and Davis, 2005 ].

The concept is evolving, and the definition of Von Schomberg [ 2011 , p. 9] is the one that has seen the most academic acceptance: “Responsible Research and Innovation is a transparent, interactive process by which societal actors and innovators become mutually responsive to each other with a view to the (ethical) acceptability, sustainability and societal desirability of the innovation process and its marketable products (in order to allow a proper embedding of scientific and technological advances in our society)”.

Kupper et al. [ 2015 ] look at this perspective in more detail and state that responsible research and innovation should be described as that which requires the involvement of a wide range of social partners throughout the whole research process, in order to orientate it towards results that are ethically acceptable, sustainable and desired by society. It is a form of anticipatory research and innovation [Guston, 2014 ; Sutcliffe, 2011 ; Barben et al., 2008 ] that aims to ensure that the research results are positive for society in the future. This is an idea expanded on by Stilgoe, Owen and Macnaghten [ 2013 , p. 1570], who specify that “responsible innovation means taking care of the future through collective stewardship of science and innovation in the present”.

This line considers the 2012 European Union proposal that defines responsible research and innovation as that in which “Societal actors work together during the whole research and innovation process in order to better align both the process and its outcomes, with the values, needs and expectations of European society”.

The EC has identified six policy agendas for the RRI framework [European Union, 2014 ]: governance (as the umbrella for all the other dimensions); science education; ethics; open access; gender; and public engagement in science.

Public engagement in science is considered to be the core feature of RRI [Marschalek, 2017 ; Grand et al., 2016 ; FraunhoferISI and TechnopolisGroup, 2012 ; European Union, 2014 ].

Although this concept appeared in scientific literature over a decade ago, there is still no consensus on what public engagement in science encompasses.

Rowe and Frewer [ 2005 ] consider public engagement in science as a combination of public communication, consultation and intervention within the framework of research and innovation. For their part, Rarn, Mejlgaard and Rask [ 2014 ] start from the categorisation of Rowe and Frewer [ 2005 ] and propose a classification that covers different public engagement initiatives, including public communication, public activism, public consultation and public deliberation.

In turn, Bucchi and Neresini [ 1995 ] categorise public engagement in science into standardised (public surveys, participative evaluation of technology and democratic initiatives of consensus) and non-standardised or spontaneous (local protests, social movements, research carried out by the community and patient associations).

Other academics such as Bonney et al. [ 2009 ] define public engagement according to the different stages of the research and innovation process in which citizens can participate: I) choose or define question(s) for study; II) gather information and resources; III) develop explanations (hypotheses); IV) collect samples and/or record data; V) analyze data; VI) interpret data and draw conclusions; VII) disseminate conclusions/translate results into action; VIII) discuss results and ask new questions. Thus, depending on the level of public involvement, they describe three ways in which citizens can become involved in the R&D+i process, namely, contributed projects, collaborative projects and co-created projects.

For Klüver et al. [ 2014 ], the majority of public engagement activities are based on citizen involvement in the stages of scientific process relating to: I) setting the research and innovation agenda; II) supervision and evaluation of the research and innovation; III) active involvement in research and funding thereof; IV) contribution of specific knowledge about their environment; V) compilation of data and dissemination of research results.

This concept has also been associated with the involvement of researchers in the communication of scientific results [Bauer and Jensen, 2011 ]. Public conferences, media interviews, publishing of promotional books, participation in public debates, and collaboration with non-governmental organisations, are some of the activities included in this definition.

For its part, the European Commission [ 2015 ] addresses this key RRI dimension through the design of three types of indicator that permit its evaluation:

- Process indicators: number and level of development of formal procedures for involving the public (conferences, consensus, referendum, amongst others), and number of citizen science projects.

- Results indicators: number and percentages referring to the financing of projects and initiatives directed by citizens or civil organisations; number of consultation committees that include citizens and civil organisations; percentage of citizens and social organisations that have special responsibilities in the committees and consultation bodies; and number of citizens participating in citizen science projects.

- Perception indicators: level of interest of citizens in topics related to science and technology; considerations on what the responsibility of science should be; and percentage of people who see science as part of the solution rather than a problem.

2 Methods

With the purpose of obtaining criteria and indicators agreed upon and validated by experts within the sphere of public science communication and social networks, the Delphi method was applied [Osborne et al., 2003 ; Clayton, 1997 ; Murry and Hammons, 1995 ]. This method has been used previously to design methodological proposals for analysing the communication of science [Ouariachi, Gutiérrez Pérez and Olvera-Lobo, 2017 ; Ouariachi, Olvera-Lobo and Gutiérrez-Pérez, 2017 ; Seakins and Dillon, 2013 ] and science education [Smith and Simpson, 1995 ; Blair and Uhl, 1993 ; Doyle, 1993 ]. It involves a systematic, interactive group process aimed at obtaining opinions and consensus from the experiences and subjective judgements of a group of experts [Scapolo and Miles, 2006 ; Turoff et al., 2006 ; Osborne et al., 2003 ]. The Delphi method is distinguished from other methods of interaction by two characteristics [Dailey and Holmberg, 1990 ; Whitman, 1990 ; Cyphert and Gant, 1971 ; Cochran, 1983 ; Uhl, 1983 ]. Firstly, the process is anonymous. Secondly, reiterated responses are obtained from the group of experts.

The key aspect in the development of the working methodology was to obtain the consensus of the group, but with the maximum autonomy on the part of the participants. In order to do this, three rounds of consultations were carried out in an interactive and anonymous process which allowed the participants to provide their opinions, receive the conclusions from the rest of the group in each round and, lastly, reconsider their opinions in a final stage.

2.1 Stages of the methodological process

In the development of the Delphi method the iterative process culminates when the so-called saturation criterion is fulfilled. This is determined by consensus (understood as the level of convergence of the individual estimations with an average minimum score of 2 points out of 3), and stability (understood as the non-variability of the expert opinions between the successive rounds, independently of the level of convergence). Both conditions were reached in the third round, in which the process was concluded.
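The two conditions of the saturation criterion can be sketched in a few lines of Python. This is only an illustrative sketch: the consensus threshold of 2 points out of 3 comes from the text, whereas the numerical tolerance used for stability, the function names and the example averages are assumptions introduced here, since the text defines stability only qualitatively as the non-variability of opinions between rounds.

```python
CONSENSUS_THRESHOLD = 2.0  # minimum average score out of 3, as stated in the text
STABILITY_TOLERANCE = 0.2  # assumption: largest change in the average still counted as stable


def has_consensus(average):
    """Consensus: the panel's average score reaches the threshold."""
    return average >= CONSENSUS_THRESHOLD


def is_stable(previous_average, current_average, tolerance=STABILITY_TOLERANCE):
    """Stability: the average barely moves between two successive rounds."""
    # Rounding guards against floating-point noise in the subtraction.
    return round(abs(current_average - previous_average), 2) <= tolerance


def evaluate_indicator(previous_average, current_average):
    """Return (consensus, stable) for one indicator; the two are judged independently."""
    return has_consensus(current_average), is_stable(previous_average, current_average)


# Illustrative averages only: (previous round, current round)
examples = {
    "indicator A": (2.8, 2.8),
    "indicator B": (1.9, 1.8),
    "indicator C": (1.7, 2.4),
}
for name, (previous, current) in examples.items():
    consensus, stable = evaluate_indicator(previous, current)
    print(f"{name}: consensus={consensus}, stable={stable}")
```

Keeping the two checks separate mirrors the definition above, in which stability is assessed independently of the level of convergence: an indicator may be stable without reaching consensus, or reach consensus without yet being stable.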

2.2 Stage 1: design of the protocol and selection of the group of experts

Stage 1 focused on the definition of the problem and the design of the technique. Following the establishment of the coordinating research team, comprising members of the Access and Evaluation of Scientific Information research group affiliated to the University of Granada (Spain), the problem was defined and the stages of the process to follow were determined, along with the criteria for the selection of experts, the characteristics of the instrument for the gathering of opinions (criteria, indicators, scope and structure), the system of communication with the participants, the process execution calendar and the results evaluation system.

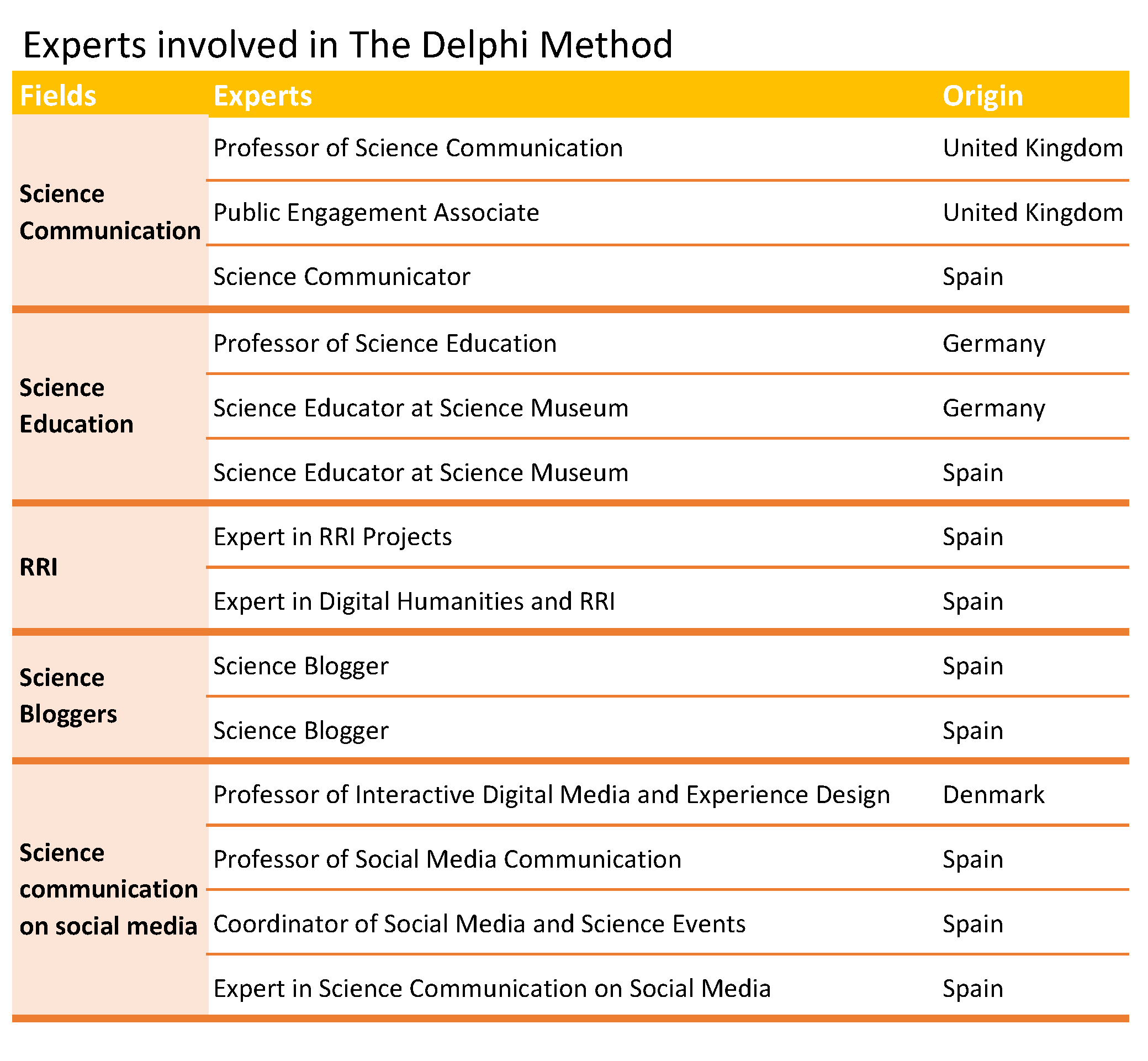

In relation to the selection of experts, the minimum number established in a Delphi panel is ten [Cochran, 1983 ]. In order to reduce the level of error and increase reliability, a total of twenty-five experts were selected in different spheres relating to the study topic, namely, public science communication, science education, responsible research and innovation, science bloggers and social networks. Furthermore, the specialists had different geographical and cultural origins (United Kingdom, Germany, Finland, United States, Belgium, Holland, Denmark and Spain). Gender balance in the sample was also taken into account. Of the twenty-five experts contacted, fifteen were women and ten were men. This balance was maintained for the fourteen that accepted and participated in the first round (Table 1 ). The number of expert participants in the second round dropped to thirteen, and in the third it stood at ten.
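The panel figures given above can be turned into response rates with a short calculation. The sketch below uses only the counts reported in the text (twenty-five experts contacted; fourteen, thirteen and ten participants in the three rounds; a minimum panel size of ten); the variable and function names are illustrative.

```python
MINIMUM_PANEL_SIZE = 10  # minimum Delphi panel size cited in the text
CONTACTED = 25           # experts contacted
PARTICIPANTS = {"round 1": 14, "round 2": 13, "round 3": 10}


def response_rates(participants, contacted):
    """Share of the contacted experts who answered each round."""
    return {label: count / contacted for label, count in participants.items()}


rates = response_rates(PARTICIPANTS, CONTACTED)
for label, rate in rates.items():
    meets_minimum = PARTICIPANTS[label] >= MINIMUM_PANEL_SIZE
    print(
        f"{label}: {PARTICIPANTS[label]} experts "
        f"({rate:.0%} of those contacted), meets minimum panel size: {meets_minimum}"
    )
```

With these counts, the panel stays at or above the minimum of ten in every round, although the third round sits exactly at that minimum.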

The reason for selecting experts in the spheres of public science communication, RRI and social networks responds to the interest in creating a scientifically validated tool that includes all of the elements that make it possible to discover whether the public participates in research projects driven by scientific institutions via the Internet, the manner of this participation and its effectiveness. To bring together all of the criteria and indicators that help in the extraction of this information, guidance is essential from experts who are aware, from a practical and theoretical point of view, of the advantages and disadvantages of this channel, along with the characteristics that make interaction between science and society effective for both parties.

It is also important to draw attention to the fact that the Delphi method demands the consultation of experts in the field who, thanks to their specific knowledge on the material analysed, are accredited to validate the evaluation tool designed.

This initial methodological approach does not prevent the tool from being extended and improved in future studies, drawing on the results of empirical work and on the contributions of a non-expert public via other types of qualitative methodology that do integrate this segment, such as focus groups.

The criteria that took precedence in the selection of experts were publications, professional and academic experience in the field, social impact (this item was taken into account mainly in relation to science bloggers), training, and coordination and organisation of international projects that involved the participation of the public in the research process, or which were linked to responsible research and innovation.

2.3 Stage 2: design of the instrument and communication with the experts

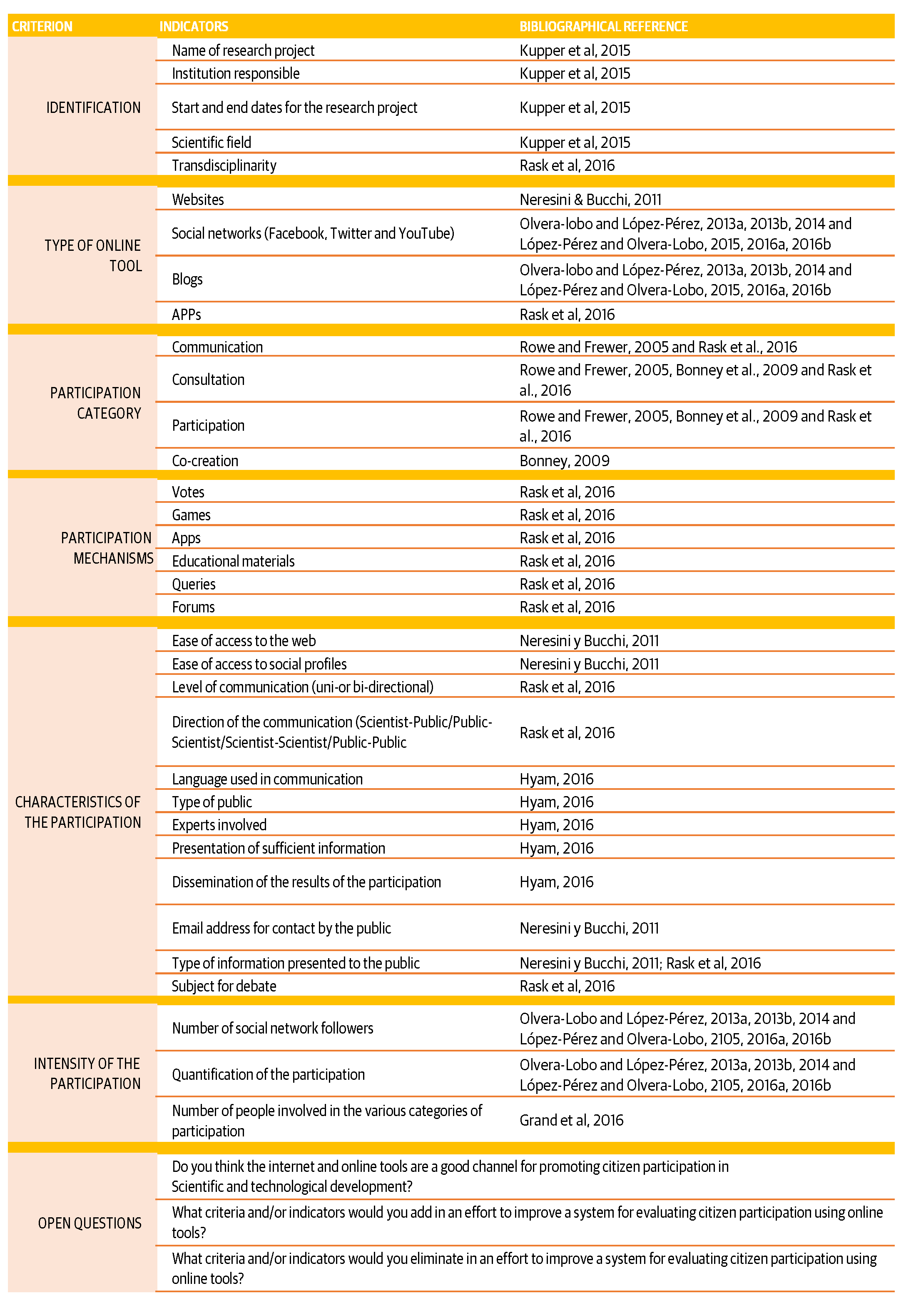

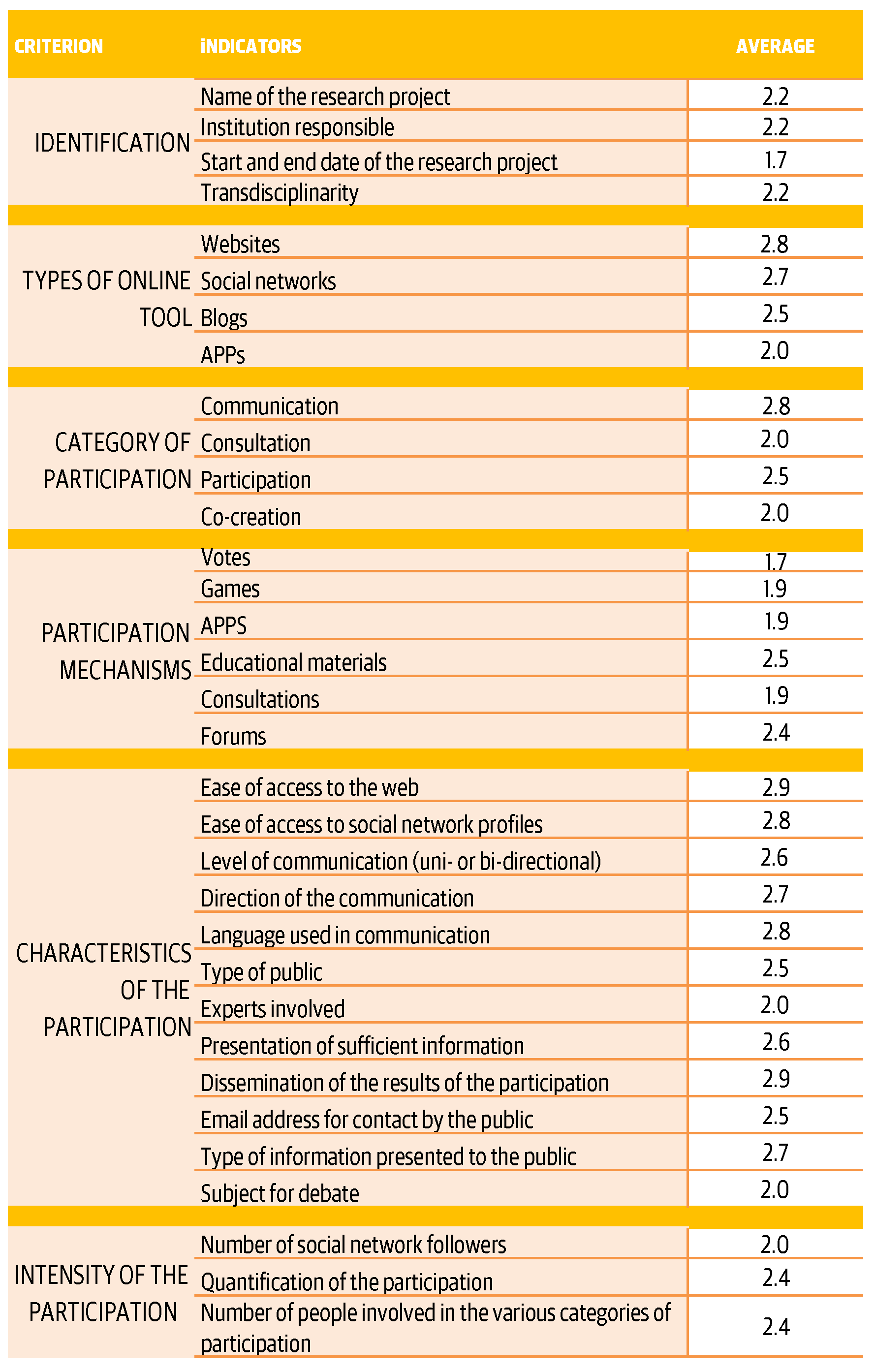

The questionnaire created for this paper is structured around six criteria based on the definitions and approaches to the concept of public engagement in science considered by the scientific literature (Table 2 ).

Identification, types of online tools used, category of participation, mechanisms of participation, characteristics of participation and intensity of participation were the dimensions to be evaluated by the experts in the first round of consultation.

Each of them comprised, in turn, a series of indicators derived from a review of the scientific literature on science communication via the Internet and on public participation in science from the perspective of RRI.

The identification criterion is a general dimension that allows the monitoring of the institutions, research projects and scientific areas [Kupper et al., 2015 ] which involve the public during the scientific process.

The indicators of types of online tool included in the first round of consultation had been shown in the literature to be effective channels for direct interaction between science and society. Thus, included in this dimension were the existence of a specific site or page for the dissemination of research projects [Neresini and Bucchi, 2011 ]; the creation of profiles on the three networks with most social impact: Facebook, Twitter and YouTube [Olvera-Lobo and López-Pérez, 2013a ; Olvera-Lobo and López-Pérez, 2013b ; Olvera-Lobo and López-Pérez, 2014 ; López-Pérez and Olvera-Lobo, 2015 ; López-Pérez and Olvera-Lobo, 2016b ; López-Pérez and Olvera-Lobo, 2016a ]; the use of blogs for science communication or public engagement in science [Olvera-Lobo and López-Pérez, 2013a ; Olvera-Lobo and López-Pérez, 2013b ; Olvera-Lobo and López-Pérez, 2014 ; López-Pérez and Olvera-Lobo, 2015 ; López-Pérez and Olvera-Lobo, 2016b ; López-Pérez and Olvera-Lobo, 2016a ]; and the use of applications for collecting public data or opinions.

Four categories of participation were identified, according to the type of citizen involvement in scientific research, and according to their level of interactivity. 1) Communication, understood as the dissemination of information on the results of science research or education through the development of didactic materials, the organisation of webinars or the organisation of traditional offline activities [Rowe and Frewer, 2005 ]; 2) consultation, in which questions are put to citizens in relation to topics of interest arising from one of the research stages [Rowe and Frewer, 2005 ; Bonney et al., 2009 ]; 3) participation, where citizens become involved in the research by collecting data, analysing results and even proposing topics and 4) co-creation, with citizens involved throughout the whole research process, from the decision on the topic addressed by the research project to the evaluation of the results [Bonney et al., 2009 ].

For the mechanisms of participation, a total of six indicators were included that were shown as effective for promoting participation in one of the stages of the scientific process [Rask et al., 2016 ]. These were: 1) votes; 2) games specifically designed to gather public opinions or evaluations on a specific scientific topic; 3) the use of apps to collect information or evaluations from the public, or designed for communicating research results; 4) educational materials designed to educate users on the scientific field that is the subject of the research project; 5) consultations for gathering opinions, for or against, at some stage of the research process and 6) debate forums for promoting the conversation with the public on different aspects related to the research project being developed.

As regards the participation characteristics criterion, the purpose of the indicators proposed in the first evaluation questionnaire for the experts was to identify the ease of access to information that favoured participation (content published in non-technical language [Hyam, 2016 ]; the publication of profiles on visible sites [Neresini and Bucchi, 2011 ]; complementary information published to understand the object of the study [Hyam, 2016 ], etc.); the directionality of the communication [Rask et al., 2016 ]; and the type of public participating in the actions [Hyam, 2016 ].

Finally, the participation intensity criterion included the number of followers on different social profiles, the number of comments or number of people involved in the different participation categories [López-Pérez and Olvera-Lobo, 2016b ; López-Pérez and Olvera-Lobo, 2016a ; López-Pérez and Olvera-Lobo, 2015 ; Grand et al., 2016 ; Olvera-Lobo and López-Pérez, 2013a ; Olvera-Lobo and López-Pérez, 2013b ; Olvera-Lobo and López-Pérez, 2014 ].

The response system for indicating the appropriateness of these indicators was based on the Likert scale, scoring the responses as 1 – low importance, 2 – moderate importance or 3 – high importance.
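As an illustration of how responses on this three-point scale can be summarised for a single indicator, the following sketch computes the average score and the share of experts who chose "high importance". The ratings in the example are hypothetical, and the function name and the structure of the returned summary are assumptions made for illustration; the paper itself reports only average scores.

```python
from collections import Counter
from statistics import mean

# The three response options described in the text
LIKERT_LABELS = {1: "low importance", 2: "moderate importance", 3: "high importance"}


def summarise_indicator(ratings):
    """Summarise one indicator's ratings: average, share of 3s and count per label."""
    invalid = [r for r in ratings if r not in LIKERT_LABELS]
    if invalid:
        raise ValueError(f"Ratings must be 1, 2 or 3; got {invalid}")
    counts = Counter(ratings)
    return {
        "mean": round(mean(ratings), 1),
        "share_high": counts[3] / len(ratings),
        "counts": {label: counts[score] for score, label in LIKERT_LABELS.items()},
    }


# Hypothetical ratings from a fourteen-expert panel
ratings = [3] * 12 + [2] * 2
summary = summarise_indicator(ratings)
print(summary["mean"])    # average score out of 3
print(summary["counts"])  # number of experts per response option
```

Reporting the share of top ratings alongside the average is one way of showing how close an indicator comes to unanimous agreement on high importance.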

The questionnaire was completed with three open-ended questions that were asked to give autonomy to the experts in order for them to express their judgements and opinions based on their experience and specialisation. This also allowed us to obtain qualitative results and respond to one of the main objectives of the study, which was to determine whether the Internet is an appropriate platform for promoting public engagement.

Thus, the questions put forward sought I) to evaluate the appropriateness of the object of the study (“Do you consider the Internet and online tools to be a good channel for promoting public participation in scientific and technological development?”) and II) to improve and expand on the criteria and indicators proposed by the coordinating group (“What criteria and/or indicators would you remove in order to improve a system for assessing public participation via online tools?”).

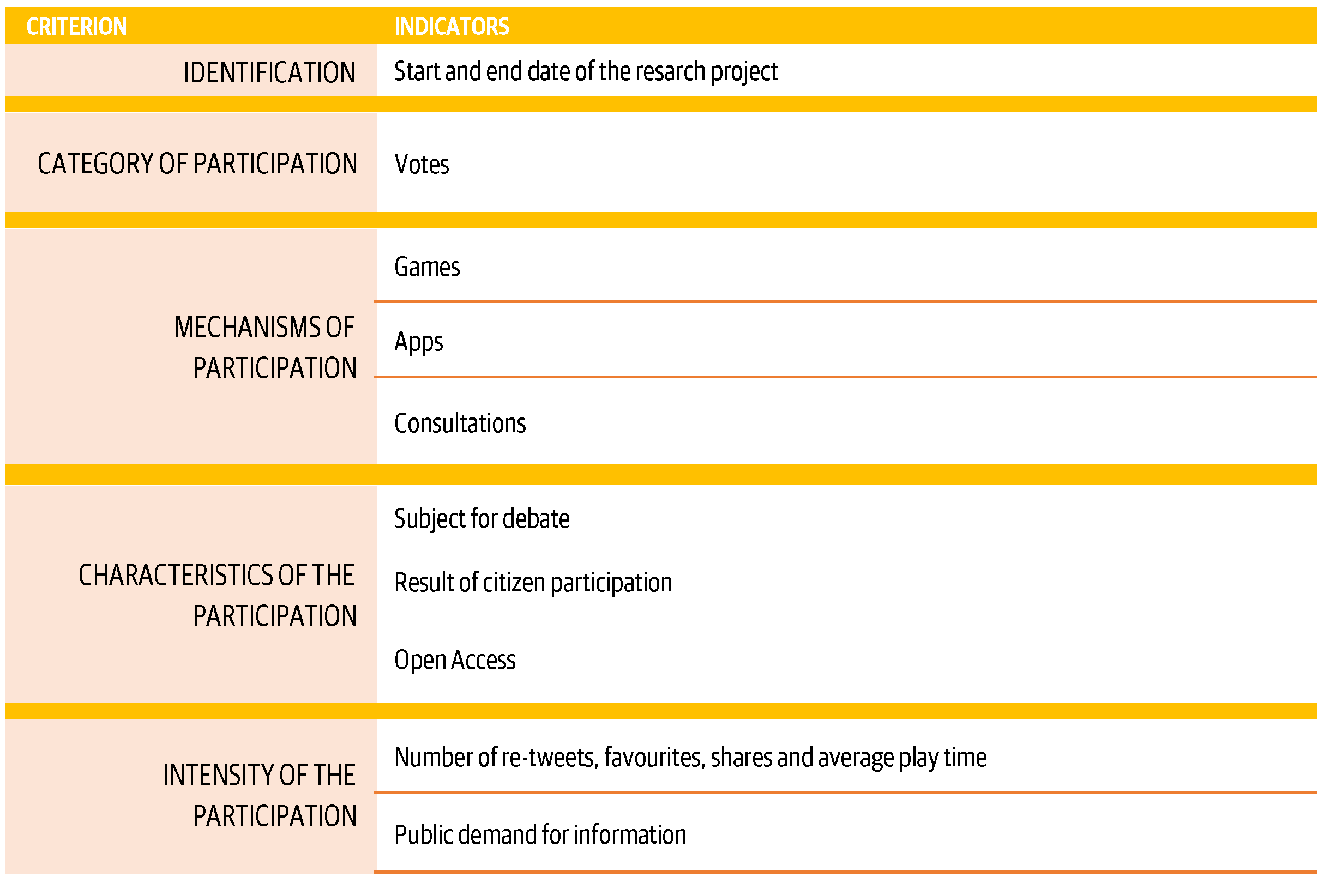

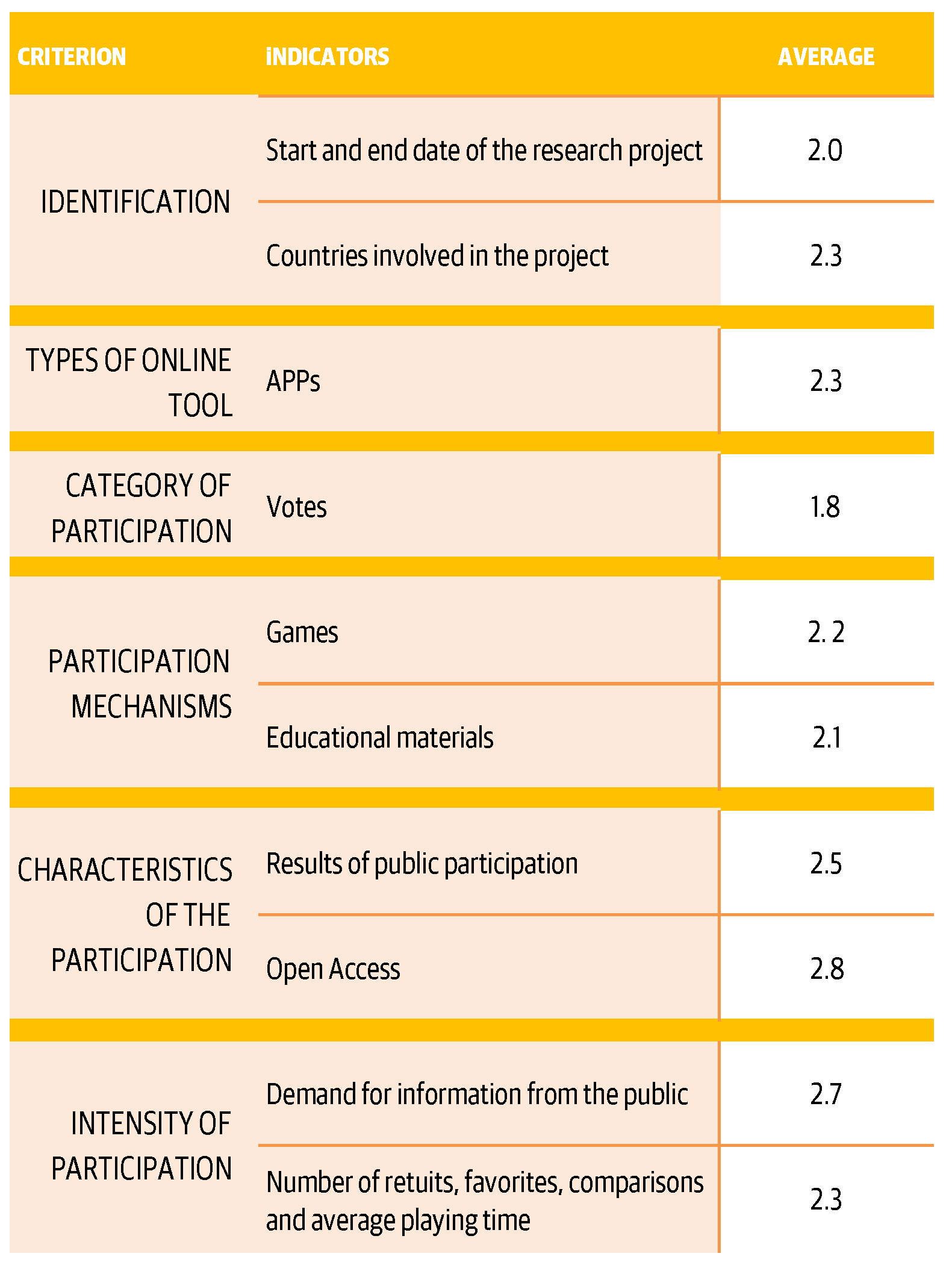

With the aim of including the contributions made by the experts via the open questions and subjecting them to the consensus of the group, the questionnaire sent in the second round included the newly proposed indicators together with those that had not reached an average score of 2 points out of 3 in the first round, which was the score that had been agreed through consensus for inclusion in the tool. The purpose of including the latter indicators was to subject them to a second reflection, as required by the Delphi method, before definitively eliminating them from the evaluation tool (Table 3 ).

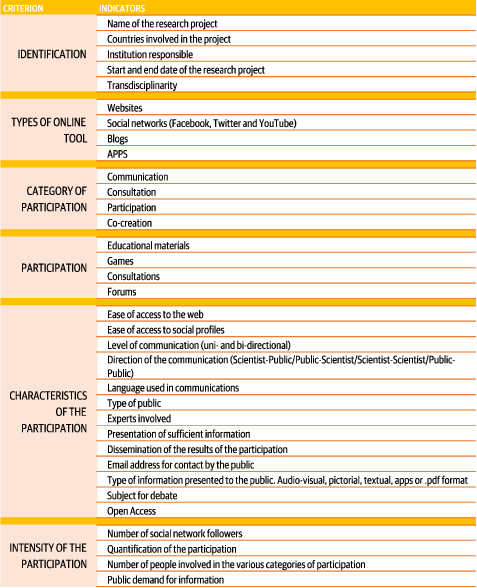

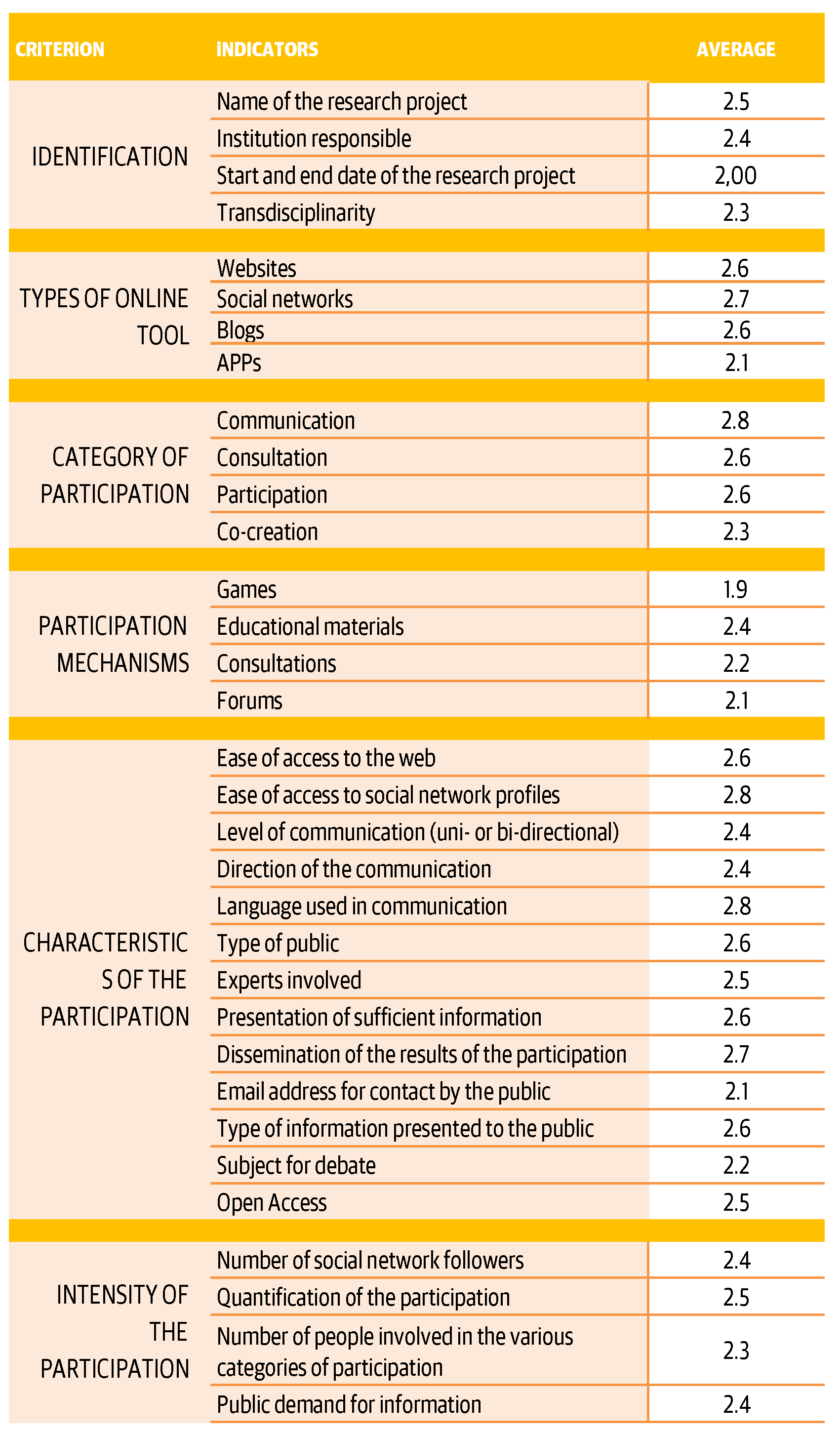

The third questionnaire was designed from the answers obtained, in which the indicators for which consensus had been reached in the first and second rounds were included. In this case the objective focused on checking stability in the answers between questionnaires 1, 2 and 3, and definitively integrating those indicators for which a consensus higher than an average score of 2 points had been reached (Table 4 ).

The system of communication with the experts was e-mail. They were all sent an online questionnaire created with Google Drive, an application that allows forms to be designed free of charge. The questionnaires were sent between December 2016 and February 2017, with a period of approximately one month between rounds.

2.4 Stage 3: conclusions and communication of the consensus

The process concluded when the saturation criterion established by consensus and the stability of the evaluations of the experts in relation to the indicators included in the questionnaire were reached.

3 Results

3.1 Round 1

3.1.1 Qualitative results

All the experts who participated in the consultation responded affirmatively to the question of whether the Internet was an effective stage for developing the public engagement in science model. Accessibility for a more diverse user community without geographic or time limits was the value most often cited in support of the validity of the digital channel. Nevertheless, the majority agreed on the need to combine online and offline strategies to achieve effective involvement in the scientific process. In this regard, the majority of the experts indicated that the exclusive use of the digital channel was detrimental to the quality of the interaction and made it harder to sustain dialogue.

The indicators proposed by the experts for integration into the public engagement evaluation tool were 1) dissemination of the contribution made by the public to the research process when the public is involved; 2) open access usage, that is, open publication of the results of research projects in which there has been co-creation or involvement on the part of citizens; 3) public demand for information, that is, the type of questions citizens ask researchers; and 4) number of retweets, favourites, shares and average playing time, which are values that can provide more information on the effectiveness and success of participation.

3.1.2 Quantitative results

Indicators with greatest level of consensus as being of high importance. As shown in Table 5 , in this first round the majority of the indicators reaching a higher level of consensus belong to the participation characteristics criterion. Ease of access to the web and the communication of scientific results are the closest to 100% consensus on high importance (both with an average of 2.9); these are followed by ease of access to social network profiles of the research project and the language used in communication (with 2.8), and the type of information presented to the public and the direction of the communication (both with an average of 2.7). To these should be added indicators such as websites (average 2.8) and social networks (average 2.7) (both included in the tool types criterion). Finally, attention may be drawn to the communication indicator (included within the category of participation criterion) with an average of 2.8.

Indicators valued by consensus as being of low importance. Those indicators valued by consensus as being of low importance, with an average score of under 2 out of 3, are included in the criteria of identification, mechanisms of participation, types of tools and category of participation. When it comes to identification of the research, the most poorly scored indicator was that relating to the date of the beginning and end of the research project. Games (with 1.9 points) and votes (with 1.7), belonging to the mechanisms of participation criterion, also obtained low scores. Finally, in tool types, the indicator considered by experts as being of least importance for the evaluation of public participation in science was apps (average 1.9), and in category of participation, consultations (1.9) (see Table 5 ).

3.2 Round 2

Amongst the results achieved in round 2, it is worth mentioning that stability is not maintained in the responses on those indicators valued as being of low importance in round 1. Just one of these, the votes indicator (with an average of 1.8 and within the mechanisms of participation criterion) continued to be evaluated by the experts with a score under 2 (see Table 6 ).

The indicators which the majority considered to be of high importance were Open Access (within the characteristics of participation criterion) and public demand for information (belonging to the intensity of participation category) (both with an average of 2.7). Thirteen experts provided responses in this second round.

3.3 Round 3

The results obtained, from the responses of the 10 experts who participated in the third round of consultations, showed consensus on medium-high importance with average scores over 2 for all indicators proposed — except in the games indicator, which presented an average of 1.8 and, therefore, would be eliminated from the definitive evaluation tool.

Stability was maintained with respect to rounds 1 and 2, and the indicators that continued to obtain the averages closest to 100% consensus were language used in communication (2.8) and ease of access to profiles (2.8) (integrated into the characteristics of participation criterion); communication (2.8) (corresponding to category of participation); and social networks (2.7) and websites (2.6) (included in the types of tools criterion) (see Table 7 ).

Following the three consultation rounds, the group of participating experts used their responses, proposals and scores to validate the criteria and indicators presented in Table 8 . These indicators make up the tool proposed here for evaluating public engagement in science via the digital environment.

The indicators included in the “characteristics of participation” criterion were those that maintained the greatest consensus from round 1 to round 3. The communication of scientific results to the public, the language used to disseminate them and the ease of access to the social profiles of the research project are the indicators that captured the greatest consensus. To these we can add communication as a category of participation, along with social networks and websites as types of tools for promoting public participation in science.

4 Conclusions

The scientifically validated evaluation tool presented in this paper will contribute to the study of the digital dimension of public engagement in science. Academic research on the impact of the Internet on science communication is still scarce, and the proposed method of analysis could help to reveal more about current Internet usage and its effectiveness for encouraging public engagement in the scientific process.

All the experts consulted in this study agree on the importance of evaluating the power of Web 2.0 for promoting the model of science with and for society. They highlight two main advantages of this channel: accessibility and its potential to reach diverse user communities without geographical or temporal limits. However, they also point to negative aspects, such as a loss of quality in terms of interaction and in the continuity of dialogue compared to the offline scenario. In this sense, the majority of the experts consulted agree that it is necessary to combine online and offline strategies in order to guarantee effective participation. This assessment encourages, as a future line of research, the design of a tool that permits the evaluation of both scenarios, their complementarity and the dimensions in which each is more effective for an equal interaction between science and society.

The analytical framework scientifically validated by the Delphi method comprises thirty-four indicators, structured into six criteria, which will allow the collection of data both on the usage and typology of Web 2.0 tools for promoting interaction with society, and on their effectiveness (number of people involved, type of communication, level of interaction). The analysis indicators put forward could, once contrasted with studies of an empirical nature, serve to evaluate and recognise digital public engagement fostered by scientific institutions, groups or research projects.

The methodological proposal validated and presented in this paper is a contribution situated halfway between two emerging fields in constant evolution: Web 2.0 tools and digital science communication itself. For this reason, and despite its great potential, it will need to be further enriched through continued development and application in different contexts and empirical studies.

References

-

Abraham, J. and Davis, C. (2005). ‘Risking public safety: Experts, the medical profession and ‘acceptable’ drug injury’. Health, Risk & Society 7 (4), pp. 379–395. https://doi.org/10.1080/13698570500390473 .

-

Barben, D., Fisher, E., Selin, C. and Guston, D. (2008). ‘Anticipatory Governance of Nanotechnology: Foresight, Engagement, and Integration’. In: Handbook of Science and Technology Studies . Ed. by E. J. Hackett, O. Amsterdamska, M. E. Lynch and J. Wajcman. 3rd ed. Cambridge, MA, U.S.A.: MIT Press, pp. 979–1000.

-

Bauer, M. and Jensen, P. (2011). ‘The mobilization of scientists for public engagement’. Public Understanding of Science 20 (1), pp. 3–11. https://doi.org/10.1177/0963662510394457 .

-

Blair, S. and Uhl, N. (1993). ‘Using the Delphi method to improve the curriculum’. The Canadian Journal of Higher Education 23 (3), pp. 107–128. URL: http://journals.sfu.ca/cjhe/index.php/cjhe/article/view/183175 .

-

Boccia Artieri, G. (2015). Gli effetti sociali del web: introduzione. Ed. by G. Boccia Artieri. Milano, Italy: Franco Angeli, p. 42.

-

Bonney, R., Ballard, H., Jordan, R., McCallie, E., Phillips, T., Shirk, J. and Wilderman, C. C. (2009). Public Participation in Scientific Research: Defining the Field and Assessing Its Potential for Informal Science Education . A CAISE Inquiry Group Report. Washington, D.C., U.S.A.: Center for Advancement of Informal Science Education (CAISE). URL: http://www.informalscience.org/public-participation-scientific-research-defining-field-and-assessing-its-potential-informal-science .

-

Brown, D. J. (2016). Access to Scientific Research. Challenges facing communications in STM. Berlin, Germany: De Gruyter Saur. https://doi.org/10.1515/9783110369991 .

-

Bubela, T., Nisbet, M. C., Borchelt, R., Brunger, F., Critchley, C., Einsiedel, E., Geller, G., Gupta, A., Hampel, J., Hyde-Lay, R., Jandciu, E. W., Jones, S. A., Kolopack, P., Lane, S., Lougheed, T., Nerlich, B., Ogbogu, U., O’Riordan, K., Ouellette, C., Spear, M., Strauss, S., Thavaratnam, T., Willemse, L. and Caulfield, T. (2009). ‘Science communication reconsidered’. Nature Biotechnology 27 (6), pp. 514–518. https://doi.org/10.1038/nbt0609-514 .

-

Bucchi, M. and Neresini, F. (1995). ‘Science and Public Participation’. In: Handbook of Science and Technology Studies . Ed. by S. Jasanoff, G. E. Markle, J. C. Peterson and T. Pinch. 2nd ed. Thousand Oaks, CA, U.S.A.: Sage, pp. 449–472. ISBN: 9780262035682. https://doi.org/10.4135/9781412990127 .

-

Cho, M. K. and Relman, D. A. (2010). ‘Synthetic "Life," Ethics, National Security, and Public Discourse’. Science 329 (5987), pp. 38–39. https://doi.org/10.1126/science.1193749 .

-

Clayton, M. J. (1997). ‘Delphi: a technique to harness expert opinion for critical decision-making tasks in education’. Educational Psychology 17 (4), pp. 373–386. https://doi.org/10.1080/0144341970170401 .

-

Cochran, S. W. (1983). ‘The Delphi Method: Formulating and Refining Group Judgements’. Journal of Human Sciences 2 (2), pp. 111–117.

-

Coleman, S. (2001). ‘The Transformation of Citizenship?’ In: New Media and Politics . Ed. by B. Axford and H. Richard. London, U.K.: SAGE, pp. 109–126. https://doi.org/10.4135/9781446218846.n5 .

-

Colson, V. (2011). ‘Science blogs as competing channels for the dissemination of science news’. Journalism 12 (7), pp. 889–902. https://doi.org/10.1177/1464884911412834 .

-

Cyphert, F. R. and Gant, W. (1971). ‘The Delphi Technique: A Case Study’. Phi Delta Kappan 52 (5), pp. 272–273.

-

Dailey, A. L. and Holmberg, J. C. (1990). ‘Delphi — A catalytic strategy for motivating curriculum revision by faculty’. Community Junior College Research Quarterly of Research and Practice 14 (2), pp. 129–136. https://doi.org/10.1080/0361697900140207 .

-

Doyle, C. S. (1993). ‘The Delphi Method as a Qualitative Assessment Tool for Development of Outcome Measures for Information Literacy’. School Library Media Annual 11, pp. 132–144. URL: https://eric.ed.gov/?id=EJ476212 .

-

European Commission (2015). Indicators for promoting and monitoring Responsible Research and Innovation . URL: http://ec.europa.eu/research/swafs/pdf/pub_rri/rri_indicators_final_version.pdf .

-

European Union (2014). Responsible Research and Innovation — Europe’s ability to respond to societal challenges . Ed. by European Union. URL: https://ec.europa.eu/research/swafs/pdf/pub_rri/KI0214595ENC.pdf .

-

FraunhoferISI and TechnopolisGroup (2012). Interim evaluation & assessment of future options for Science in Society Actions. Assessment of future options . URL: https://ec.europa.eu/research/swafs/pdf/pub_archive/phase02-122012_en.pdf .

-

Glerup, C. and Horst, M. (2014). ‘Mapping ‘social responsibility’ in science’. Journal of Responsible Innovation 1 (1), pp. 31–50. https://doi.org/10.1080/23299460.2014.882077 .

-

Grand, A., Holliman, R., Collins, T. and Adams, A. (2016). ‘“We muddle our way through”: shared and distributed expertise in digital engagement with research’. JCOM 15 (4), A05. URL: http://jcom.sissa.it/archive/15/04/JCOM_1504_2016_A05 .

-

Guston, D. H. (2014). ‘Understanding ‘anticipatory governance’’. Social Studies of Science 44 (2), pp. 218–242. https://doi.org/10.1177/0306312713508669 .

-

Guston, D. H. and Sarewitz, D. (2002). ‘Real-time technology assessment’. Technology in Society 24 (1–2), pp. 93–109. https://doi.org/10.1016/S0160-791X(01)00047-1 .

-

Hyam, P. (2016). Widening Public Involvement in Dialogue . URL: WideningPublicInvolvementinDialogue .

-

Irwin, A. (2006). ‘The Politics of Talk’. Social Studies of Science 36 (2), pp. 299–320. https://doi.org/10.1177/0306312706053350 .

-

Klüver, L., Hennen, L., Andersson, E., Mulder, H. A., Damianova, Z. and Kuhn, R. (2014). ‘Public Engagement in R&I processes. Promises and demands’. Engaging Society in Horizon 2020 (2). URL: http://engage2020.eu/media/Engage2020-Policy-Brief-Issue2_final.pdf .

-

Kouper, I. (2010). ‘Science blogs and public engagement with science: practices, challenges, and opportunities’. JCOM 09 (01), A02. URL: https://jcom.sissa.it/archive/09/01/Jcom0901%282010%29A02 .

-

Kupper, F., Rijnen, M., Vermeulen, S. and Broerse, J. (2015). Methodology for the collection and classification of RRI practices . URL: http://www.rri-tools.eu/documents/10184/107098/RRITools_D1.2Collection%26ClassificationofRRIPracticesMethodology.pdf/3af4e5e8-7a0d-4274-974c-b45fbeba3c6d .

-

López-Pérez, L. and Olvera-Lobo, M. D. (2015). ‘Comunicación de la ciencia 2.0 en España: el papel de los centros públicos de investigación y de medios digitales’. Revista Mediterránea de Comunicación 6 (2). https://doi.org/10.14198/medcom2015.6.2.08 .

-

— (2016a). ‘Comunicación pública de la ciencia a través de la web 2.0. El caso de los centros de investigación y universidades públicas de España’. El Profesional de la Información 25 (3), pp. 441–448. https://doi.org/10.3145/epi.2016.may.14 .

-

— (2016b). Social media as channels for the public communication of science. The case of Spanish research centers and public universities. Ed. by K. Knautz and K. S. Baran. Berlin, Germany: De Gruyter House.

-

Lovejoy, K., Waters, R. D. and Saxton, G. D. (2012). ‘Engaging stakeholders through Twitter: How nonprofit organizations are getting more out of 140 characters or less’. Public Relations Review 38 (2), pp. 313–318. https://doi.org/10.1016/j.pubrev.2012.01.005 .

-

Mahrt, M. and Puschmann, C. (2014). ‘Science blogging: an exploratory study of motives, styles, and audience reactions’. JCOM 13 (3), A05. URL: https://jcom.sissa.it/archive/13/03/JCOM_1303_2014_A05 .

-

Marschalek, I. (2017). Public engagement in Responsible Research and Innovation. A Critical Reflection from the Practitioner’s Point of View. Vienna, Austria: University of Vienna. URL: https://www.zsi.at/object/publication/4498/attach/Marschalek_Public_Engagement_in_RRI.pdf .

-

Murry, J. W. and Hammons, J. O. (1995). ‘Delphi: A Versatile Methodology for Conducting Qualitative Research’. The Review of Higher Education 18 (4), pp. 423–436. https://doi.org/10.1353/rhe.1995.0008 .

-

Neresini, F. and Bucchi, M. (2011). ‘Which indicators for the new public engagement activities? An exploratory study of European research institutions’. Public Understanding of Science 20 (1), pp. 64–79. https://doi.org/10.1177/0963662510388363 .

-

Olsson, T. (2016). ‘Social media and new forms for civic participation’. New Media & Society 18 (10), pp. 2242–2248. https://doi.org/10.1177/1461444816656338 .

-

Olvera-Lobo, M. D. and López-Pérez, L. (2013a). ‘La divulgación de la Ciencia española en la Web 2.0: el caso del Consejo Superior de Investigaciones Científicas en Andalucía y Cataluña’. Revista Mediterránea de Comunicación 4 (1), pp. 169–191. https://doi.org/10.14198/medcom2013.4.1.08 .

-

— (2013b). ‘The role of public universities and the primary digital national newspapers in the dissemination of Spanish science through the internet and web 2.0’. In: Proceedings of the First International Conference on Technological Ecosystem for Enhancing Multiculturality (TEEM ’13) . https://doi.org/10.1145/2536536.2536565 .

-

— (2014). ‘Science Communication 2.0’. Information Resources Management Journal 27 (3), pp. 42–58. https://doi.org/10.4018/irmj.2014070104 .

-

Osborne, J., Collins, S., Ratcliffe, M., Millar, R. and Duschl, R. (2003). ‘What “ideas-about-science” should be taught in school science? A Delphi study of the expert community’. Journal of Research in Science Teaching 40 (7), pp. 692–720. https://doi.org/10.1002/tea.10105 .

-

Ouariachi, T., Olvera-Lobo, M. D. and Gutiérrez-Pérez, J. (2017). ‘Analyzing Climate Change Communication Through Online Games’. Science Communication 39 (1), pp. 10–44. https://doi.org/10.1177/1075547016687998 .

-

Ouariachi, T., Gutiérrez Pérez, J. and Olvera-Lobo, M. D. (2017). ‘Criterios de evaluación de juegos en línea sobre cambio climático. Aplicación del método Delphi para su identificación’. Revista Mexicana de Investigación Educativa 22 (73), pp. 445–474. URL: http://digibug.ugr.es/bitstream/10481/46289/1/Ouariachi_EvaluacionJuegosCC.pdf .

-

Owen, R., Macnaghten, P. and Stilgoe, J. (2012). ‘Responsible research and innovation: From science in society to science for society, with society’. Science and Public Policy 39 (6), pp. 751–760. https://doi.org/10.1093/scipol/scs093 .

-

Pitrelli, N. (2017). ‘Big Data and digital methods in science communication research: opportunities, challenges and limits’. JCOM 16 (02), C01. URL: https://jcom.sissa.it/archive/16/02/JCOM_1602_2017_C01 .

-

Rarn, T., Mejlgaard, N. and Rask, M. (2014). Public Engagement Innovation for Horizon 2020. Inventory of PE mechanisms and initiatives . URL: http://www.vm.vu.lt/uploads/pdf/Public_Engagement_Innovations_H2020-2.pdf .

-

Rask, M., Mačiukaitė-Žvinienė, S., Tauginiené, L., Dikčius, V., Matschoss, K., Aarrevaara, T. and d’Andrea, L. (2016). Innovative Public Engagement. A conceptual model of public engagement in Dynamic and Responsible Governance of Research and Innovation European Union’s Seventh Framework. Programme for research, technological development and demonstration . URL: https://pe2020.eu/2016/05/26/innovative-public-engagement-a-conceptual-model-of-pe/ .

-

Rigutto, C. (2017). ‘The landscape of online visual communication of science’. JCOM 16 (02), C06. URL: https://jcom.sissa.it/archive/16/02/JCOM_1602_2017_C01/JCOM_1602_2017_C06 .

-

Rogers, R. (2015). ‘Digital Methods for Web Research’. In: Emerging Trends in the Social and Behavioral Sciences. Ed. by R. A. Scott and S. M. Kosslyn.

-

Rowe, G. and Frewer, L. J. (2005). ‘A Typology of Public Engagement Mechanisms’. Science, Technology & Human Values 30 (2), pp. 251–290. https://doi.org/10.1177/0162243904271724 .

-

Saffer, A. J., Sommerfeldt, E. J. and Taylor, M. (2013). ‘The effects of organizational Twitter interactivity on organization–public relationships’. Public Relations Review 39 (3), pp. 213–215. https://doi.org/10.1016/j.pubrev.2013.02.005 .

-

Scapolo, F. and Miles, I. (2006). ‘Eliciting experts’ knowledge: A comparison of two methods’. Technological Forecasting and Social Change 73 (6), pp. 679–704. https://doi.org/10.1016/j.techfore.2006.03.001 .

-

Seakins, A. and Dillon, J. (2013). ‘Exploring Research Themes in Public Engagement Within a Natural History Museum: A Modified Delphi Approach’. International Journal of Science Education 3 (1), pp. 52–76. https://doi.org/10.1080/21548455.2012.753168 .

-

Smith, K. S. and Simpson, R. D. (1995). ‘Validating teaching competencies for faculty members in higher education: A national study using the Delphi method’. Innovative Higher Education 19 (3), pp. 223–234. https://doi.org/10.1007/bf01191221 .

-

Stilgoe, J., Owen, R. and Macnaghten, P. (2013). ‘Developing a framework for responsible innovation’. Research Policy 42 (9), pp. 1568–1580. https://doi.org/10.1016/j.respol.2013.05.008 .

-

Su, L. Y.-F., Scheufele, D. A., Bell, L., Brossard, D. and Xenos, M. A. (2017). ‘Information-Sharing and Community-Building: Exploring the Use of Twitter in Science Public Relations’. Science Communication 39 (5), pp. 569–597. https://doi.org/10.1177/1075547017734226 .

-

Sutcliffe, H. (2011). A Report on Responsible Research and Innovation . Prepared for DG Research. European Commission. URL: http://ec.europa.eu/research/science-society/document_library/pdf_06/rri-report-hilary-sutcliffe_en.pdf .

-

Turoff, M., Hiltz, S. R., Li, Z., Wang, Y., Cho, H.-K. and Yao, X. (2006). ‘Online Collaborative Learning Enhancement through the Delphi Method’. Turkish Online Journal of Distance Education 7 (2). URL: https://web.njit.edu/~turoff/Papers/ozchi2004.htm .

-

Uhl, N. P. (1983). ‘Using the Delphi technique in institutional planning’. New Directions for Institutional Research 1983 (37), pp. 81–94. https://doi.org/10.1002/ir.37019833709 .

-

Von Schomberg, R. (2011). ‘Towards Responsible Research and Innovation in the Information and Communication Technologies and Security Technologies Fields’. EU Directorate General for Research and Innovation . https://doi.org/10.2139/ssrn.2436399 .

-

Waters, R. D., Burnett, E., Lamm, A. and Lucas, J. (2009). ‘Engaging stakeholders through social networking: How nonprofit organizations are using Facebook’. Public Relations Review 35 (2), pp. 102–106. https://doi.org/10.1016/j.pubrev.2009.01.006 .

-

Watts, D. J. (2007). ‘A twenty-first century science’. Nature 445 (7127), pp. 489–489. https://doi.org/10.1038/445489a .

-

Weigold, M. F. and Treise, D. (2004). ‘Attracting Teen Surfers to Science Web Sites’. Public Understanding of Science 13 (3), pp. 229–248. https://doi.org/10.1177/0963662504045504 .

-

Whitman, N. I. (1990). ‘The Delphi Technique as an Alternative for Committee Meetings’. Journal of Nursing Education 29 (8), pp. 377–379. URL: https://www.ncbi.nlm.nih.gov/pubmed/2199631 .

-

Yang, S.-U., Kang, M. and Johnson, P. (2010). ‘Effects of Narratives, Openness to Dialogic Communication, and Credibility on Engagement in Crisis Communication Through Organizational Blogs’. Communication Research 37 (4), pp. 473–497. https://doi.org/10.1177/0093650210362682 .

Authors

Lourdes López-Pérez holds a Ph.D. in Social Sciences and a degree in Communication Science. She also has a Master's degree in Science Information and Communication from the University of Granada and another in Marketing from ESIC. She is a member of the research group “Access and evaluation of scientific information” of the University of Granada and has worked as a Science Communicator at a science museum since 2006. She has a background as a teacher of communication and journalistic writing and extensive experience in organizing public engagement events and designing communication strategies for promoting science exhibitions. In addition, she has published more than twenty book chapters and articles about public engagement in science in national and international journals with a certified quality index (JCR, SJR, RESH). E-mail: lourdes.lpez@gmail.com .

María Dolores Olvera-Lobo holds a Ph.D. in Documentation Studies and is currently Full Professor in Information and Communication at the University of Granada, Spain. She is a member of the Scimago Group of the Spanish National Research Council (CSIC, Madrid) and director of the research group “Access and evaluation of scientific information”. She has published, both as author and co-author, books, essays and a considerable number of articles in national and international journals with a certified quality index (JCR, SJR, RESH). E-mail: molvera@ugr.es .