In February 2014, Bill Nye, former host of “Bill Nye the Science Guy,” debated Ken Ham, President and CEO of Answers in Genesis (a religious organization that objects to the theory of evolution and maintains that the earth is 6,000 years old). The debate, streamed online, attracted an audience of nearly 750,000 and subsequently received substantial media coverage [Patterson, 2014]. Although the debate was heavily publicized in both digital and traditional media, the question remains whether participating in such debates is truly effective at informing the public at large. Indeed, some commentators argued that by giving a well-known creationist a stage on which to espouse his views, Bill Nye actually hurt the cause of increasing scientific literacy in the general population [Chowdhury, 2014]. Others have maintained the opposite, advising that scientists and science educators should speak directly to lay audiences in order to combat misinformation and foster trust and respect between the scientific community and the citizenry [Bauer, 2009; Bauer, Allum and Miller, 2007; Simis et al., 2016].

At first glance, taking part in a debate is an excellent way to present scientific evidence. Indeed, organizations like the American Association for the Advancement of Science (AAAS) encourage scientists to be proactive in their approach and to cultivate relationships with non-scientists in order to promote scientific knowledge [AAAS, 2018]. The hope is that viewers who watch debates will modify their positions to align with the scientific consensus, as research indicates that individuals can be swayed if the consensus frame is stressed [Ding et al., 2011]. Although the debate format seems like a superb platform from which to reach a large swath of people, it has yet to be tested empirically whether debating with skeptics is a useful approach. The purpose of this study, then, was to examine whether scientists participating in an unrestricted debate can influence knowledge, certainty, and attitudes towards contentious, yet relatively settled, scientific issues. As scientists often argue with non-scientists in these open discussions, the study also sought to ascertain whether the title/position of the skeptic would affect the dependent measures.

1 Background

Across a myriad of issues, there is a rift between the level of acceptance of certain claims among the scientific community and among the public. Whereas most scientific assertions remain open to further scrutiny and a “consensus” among the vast majority of experts within a particular field is rare, several scientific claims are acknowledged almost unanimously. In terms of anthropogenic climate change, for example, 87% of scientists accept that “the earth is getting warmer mostly because of human activity,” but fewer than 50% of Americans accept this position [Funk and Kennedy, 2016b]. Similarly, 88% of scientists accept that it is “safe to eat genetically modified foods”; however, 39% of Americans feel that consuming GMOs is worse for one’s health [Funk and Kennedy, 2016a]. Finally, 98% of scientists accept that “humans and other living things have evolved over time,” but among the general American population, only 62% concur with the statement [Masci, 2017].

Often, the primary objective of scientists and public educators of science when entering public forums is to highlight the scientific consensus underlying specific theories and hypotheses, with the hope that stressing the consensus within the scientific community will affect how the citizenry views established issues and science in general [Ding et al., 2011; Lewandowsky, Gignac and Vaughan, 2013; McCright, Dunlap and Xiao, 2013]. Research indicates that exposing individuals to the scientific consensus is often the first step in changing their support for government intervention in the form of laws and related statutes [Ding et al., 2011; McCright, Dunlap and Xiao, 2013; Lewandowsky, Gignac and Vaughan, 2013]. With that said, a shift in policy preferences among the voting population does not necessarily mean that governments will implement policies that favor the scientific view [McCright and Dunlap, 2003]. The difference between the attitudes of the public and those of scientists is especially curious given that scientists, on average, are still considered reputable sources of information [Funk and Rainie, 2015; Rainie, 2017]. Here, scholars have found that although scientists are perceived as credible and may use that standing to increase scientific understanding, outside actors can create skepticism and doubt surrounding settled issues by politicizing neutral scientific findings into manufactured controversies.

1.1 Politics and science

The pursuit of knowledge and engagement in scientific endeavors do not exist in a political vacuum [Scheufele, 2014]. Rather, as scientific consensus builds around an issue, lawmakers may introduce policies that are designed to tackle or at least mitigate a specific problem, or political leaders may ignore the issue completely. Indeed, one need only point to the current climate change “debate” to see how a settled scientific topic can become a politically manufactured controversy within the public square [Boykoff and Yulsman, 2013; Metcalf and Weisbach, 2009; Nelkin, 1995; Nisbet and Fahy, 2015]. Oftentimes, when a scientific consensus (e.g., anthropogenic climate change) is reached that may negatively impact an entrenched industry (e.g., petroleum corporations), doubt and ambiguity are introduced in order to stall preemptive policy changes [McCright and Dunlap, 2003]. The causal link between tobacco smoking and cancer has often been cited by scholars as the prototypical example of a scientific consensus being questioned in the pursuit of profits. Although cigarette manufacturers were aware of the link between tobacco smoking and cancer diagnoses in the middle part of the 20th century, industry officials continually sought to sow doubt well into the 21st century about whether smoking tobacco was truly a key factor in the development of certain types of cancers [Drope and Chapman, 2001; Hong and Bero, 2002].

According to Nelkin [1995], controversies may also arise when the acceptance of a scientific consensus among the public leads to decisions that could cause organizations and businesses to lose not only money but political control as well. For example, many young earth creationists (i.e., individuals who believe that the earth is no more than 6,000 years old) fear that if more Americans accept the underlying principles of the theory of evolution, their clout in shaping public policy related to education will diminish [Larson, 2003]. As such, individuals and groups, including lobbying arms, think tanks, and pundits, strive to introduce skepticism surrounding even the basic tenets of evolution in an attempt to create disagreement, with the goal of stalling government intervention [Ceccarelli, 2011; Larson, 2003]. These actors run the gamut from religious figures to politicians. In fact, state legislators, often at the behest of religious institutions, have proposed bills that would force secondary-school teachers to introduce “creation science” as an alternative explanation for the world’s biodiversity, while simultaneously downplaying the widely accepted theory of evolution through natural selection [Berkman and Plutzer, 2009; Larson, 2003].

In terms of climate change, United States senators, representatives, governors, and a host of other lawmakers at both the local and national level have expressed skepticism about the underlying premise that human activity is the primary reason for a warming planet [Carvalho and Peterson, 2012; Giddens, 2011]. In February 2015, for example, Senator Jim Inhofe of Oklahoma brought a snowball into the United States Senate Chamber to demonstrate and emphasize his incredulity towards the science of climate change [Cama, 2015]. President Trump, too, has highlighted his distrust of the climate change consensus, tweeting in 2012 that “the concept of global warming was created by and for the Chinese…” [Wong, 2016]. To bolster skeptic claims, fringe scientists may also be cited by those wishing to sow doubt among the lay population [Boykoff and Yulsman, 2013].

As politically manufactured controversies develop and become self-sustaining through the actions of a multitude of organizations and alternative media platforms, topics that were once non-controversial become linked to ideological convictions and religious beliefs [Bohr, 2014; Drummond and Fischhoff, 2017; Hamilton, 2011; Hobson and Niemeyer, 2013; Nisbet and Markowitz, 2014]. Once these issues are politicized and tied to religious and political dogma, the ability of science educators to change attitudes, and perhaps behaviors, fades. In response, some scientists have entered the public sphere to refute misinformation and spin by relying on the gateway belief model to change attitudes, beliefs and behaviors [van der Linden et al., 2015].

1.2 Gateway belief model

In order to alter the misconceptions shrouding settled scientific issues, scientists and public educators have come to rely on the gateway belief model (GBM) as a standard by which to communicate with non-scientists. According to van der Linden et al. [2015], the GBM assumes that a change in the perceived level of scientific consensus surrounding an issue will affect the belief that the issue is indeed factual and/or occurring. This belief will subsequently influence the amount of support given to policies designed to combat and/or remedy the problem. By stressing the consensus position through different media, it is presumed that the public’s stance will eventually align with the scientific opinion. From this standpoint, non-scientists within a society are treated as both rational and open receivers of scientific data who will consequently modify their stance when presented with credible evidence [Sturgis and Allum, 2004; van der Linden et al., 2015].

Some previous scholarship has supported this underlying premise. For example, van der Linden et al. [2015] found that merely “increasing public perceptions of the scientific consensus causes a significant increase in the belief that climate change is (a) happening, (b) human-caused and a (c) worrisome problem” (p. 6). Similarly, but using visual exemplars, Dixon et al. [2015] discovered that communicating the consensus on vaccine safety can overcome initial confusion related to the non-existent vaccine-autism link. Using a national survey design, Ding et al. [2011], too, found evidence suggesting a positive relationship between “perceived scientific agreement” and factors associated with the acceptance that human activity is the main contributor to climate change. In a recent meta-analysis, Hornsey et al. [2016] established that underscoring the consensus narrative is a significant variable in convincing people that climate change is real.

While there is empirical data to support such a consensus-driven rhetorical strategy, the gateway belief model posits that individuals are knowledge-deficient and that scientists can remedy this deficiency by highlighting the scientific consensus via a top-down approach. The underlying premise that individuals are “empty vessels” to be filled with information has been challenged and critiqued by other studies, however. Contemporary scholarship has found that attitudes and beliefs towards some science topics are inherently intertwined with political ideology and other variables (e.g., religiosity) that, on preliminary observation, seemingly have nothing to do with science literacy and acceptance [Drummond and Fischhoff, 2017]. In particular, Kahan, Jenkins-Smith and Braman [2011] found that an individual’s cultural cognition will oftentimes trump scientific findings, regardless of the specific issue under investigation. Results demonstrated that participants engaged in a form of motivated reasoning to “recognize such information as sound in a selective pattern that reinforces their cultural predispositions” [Kahan, Jenkins-Smith and Braman, 2011, p. 169]. Similarly, Hart and Nisbet [2011] discovered that factual information about climate change did not alter the level of support for climate change mitigation statutes. Instead, group identity signals as well as political affiliation were shown to affect how climate change information was treated. Past research has also shown that stressing the consensus position can actually cause a backlash effect and strengthen fabricated beliefs [Dixon et al., 2015; Kahan, 2015]. Additional scholarship within communication and psychology supports the overall assertion that factual information will not necessarily lead to attitude and/or behavioral change due to the inherent clout of ideological beliefs and cognitive biases [Allum et al., 2008; Howe and Leiserowitz, 2013; Kahan et al., 2012; Myers et al., 2012; Sturgis and Allum, 2004].

Science educators who accept an invitation to debate, in an open and unrestricted environment, those who express the contrarian position on a scientific consensus are in a unique position to test whether the gateway belief model is applicable across multiple contexts. As stated previously, some research has found that individuals can be persuaded through the use of the consensus description in a non-professional setting, while other findings contradict this notion and suggest that ideology and religiosity are often the most dominant factors when individuals evaluate scientific information. As such, the following research questions are proposed:

- RQ1: Does being exposed to a debate focusing on the climate change consensus affect personal beliefs, scientist credibility, scientific certainty, personal certainty and the potential to support policy changes related to climate change?

- RQ2: Does being exposed to a debate focusing on the consensus of the theory of evolution affect personal beliefs, scientist credibility, scientific certainty, personal certainty and the potential to support policy changes related to teaching the theory of evolution?

- RQ3: Does being exposed to a debate focusing on the safety consensus of genetically modified organisms affect personal beliefs, scientist credibility, scientific certainty, personal certainty, and the potential to change behavior related to consuming genetically modified foods?

1.3 Scientists’ source credibility

When removed from the traditional mediated environment, scientists and science media are seen as credible when discussing science-specific matters [Brewer and Ley, 2013; Kotcher et al., 2017]. As stated by Fiske and Dupree [2014] and Pornpitakpan [2004], credibility is often conceptualized as the perceived expertise and trustworthiness of a message source. Perceived credibility often acts as a heuristic cue and can dictate how contradictory messages are received and scrutinized [Eagly and Chaiken, 1993]. Indeed, as decades of persuasion research imply, source credibility is an important variable to consider when addressing whether a persuasive message will be successful in changing attitudes, beliefs, and behavior [Hovland and Weiss, 1951; Eagly and Chaiken, 1993; Pornpitakpan, 2004]. In general, the more credible the source, the more likely the audience will at least consider, if not accept, the proposed claim. Within the climate change context, for example, Lombardi, Seyranian and Sinatra [2014] found that source credibility was a significant predictor of whether individuals thought that climate change was plausible. The more credible the source, the more likely individuals were to suppose that human-induced climate change was at least a possibility. Kotcher et al. [2017] found that not only does the public attribute a high level of credibility to scientists, but there is also little downside (in terms of negatively affecting their credibility) for scientists to engage in most forms of advocacy.

As debates often pit mainstream scientists against individuals who may or may not have formal scientific training, one might expect that scientists would have an edge in overall credibility when compared to their skeptic debating partner. However, some scholarship suggests that individuals may have trouble identifying the truth among statements made by opposing experts [McKnight and Coronel, 2017], thus negating scientists’ intrinsic credibility advantage in a debate. In conjunction with research underscoring the importance of individual-level traits (e.g., political party affiliation, ideology, religiosity, etc.), source credibility may serve as a weak cue when assessing claims centering on contentious scientific issues. Given these mixed findings, along with the unique structure of mainstream scientists debating figures both inside and outside of the scientific community, research questions are proposed rather than hypotheses.

- RQ1a: Does the type of skeptic debating the consensus of climate change affect personal beliefs, scientist credibility, scientific certainty, personal certainty and the potential to support policy changes related to climate change?

- RQ2a: Does the type of skeptic debating the consensus of the theory of evolution affect personal beliefs, scientist credibility, scientific certainty, personal certainty and the potential to support policy changes related to teaching the theory of evolution?

- RQ3a: Does the type of skeptic debating the safety consensus of genetically modified organisms affect personal beliefs, scientist credibility, scientific certainty, personal certainty, and the potential to change behavior related to consuming genetically modified foods?

2 Method & procedure

To answer the proposed research questions, a 3 × 4 pre/posttest cross-sectional experiment was undertaken using undergraduate students as participants. In all, 305 students from a large university located in the Mountain West completed the project in its entirety in exchange for course/extra credit. The students were recruited from different majors across the university via an online database in which students could sign up to participate in research projects directed by faculty members. Of those who finished the experiment, 208 (N = 208) passed the two manipulation check items. The two manipulation questions were nominal in their design and asked participants to indicate the issue covered as well as the title of the skeptic debater. With regard to the gender of the 208 participants included in the final analysis, 46% (n = 95) identified as “male,” while 54% (n = 113) identified as “female.” Nearly 81% (n = 168) of the participants considered themselves “white,” .5% (n = 1) identified as “black,” 11.1% (n = 23) as “Hispanic,” and 2.9% (n = 6) defined their ethnicity as “Asian.” The remainder (n = 10) described themselves as “Other.” The average age of the participants was 25 years old.

Finally, as research suggests political ideology and religiosity are important variables to address when examining persuasive effects associated with science communication, two measures were included to capture these factors. Political ideology was measured using a 7-point scale with “1” being defined as “Very Conservative” and “7” being expressed as “Very Liberal.” The mean political ideology score of the 208 participants was 3.56 with a standard deviation of 1.03. In terms of religiosity, the 7-point measure asked participants to rate themselves between “1,” defined as “Very Religious,” and “7,” described as “Not at all Religious.” The mean score was 3.41 with a standard deviation of 1.22.

2.1 Independent variables

The study varied the science issue independent variable (i.e., theory of evolution, climate change, and safety of genetically modified organisms/foods) and the skeptic debating figure (i.e., religious authority, politician, scientist, and organization president). Participants were introduced to the experiment via an in-class and email announcement. Students who volunteered to complete the project were asked to visit a specific computer lab on campus where they were formally presented with the task. The experiment was done in a computer lab in order to increase overall control.

Participants were first asked a series of pre-test questions related to personal belief, scientist credibility, and scientific and personal certainty of the consensus associated with the issues that acted as the independent variables. Participants were also asked to indicate their support for possible policy changes related to the scientific topics as well as the potential for behavior modification. A series of “dummy” items were included in the pre-test to conceal the main purpose of the experiment. After answering the questions, participants were asked to read a non-relevant newspaper article and then were randomly assigned to read one of the 12 manipulated debate segments.

The transcripts were obtained from actual debates produced for general public consumption (see appendix A for a screenshot). The GMO and evolution debate transcripts were borrowed from Intelligence Squared, an organization that hosts public conferences that are aired on mainstream media outlets. The debate involving climate change originally aired on CNN’s “11th Hour” program in December of 2013, and included climate change skeptic Marc Morano, founder of www.climatedepot.com, and Michael Brune, executive director of the Sierra Club. This particular transcript was chosen due to its length (the segment lasted 10 minutes) and the number of different arguments made by Morano on behalf of climate change skeptics.

Each of the conditions varied in the issue discussed (i.e., theory of evolution, climate change, GMO safety) and the title of the debater (i.e., politician, religious figure, scientist, and organization president) who voiced opposition towards the settled scientific issue. The content, however, was kept consistent. Past scholarship has found that participants best understand, and have greater recall, when the scientific consensus is presented in a clear and concise manner [van der Linden et al., 2015]. Thus, a summarizing sentence was inserted into each condition that supported a general consensus among scientists about the acceptance of the theory of evolution, the safety of GMOs, and the understanding that human activity is the chief cause of climate change. A photographic still was included across conditions in order to increase realism. After reading the debate transcript, participants were asked to complete a post-test, including the items from the pre-test randomly placed within the post-test questionnaire. Once finished, participants were thanked for their time and asked to exit the web browser.

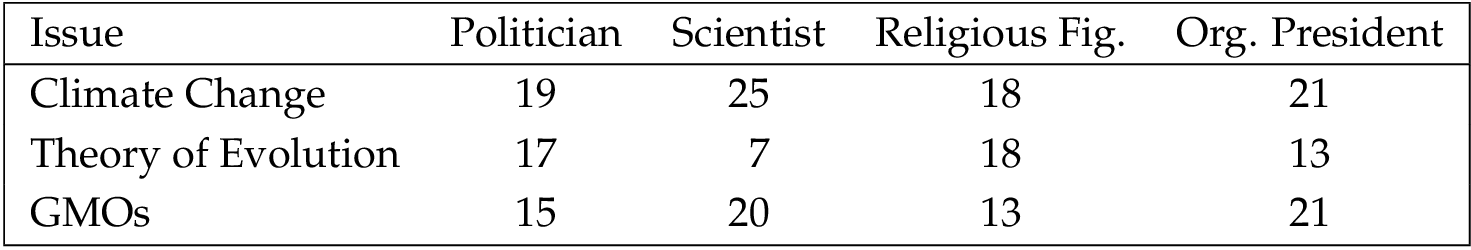

In total, 55 (n = 55) participants were assigned to the evolution debate, 69 (n = 69) were exposed to the GMO debate, and 83 (n = 83) were asked to read the debate centering on climate change (see Table 1 in appendix B for more detailed information related to assigned conditions). Following Cohen [1988], an a priori power analysis, conducted with G*Power 3.13, revealed that in order to detect a medium effect size between the pretest and posttest groups only (Cohen’s d = .5) with , a sample size of at least 34 participants was needed for each group, which was achieved.

For the anti-debater conditions, 19 (n = 19) participants were randomly assigned to the politician skeptic, 25 (n = 25) to the anti-consensus scientist, 18 (n = 18) to the religious authority, and 21 (n = 21) to the organization president for the climate change issue. In terms of the theory of evolution condition, 17 (n = 17) were assigned to the politician debater, 7 (n = 7) to the scientist, 18 (n = 18) to the religious figure, and 13 (n = 13) to the organization president. Finally, for the GMO issue, 15 (n = 15) participants were randomly assigned to the politician cell, 20 (n = 20) to the scientist, 13 (n = 13) to the religious debater, and 21 (n = 21) to the organization president. An a priori power analysis, conducted with G*Power 3.13, indicated that in order to detect a large effect size between the conditions (Cohen’s d = .4) with , a sample size of at least 18 participants was needed for each group. This was achieved in 8 of the 12 cells.
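The 3 (issue) × 4 (skeptic title) layout and the cell sizes listed above can be tabulated in a few lines. The following is only an illustrative sketch: the cell counts are copied from the text, while the names `ISSUES`, `SKEPTICS`, `CELL_N`, and `issue_totals` are hypothetical and not part of the original study materials.

```python
from itertools import product

# Factor levels as described in the text (labels are illustrative).
ISSUES = ("climate change", "evolution", "GMO safety")
SKEPTICS = ("politician", "scientist", "religious authority", "organization president")

# Cell sizes as reported in the text, keyed by (issue, skeptic title).
CELL_N = {
    ("climate change", "politician"): 19,
    ("climate change", "scientist"): 25,
    ("climate change", "religious authority"): 18,
    ("climate change", "organization president"): 21,
    ("evolution", "politician"): 17,
    ("evolution", "scientist"): 7,
    ("evolution", "religious authority"): 18,
    ("evolution", "organization president"): 13,
    ("GMO safety", "politician"): 15,
    ("GMO safety", "scientist"): 20,
    ("GMO safety", "religious authority"): 13,
    ("GMO safety", "organization president"): 21,
}


def issue_totals(cells):
    """Sum the cell sizes within each science issue."""
    totals = {issue: 0 for issue in ISSUES}
    for (issue, _skeptic), n in cells.items():
        totals[issue] += n
    return totals


if __name__ == "__main__":
    # Every combination of issue and skeptic title is one experimental cell.
    for issue, skeptic in product(ISSUES, SKEPTICS):
        print(f"{issue:15s} {skeptic:25s} n = {CELL_N[(issue, skeptic)]}")
    print(issue_totals(CELL_N))
```

Summing within each issue recovers the per-issue group sizes reported for the pretest/posttest comparisons (83, 55, and 69).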

2.2 Dependent measures

The primary purpose of the study was to learn if exposure to a debate deliberating a settled scientific issue would influence the beliefs of participants, the perception of scientist credibility, scientific and personal certainty, and support for policy adaptations/behavior change. As such, a series of items was included to capture these variables.

Personal belief. A total of four belief items were included in both the pre and post-test regardless of the debate segment that was randomly assigned. Each item was designed to measure if participants “believed” in the fundamental consensus of each of the three manufactured controversies included in the project (i.e., climate change, the theory of evolution, and GMO safety). These items were borrowed and adapted from past Pew Research surveys [Funk and Kennedy, 2016b; Funk and Kennedy, 2016a; Funk and Rainie, 2015]. For climate change, participants were asked if they believed “humans are the primary contributors to climate change” via a 1–7 Likert-based scale with “1” being defined as “strongly disagree” and “7” as “strongly agree.” The items on GMO safety and acceptance of the theory of evolution used the same 7-point Likert scale, with participants being asked if they believed “that the use of genetic modification technology to produce foods is generally safe” and if they agreed that “human beings evolved from earlier species of animals.”

Credibility of scientists. To measure the credibility of scientists in general, four Likert-based items with “1” defined as “strongly disagree” and “7” as “strongly agree” were included to determine if participants viewed scientists as “competent,” “honest,” “trustworthy,” and as having “expertise in their field of study.” This credibility measure was borrowed and adapted from several sources [e.g., McCroskey and Teven, 1999; Ohanian, 1990; Kertz and Ohanian, 1992]. The items were averaged to create a “scientist credibility” index. The index achieved acceptable reliability on the pretest (Cronbach’s α = .75) and good reliability on the posttest (Cronbach’s α = .86).
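To make the index construction concrete, the sketch below averages item scores per respondent and computes Cronbach's alpha with the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of summed scores). The study's raw responses are not reproduced here; the function names and any data passed to them are hypothetical.

```python
from statistics import mean, variance


def cronbach_alpha(items):
    """Cronbach's alpha for k items.

    `items` is a list of k lists; each inner list holds one item's scores
    for the same respondents, in the same order.
    """
    k = len(items)
    # Summed scale score for each respondent.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def credibility_index(items):
    """Average the items for each respondent to form a single index score."""
    return [mean(scores) for scores in zip(*items)]


if __name__ == "__main__":
    # Hypothetical 7-point responses from five respondents to four items
    # (competent, honest, trustworthy, expertise); not data from the study.
    responses = [
        [6, 5, 7, 4, 6],
        [5, 5, 6, 4, 6],
        [6, 4, 7, 3, 5],
        [7, 5, 6, 4, 6],
    ]
    print(round(cronbach_alpha(responses), 2))
    print(credibility_index(responses))
```

Sample variances (n − 1 in the denominator) are used throughout, which matches the convention of most statistical packages.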

Scientific and personal certainty. Previous research shows that the degree of perceived external certainty (i.e., individuals are/are not confident that scientists agree regarding a divisive issue) can influence how one views and considers manufactured controversies [Dixon and Clarke, 2013]; therefore, several items were used to measure both scientific and personal certainty regarding climate change, the theory of evolution, and the safety of GMOs. A 7-point Likert-based scale, with “1” being defined as “very uncertain” and “7” labeled “very certain,” was applied. The items asked participants to indicate how certain they were that there was a general consensus among scientists that “humans are the primary contributors to climate change,” “human beings evolved from earlier species of animals,” and “genetically modified foods are safe to eat.”

Behavioral and policy change. Several items inquired into whether participants would change their behavior and/or support policy changes associated with the issues covered in the project using a 7-point Likert-based scale with “1” defined as “strongly disagree” and “7” as “strongly agree.” For climate change, a question asked if “steps should be taken to combat climate change.” The theory of evolution item asked if participants agreed or disagreed with the following statement, “The theory of evolution should be taught in public schools.” Finally, participants were asked if they agreed or disagreed with the following sentence, “I would eat genetically modified food.”

3 Results

Research question 1 (RQ1) asked if exposure to a debate segment focusing on the consensus of climate change among scientists would affect beliefs, scientist credibility, scientific and personal certainty, and policy support related to climate change. A paired sample t-test was conducted testing the mean differences between the pretest and posttest scores along the five dependent measures. No significant differences (p > .05) were found.
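For readers less familiar with the paired sample t-test, the statistic is the mean of the within-participant differences divided by its standard error, with n − 1 degrees of freedom. The sketch below assumes differences are taken as pretest minus posttest, so that a higher posttest score yields a negative t; the function name and the data are hypothetical, and obtaining a p value would additionally require a t distribution (for example from a statistics package).

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """Return (t, df) for a paired sample t-test on matched pre/post scores."""
    # Assumed sign convention: pretest minus posttest.
    diffs = [a - b for a, b in zip(pre, post)]
    n = len(diffs)
    standard_error = stdev(diffs) / sqrt(n)
    return mean(diffs) / standard_error, n - 1


if __name__ == "__main__":
    # Hypothetical 7-point scores for four participants; not data from the study.
    pre_scores = [4, 5, 3, 6]
    post_scores = [5, 6, 5, 6]
    t_value, df = paired_t(pre_scores, post_scores)
    print(f"t({df}) = {t_value:.2f}")
```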

Research question 1a (RQ1a) examined if the title of the skeptic debater would affect beliefs, scientist credibility, scientific and personal certainty, and policy support associated with the climate change hypothesis. A multivariate analysis of covariance (MANCOVA), with the anti-consensus figure (i.e., religious official, politician, scientist, and organization president) as the independent variable, political ideology and religiosity as the covariates, and belief, scientist credibility, scientific and personal certainty, and policy support as the dependent variables, was applied to answer research question 1a. No significant differences were found (p > .05) between the levels of the independent variable.

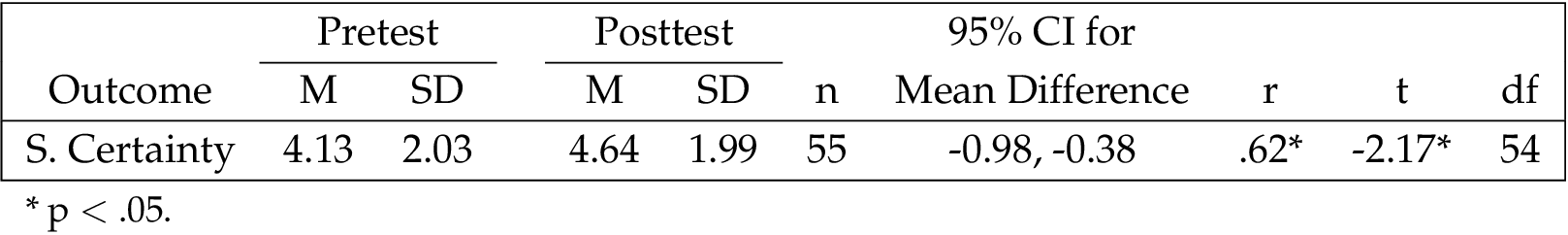

Whereas research question 1 focused on climate change, research question 2 (RQ2) centered on the theory of evolution, and asked whether exposure to a debate segment would influence participant belief about the theory, scientist credibility, issue certainty (scientists’ and their own), and support related to the teaching of evolution in public schools. In order to answer the question, a paired sample t-test was conducted testing mean differences between the pretest and posttest dependent measures. Significant mean differences (p < .05) were found along the “scientific certainty” dependent measure only, with participants (n = 55) rating scientific certainty higher after exposure to the debate (pretest M = 4.13, SD = 2.03; posttest M = 4.64, SD = 1.99), t(54) = -2.17, with a Cohen’s d of .26, suggesting a small effect size (see Table 3 under appendix B for more detailed information).

An additional question (RQ2a) was put forward to determine if the skeptic debater’s title/position (i.e., religious authority, scientist, leader of an organization, and politician) would affect the dependent variables. Once again, a multivariate analysis of covariance (MANCOVA), with the anti-consensus figure as the independent variable, political ideology and religiosity as covariates, and beliefs, scientist credibility, scientific and personal certainty, and policy support related to the theory of evolution as the dependent variables, was used to answer research question 2a. No significant differences (p > .05) were found.

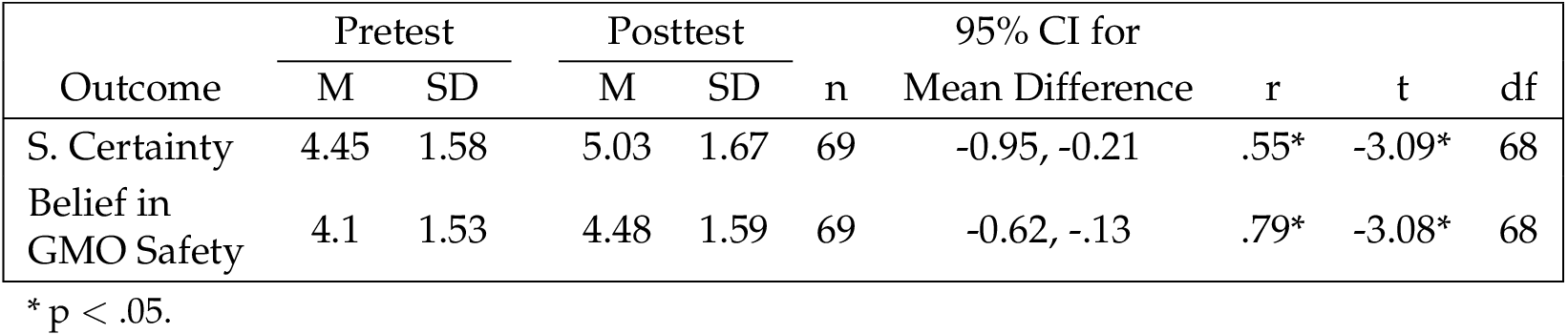

The final research question (RQ3) was similar to the previous two inquiries; however, it asked whether exposure to the debate centering on GMO safety would influence the dependent variables. As above, a paired sample t-test was conducted to establish if mean differences would be found. Significant mean differences were discovered along belief in GMO safety and certainty about scientific acceptance regarding the safety of genetically modified foods. Along the personal belief in GMO safety, significant differences were found (p < .05) among the participants (n = 69) between the pretest (M = 4.1, SD = 1.53) and posttest (M = 4.48, SD = 1.59), t(68) = -3.1, with a Cohen’s d of .24, representing a small effect size. In terms of the scientific issue certainty measure, participants’ (n = 69) average scores were significantly higher (p < .05) after exposure to the debate (M = 5.03, SD = 1.69) than at pretest (M = 4.45, SD = 1.58), t(68) = -3.0, with a Cohen’s d of .35, signifying a small-to-medium effect size (see Table 2 under appendix B for more detailed information).
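The effect sizes above can be approximated from the reported means and standard deviations. The source does not state which formula was used; the sketch below assumes Cohen's d was standardized by the root mean square of the pretest and posttest standard deviations, and the function name `cohens_d_av` is hypothetical.

```python
from math import sqrt


def cohens_d_av(m_pre, sd_pre, m_post, sd_post):
    """Cohen's d using the root mean square of the two SDs as the standardizer.

    This is one common convention for pre/post designs; it is an assumption
    here, not a formula confirmed by the source.
    """
    pooled_sd = sqrt((sd_pre ** 2 + sd_post ** 2) / 2)
    return (m_post - m_pre) / pooled_sd


if __name__ == "__main__":
    # Means and SDs as reported in the text for the GMO debate (n = 69).
    print(round(cohens_d_av(4.1, 1.53, 4.48, 1.59), 2))   # personal belief -> 0.24
    print(round(cohens_d_av(4.45, 1.58, 5.03, 1.69), 2))  # scientific certainty -> 0.35
```

Under the same assumption, the evolution scientific-certainty means (4.13 and 4.64, with SDs of 2.03 and 1.99) give roughly .25, slightly below the reported .26, which may reflect rounding or a different standardizer.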

Similar to research questions 1a and 2a, a multivariate analysis of covariance (MANCOVA), with the anti-consensus debate figure (i.e., religious figure, politician, scientist, and organization president) as the independent variable, GMO safety beliefs, scientist credibility, scientific and personal certainty, and behavior change related to the safety of GMOs as the dependent variables, and political ideology and religiosity as the covariates, was used to answer research question 3a. No significant differences were found (p > .05) across the measures.

4 Discussion

The purpose of this study was twofold. First, the experiment was designed to discover how the mere exposure to a debate centering on a controversial, albeit reasonably settled, scientific issue impacts personal belief towards the issue, scientist credibility in general, certainty (personal and scientific) as well as the possibility for behavior change and/or policy support. As politically manufactured controversies are often sustained by a multitude of actors representing various interest groups, the second objective of the experiment was to determine if the title/position of the skeptic debater would affect these same dependent measures.

In terms of establishing effects related to the exposure of the stimulus, differences were found for the scientific topics of genetically modified organisms (GMOs) and the theory of evolution. More specifically, participants increased their perception of the scientific certainty of the safety of genetically modified foods as well as their own personal belief in the safety of GMOs. In addition, participants, after exposure to the evolution debate segment, were more certain of the scientific consensus that “humans evolved from earlier species of animals.” In contrast, the title/position of the skeptic did not significantly influence the dependent variables, regardless of the issue being considered.

The results are in line with previous findings showing that it is becoming increasingly difficult to persuade individuals when a scientific subject becomes politically and/or religiously polarized, even when scientists highlight the consensus position [Simis et al., 2016 ]. Whereas the theory of evolution is inherently tied to religious doctrine and the level of acceptance of climate change is often contingent on political ideology, the safety of GMOs has yet to become strongly linked to any particular political dogma or religious framework. On its merits, GMO safety remains a scientific question rather than a political and/or religious matter. This conclusion is strengthened by the fact that scientific certainty regarding the theory of evolution increased, while personal belief and the potential for policy support (i.e., agreeing that evolution should be taught in public schools) did not change. Participants may have understood that the theory of evolution is strongly accepted within the scientific community, but that fact did not change their own personal opinions.

Further, the participants recruited for this experiment were rather unique in that they leaned slightly conservative in their political outlook and, on average, were more religious than the midpoint on the religiosity scale would indicate. Being more conservative and religious may have served as a cognitive bulwark when participants were asked to read the debate segments concentrating on climate change and the theory of evolution, respectively. Instead of processing the information presented rationally and objectively, the participants may have analyzed the debate segment through an ideological lens. This would not be surprising, as some research supports the notion that political conservatives tend to be skeptical of the climate change consensus, while religious individuals are more likely to object to the precepts of the theory of evolution through natural selection [Larson, 2003 ; McCright and Dunlap, 2003 ]. As the safety of GMOs is not necessarily interwoven with a political position or a religious creed, it may have been easier for individuals not only to accept the scientific consensus, but also to change their own personal beliefs to align with the scientific position.

At first glance, this resistance to persuasion on polarized issues may seem problematic; however, the propensity for individuals to remain steadfast in their attitudes and beliefs suggests that destructive unanimity among elites may be tempered by the citizenry at large. For example, a consensus-driven argument to support and engage in xenophobia, violence, or just indifference may be negated by individuals willing to remain committed to their underlying belief structure, regardless of the pressure from experts and leaders. In this respect, the lack of effects may be a positive, especially when considering how consensus can lead a populace to engage in disparaging behaviors and/or hold dehumanizing attitudes towards marginalized groups and people [Gilmore, Meeks and Domke, 2013 ].

As a strategy, then, participating in a shared debate and stressing the consensus frame may not be an effective way to truly change minds if the debate itself emphasizes a manufactured controversy. Instead, science educators may want to employ other tactics (e.g., creating civic workshops) when addressing topics linked to robust convictions and values.

Past research has found that by stressing the consensus position among scientists, attitude change that aligns with the scientific conclusion may occur via the gateway belief model [Ding et al., 2011 ; van der Linden et al., 2015 ]. The results from the present study both support and contradict this underlying premise. For example, participants were more aware of the scientific consensus on the theory of evolution; however, their attitudes did not change. In contrast, scientific certainty and personal beliefs about the safety of GMOs both increased after exposure to the debate segment. Once again, the data suggest that whether awareness of the consensus subsequently modifies personal beliefs depends primarily on the issue being discussed. Striving to increase the perception of the scientific consensus within the general population may still be an effective way to inform and persuade; however, it may not be a panacea for the problems inherent in changing attitudes, beliefs, and behaviors associated with contentious issues.

In terms of the lack of effects for the skeptic's position/title, it may be that because climate change and the theory of evolution are so closely associated with ideology and religiosity, respectively, the position/title of the debater was deemed irrelevant by the participants. What may have mattered most was not so much the opponent of the consensus position, but the topic under discussion. This is good news for science educators since the debate issue itself may be more vital than the designation of the individual advocating for the skeptic viewpoint. Even the fictional anti-consensus scientist did not seem to have an influence on the dependent measures. From these findings, scientists should be willing to debate both scientists and non-scientists in the public arena without necessarily having to worry about their opponent’s credentials. With this said, a few key caveats must be addressed. In order to reach an acceptable power level, the effect size was set at .4 rather than the traditional .25 suggested by Cohen [ 1988 ]. The analysis was therefore powered to detect only large effects, leaving open the possibility that the skeptic's title/position had a smaller, undetected impact on how participants perceived the issue as a whole. Further, four of the 12 cells did not achieve the necessary number of participants. This is problematic, but controlling for political ideology and religiosity does help to strengthen the finding that the title/position of the counter-debater had little influence on participant views.

While some scholars have encouraged scientists to remove themselves from public policy discussions in order for the practice of science to remain politically neutral and unbiased, research by Kotcher et al. [ 2017 ] has shown that scientific credibility does not erode when scientists become advocates. Coupled with the findings from this study, this suggests that science educators may be able to take more proactive steps across unique formats, such as debates, to increase scientific literacy in the lay population. Indeed, the average score on the scientist credibility index remained consistent after exposure to the stimuli across all conditions (pretest M = 5.15, posttest M = 5.16). From this perspective, scientists should not shy away from the limelight, and should continue to attempt to increase scientific knowledge and persuade individuals to accept the scientific consensus regarding both provocative and non-controversial topics.

4.1 Limitations and future research

First, participants were asked to read a debate segment rather than read a debate in its entirety. Reading the entire debate transcript may affect the participants differently. In a similar vein, watching a debate segment or viewing the entire debate, too, may be a more realistic way in which to measure effects. As this study was intended to be a “first step” in understanding the effects of scientific debates, future research should continue to move forward by subjecting participants to video debate segments.

Further, the dependent measures, while employed in previous communication research, are somewhat limited in their scope. For example, the personal beliefs of participants were measured using one item each. This is not to say that a single question cannot accurately capture an individual’s beliefs about a particular statement; rather, it may only assess one component of their overall feelings towards an issue. Future research should include more robust measures with which to assess participant attitudes, values, and beliefs.

Using students as participants is often problematic given their unique demographic makeup. Contemporary research indicates that student samples are biased because undergraduates are rather homogeneous across several key variables, including socio-economic status, race, ethnicity, and age [Hanel and Vione, 2016 ]. To remedy this, future science communication scholarship (including investigations into science persuasion) needs to sample a broader population that is more representative of the public.

In sum, the results show that science educators can sway individuals by focusing on the consensus position in a debate format; however, the amount of influence may be issue-dependent. Although engaging in debates may have merit, the finite time of scientists and science educators may be better used elsewhere if the debate highlights issues that have become heavily polarized and/or incorporated into a religious context.

A Screenshot of the stimulus

B Assigned conditions and statistical analyses

References

-

Allum, N., Sturgis, P., Tabourazi, D. and Brunton-Smith, I. (2008). ‘Science knowledge and attitudes across cultures: a meta-analysis’. Public Understanding of Science 17 (1), pp. 35–54. https://doi.org/10.1177/0963662506070159 .

-

American Association for the Advancement of Science (2018). Why public engagement matters . URL: https://www.aaas.org/pes/what-public-engagement .

-

Bauer, M. W. (2009). ‘The Evolution of Public Understanding of Science — Discourse and Comparative Evidence’. Science, Technology and Society 14 (2), p. 221. https://doi.org/10.1177/097172180901400202 .

-

Bauer, M. W., Allum, N. and Miller, S. (2007). ‘What can we learn from 25 years of PUS survey research? Liberating and expanding the agenda’. Public Understanding of Science 16 (1), pp. 79–95. https://doi.org/10.1177/0963662506071287 .

-

Berkman, M. B. and Plutzer, E. (2009). ‘Scientific expertise and the culture war: public opinion and the teaching of evolution in the American states’. Perspectives on Politics 7 (03), pp. 485–499. https://doi.org/10.1017/s153759270999082x .

-

Bohr, J. (2014). ‘Public views on the dangers and importance of climate change: predicting climate change beliefs in the United States through income moderated by party identification’. Climatic Change 126 (1-2), pp. 217–227. https://doi.org/10.1007/s10584-014-1198-9 .

-

Boykoff, M. T. and Yulsman, T. (2013). ‘Political economy, media and climate change: sinews of modern life’. Wiley Interdisciplinary Reviews: Climate Change 4 (5), pp. 359–371. https://doi.org/10.1002/wcc.233 .

-

Brewer, P. R. and Ley, B. L. (2013). ‘Whose Science Do You Believe? Explaining Trust in Sources of Scientific Information About the Environment’. Science Communication 35 (1), pp. 115–137. https://doi.org/10.1177/1075547012441691 .

-

Cama, T. (2015). ‘Inhofe hurls snowball on Senate floor’. The Hill . URL: http://thehill.com/policy/energy-environment/234026-sen-inhofe-throws-snowball-to-disprove-climate-change .

-

Carvalho, A. and Peterson, T. A. (2012). Climate change politics: communication and public engagement. Amherst, NY, U.S.A.: Cambria Press.

-

Ceccarelli, L. (2011). ‘Manufactured scientific controversy: science, rhetoric and public debate’. Rhetoric & Public Affairs 14 (2), pp. 195–228. https://doi.org/10.1353/rap.2010.0222 .

-

Chowdhury, S. (5th February 2014). ‘Bill Nye versus Ken Ham: who won?’ Christian Science Monitor . URL: https://www.csmonitor.com/Science/2014/0205/Bill-Nye-versus-Ken-Ham-Who-won .

-

Cohen, J. (1988). Statistical power analysis for the behavioral sciences. 2nd ed. Hillsdale, NJ, U.S.A.: Erlbaum.

-

Ding, D., Maibach, E. W., Zhao, X., Roser-Renouf, C. and Leiserowitz, A. (2011). ‘Support for climate policy and societal action are linked to perceptions about scientific agreement’. Nature Climate Change 1 (9), pp. 462–466. https://doi.org/10.1038/nclimate1295 .

-

Dixon, G. N. and Clarke, C. E. (2013). ‘Heightening Uncertainty Around Certain Science: Media coverage, false balance, and the autism vaccine controversy’. Science Communication 35 (3), pp. 358–382. https://doi.org/10.1177/1075547012458290 .

-

Dixon, G. N., McKeever, B. W., Holton, A. E., Clarke, C. and Eosco, G. (2015). ‘The power of a picture: overcoming scientific misinformation by communicating weight-of-evidence information with visual exemplars’. Journal of Communication 65 (4), pp. 639–659. https://doi.org/10.1111/jcom.12159 .

-

Drope, J. and Chapman, S. (2001). ‘Tobacco industry efforts at discrediting scientific knowledge of environmental tobacco smoke: a review of internal industry documents’. Journal of Epidemiology & Community Health 55 (8), pp. 588–594. https://doi.org/10.1136/jech.55.8.588 .

-

Drummond, C. and Fischhoff, B. (2017). ‘Individuals with greater science literacy and education have more polarized beliefs on controversial science topics’. Proceedings of the National Academy of Sciences 114 (36), pp. 9587–9592. https://doi.org/10.1073/pnas.1704882114 .

-

Eagly, A. H. and Chaiken, S. (1993). The Psychology of Attitudes. Orlando, FL, U.S.A.: Harcourt Brace Jovanovich College Publishers.

-

Fiske, S. T. and Dupree, C. (2014). ‘Gaining trust as well as respect in communicating to motivated audiences about science topics’. Proceedings of the National Academy of Sciences 111 (Supplement 4), pp. 13593–13597. https://doi.org/10.1073/pnas.1317505111 .

-

Funk, C. and Kennedy, B. (2016a). ‘Public opinion about genetically modified foods and trust in scientists connected with these foods’. Pew Research Center: Internet & Technology . URL: http://www.pewinternet.org/2016/12/01/public-opinion-about-genetically-modified-foods-and-trust-in-scientists-connected-with-these-foods/ .

-

— (2016b). ‘Public views on climate change and climate scientists’. Pew Research Center: Internet & Technology . URL: http://www.pewinternet.org/public-views-on-climate-change-and-climate-scientists .

-

Funk, C. and Rainie, L. (2015). ‘Public and scientists’ views on science and society’. Pew Research Center: Internet & Technology . URL: http://www.pewinternet.org/public-and-scientists-views-on-science-and-society/ .

-

Giddens, A. (2011). The politics of climate change. 2nd ed. Cambridge, U.K.: Policy Press.

-

Gilmore, J., Meeks, L. and Domke, D. (2013). ‘Why do (we think) they hate us: anti-americanism, patriotic messages and attributions of blame’. International Journal of Communication 7, pp. 701–721. URL: http://ijoc.org/index.php/ijoc/article/view/1683 .

-

Hamilton, L. C. (2011). ‘Education, politics and opinions about climate change evidence for interaction effects’. Climatic Change 104 (2), pp. 231–242. https://doi.org/10.1007/s10584-010-9957-8 .

-

Hanel, P. H. P. and Vione, K. C. (2016). ‘Do student samples provide an accurate estimate of the general public?’ PLOS ONE 11 (12), e0168354. https://doi.org/10.1371/journal.pone.0168354 .

-

Hart, P. S. and Nisbet, E. C. (2011). ‘Boomerang effects in science communication: how motivated reasoning and identity cues amplify opinion polarization about climate mitigation policies’. Communication Research 39 (6), pp. 701–723. https://doi.org/10.1177/0093650211416646 .

-

Hobson, K. and Niemeyer, S. (2013). ‘“What sceptics believe”: the effects of information and deliberation on climate change scepticism’. Public Understanding of Science 22 (4), pp. 396–412. https://doi.org/10.1177/0963662511430459 .

-

Hong, M.-K. and Bero, L. A. (2002). ‘How the tobacco industry responded to an influential study of the health effects of secondhand smoke’. BMJ 325 (7377), pp. 1413–1416. https://doi.org/10.1136/bmj.325.7377.1413 .

-

Hornsey, M. J., Harris, E. A., Bain, P. G. and Fielding, K. S. (2016). ‘Meta-analyses of the determinants and outcomes of belief in climate change’. Nature Climate Change 6 (6), pp. 622–626. https://doi.org/10.1038/nclimate2943 .

-

Hovland, C. I. and Weiss, W. (1951). ‘The influence of source credibility on communication effectiveness’. Public Opinion Quarterly 15 (4), pp. 635–650. https://doi.org/10.1086/266350 .

-

Howe, P. D. and Leiserowitz, A. (2013). ‘Who remembers a hot summer or a cold winter? The asymmetric effect of beliefs about global warming on perceptions of local climate conditions in the U.S.’ Global Environmental Change 23 (6), pp. 1488–1500. https://doi.org/10.1016/j.gloenvcha.2013.09.014 .

-

Kahan, D. M. (2015). ‘Climate-Science Communication and the Measurement Problem’. Advances in Political Psychology 36, pp. 1–43. https://doi.org/10.1111/pops.12244 .

-

Kahan, D. M., Jenkins-Smith, H. and Braman, D. (2011). ‘Cultural cognition of scientific consensus’. Journal of Risk Research 14 (2), pp. 147–174. https://doi.org/10.1080/13669877.2010.511246 .

-

Kahan, D. M., Peters, E., Wittlin, M., Slovic, P., Ouellette, L. L., Braman, D. and Mandel, G. (2012). ‘The polarizing impact of science literacy and numeracy on perceived climate change risks’. Nature Climate Change 2, pp. 732–735. https://doi.org/10.1038/nclimate1547 .

-

Kertz, C. L. and Ohanian, R. (1992). ‘Source credibility, legal liability and the law of endorsements’. Journal of Public Policy and Marketing 11 (1), pp. 12–23. URL: https://www.jstor.org/stable/30000021 .

-

Kotcher, J. E., Myers, T. A., Vraga, E. K., Stenhouse, N. and Maibach, E. W. (2017). ‘Does engagement in advocacy hurt the credibility of scientists? Results from a randomized national survey experiment’. Environmental Communication 11 (3), pp. 415–429. https://doi.org/10.1080/17524032.2016.1275736 .

-

Larson, E. L. (2003). Trial and error: the American controversy over creation and evolution. 3rd ed. New York, NY, U.S.A.: Oxford University Press.

-

Lewandowsky, S., Gignac, G. E. and Vaughan, S. (2013). ‘The pivotal role of perceived scientific consensus in acceptance of science’. Nature Climate Change 3 (4), pp. 399–404. https://doi.org/10.1038/nclimate1720 .

-

Lombardi, D., Seyranian, V. and Sinatra, G. M. (2014). ‘Source effects and plausibility judgments when reading about climate change’. Discourse Processes 51 (1-2), pp. 75–92. https://doi.org/10.1080/0163853x.2013.855049 .

-

Masci, D. (2017). ‘For Darwin Day, 6 facts about the evolution debate’. Pew Research Center . URL: http://www.pewresearch.org/fact-tank/2017/02/10/darwin-day/ .

-

McCright, A. M. and Dunlap, R. E. (2003). ‘Defeating Kyoto: the conservative movement’s impact on U.S. climate change policy’. Social Problems 50 (3), pp. 348–373. https://doi.org/10.1525/sp.2003.50.3.348 .

-

McCright, A. M., Dunlap, R. E. and Xiao, C. (2013). ‘Perceived scientific agreement and support for government action on climate change in the U.S.A.’ Climatic Change 119 (2), pp. 511–518. https://doi.org/10.1007/s10584-013-0704-9 .

-

McCroskey, J. C. and Teven, J. J. (1999). ‘Goodwill: a reexamination of the construct and its measurement’. Communication Monographs 66 (1), pp. 90–103. https://doi.org/10.1080/03637759909376464 .

-

McKnight, J. and Coronel, J. C. (2017). ‘Evaluating scientists as sources of science information: evidence from eye movements’. Journal of Communication 67 (4), pp. 565–585. https://doi.org/10.1111/jcom.12317 .

-

Metcalf, G. E. and Weisbach, D. (2009). ‘The design of a carbon tax’. Harvard Environmental Law Review 33 (2), pp. 499–556.

-

Myers, T. A., Maibach, E. W., Roser-Renouf, C., Akerlof, K. and Leiserowitz, A. A. (2012). ‘The relationship between personal experience and belief in the reality of global warming’. Nature Climate Change 3 (4), pp. 343–347. https://doi.org/10.1038/nclimate1754 .

-

Nelkin, D. (1995). Selling Science. How the Press Covers Science and Technology. Revised edition. New York, U.S.A.: Freeman and company.

-

Nisbet, M. and Markowitz, E. M. (2014). ‘Understanding public opinion in debates over biomedical research: looking beyond political partisanship to focus on beliefs about science and society’. PLoS ONE 9 (2), e88473. https://doi.org/10.1371/journal.pone.0088473 .

-

Nisbet, M. C. and Fahy, D. (2015). ‘The need for knowledge-based journalism in politicized science debates’. The Annals of the American Academy of Political and Social Science 658 (1), pp. 223–234. https://doi.org/10.1177/0002716214559887 .

-

Ohanian, R. (1990). ‘Construction and validation of a scale to measure celebrity endorsers’ perceived expertise, trustworthiness and attractiveness’. Journal of Advertising 19 (3), pp. 39–52. https://doi.org/10.1080/00913367.1990.10673191 .

-

Patterson, J. W. (2014). ‘A reflection on the Bill Nye-Ken Hamm debate’. Reports of the National Center for Science Education 34 (2), pp. 1–3. URL: http://reports.ncse.com/index.php/rncse/article/view/298 .

-

Pornpitakpan, C. (2004). ‘The Persuasiveness of Source Credibility: A Critical Review of Five Decades’ Evidence’. Journal of Applied Social Psychology 34 (2), pp. 243–281. https://doi.org/10.1111/j.1559-1816.2004.tb02547.x .

-

Rainie, L. (2017). ‘U.S. public trust in science and scientists’. Pew Research Center: Internet & Technology . URL: http://www.pewinternet.org/u-s-public-trust-in-science .

-

Scheufele, D. A. (2014). ‘Science communication as political communication’. Proceedings of the National Academy of Sciences 111 (Supplement 4), pp. 13585–13592. https://doi.org/10.1073/pnas.1317516111 .

-

Simis, M. J., Madden, H., Cacciatore, M. A. and Yeo, S. K. (2016). ‘The lure of rationality: why does the deficit model persist in science communication?’ Public Understanding of Science 25 (4), pp. 400–414. https://doi.org/10.1177/0963662516629749 .

-

Sturgis, P. and Allum, N. (2004). ‘Science in Society: Re-Evaluating the Deficit Model of Public Attitudes’. Public Understanding of Science 13 (1), pp. 55–74. https://doi.org/10.1177/0963662504042690 .

-

van der Linden, S. L., Leiserowitz, A. A., Feinberg, G. D. and Maibach, E. W. (2015). ‘The Scientific Consensus on Climate Change as a Gateway Belief: Experimental Evidence’. PLOS ONE 10 (2), e0118489. https://doi.org/10.1371/journal.pone.0118489 .

-

Wong, E. (18th November 2016). ‘Trump has called climate change a Chinese hoax. Beijing says it is anything but’. The New York Times . URL: https://www.nytimes.com/2016/11/19/world/asia/china-trump-climate-change.html .

Author

Dr. David Morin is an Assistant Professor in the Department of Communication at Utah Valley University. His scholarly interests include science communication, political communication, and priming research. E-mail: david.morin@uvu.edu .