1 Introduction

The number of citizen science projects has grown significantly in the past two decades, emerging as a valuable resource for conducting research across a variety of fields [Kullenberg and Kasperowski, 2016 ]. Public engagement in these projects can range from spending less than a minute classifying an image online to engaging in multiple steps of the scientific process (e.g., asking questions, collecting data, analyzing data, disseminating findings; Bonney et al. [ 2009b ]). As citizen science continues to grow, there may be growing demand for participation resulting in increased competition among projects to recruit and retain those interested in engaging in the activities associated with these projects [Sauermann and Franzoni, 2015 ].

Researchers have begun examining participant motivations in an effort to determine best practices for recruiting and retaining volunteers. These studies reveal a range of intrinsic and extrinsic motivators and demonstrate great diversity in what drives participation. Projects requiring data collection in the field have shown volunteers motivated by environmental conservation and social interaction with like-minded individuals [Bradford and Israel, 2004 ; Crall et al., 2013 ; Wright et al., 2015 ], whereas contributing to science and interest in science emerge as primary motivators in online environments [Raddick et al., 2010 ; Cox et al., 2015b ; Curtis, 2015 ; Land-Zandstra et al., 2015 ].

Online and crowdsourced citizen science projects, those requiring data collection or data processing tasks to be distributed across the crowd, represent a type of citizen science that appeals to many motivations [Cox et al., 2015b ]. These projects span diverse fields, including astronomy, archaeology, neurology, climatology, physics, and environmental science and exhibit a great deal of heterogeneity in their design, implementation, and level of participant engagement [Franzoni and Sauermann, 2014 ]. They can support volunteers interested in only making minimum contributions at one point in time, which might be more appealing than projects requiring leaving one’s home, a long-term commitment, or financial obligations [Jochum and Paylor, 2013 ]. Opportunities for limited engagement may facilitate recruitment of new volunteers interested in contributing to a worthy cause while potentially finding participants motivated to stay with a project long-term.

Volunteer retention in crowdsourced projects is one of the keys to project success as research has demonstrated that a small percentage of volunteers provide a disproportionately large share of the contributions [Sauermann and Franzoni, 2015 ]. These individuals invest more in the projects not just through their data processing contributions but also through their interaction with other project members (volunteers and researchers). Through this engagement, they help form an online community dedicated to delivering notable project outcomes. These dedicated volunteers likely have a significant role in the retention of other volunteers by providing social connections among individuals with similar interests and motivations [Wenger, McDermott and Snyder, 2002 ; Wiggins and Crowston, 2010 ].

Collective action theory has been applied in this context, where a group with common interests takes actions to reach collective goals [Triezenberg et al., 2012 ]. Based on this theory, projects could launch campaigns that seek participation from existing members interested in advancing the target goals of the collective. Social media and other online platforms could facilitate communications across members while potentially drawing new recruits into the community [Triezenberg et al., 2012 ]. However, little research has examined the ability of a marketing campaign using traditional or social media channels to support re-engagement of existing members or the recruitment and retention of new volunteers into these projects [Robson et al., 2013 ].

Crimmins et al. [ 2014 ] examined the ability of six email blasts to registered participants of Nature’s Notebook, a citizen science program of the USA National Phenology Network, to increase participation in the project. Participation requires making observations of phenological events at a field site of one’s choosing and uploading those data to an online data repository. The targeted campaign resulted in a 184% increase in the number of observations made (N=2,144) and also increased the number of active participants [Crimmins et al., 2014 ]. The study did not look at recruitment of individuals not already registered with the project or other methods of recruitment outside the email listserv, but it demonstrated a successful approach for re-engaging an existing audience.

Robson et al. [ 2013 ] compared social networking marketing strategies to more traditional media promotions for a citizen science project named Creek Watch. Participation required downloading an application to a smartphone from the project website and using it to take a picture of a local water body and make simple observations. The study found that social networking and press releases were equally effective means for increasing project awareness (i.e., traffic to project website) and recruitment (i.e., download of data collection app from website) but that targeting local groups with interest in the topic was most effective at increasing data collection. The study did not, however, examine retention rates associated with the project before and during the campaign.

We built on the efforts of these previous studies to more fully examine the ability of multiple marketing strategies to increase project awareness and drive project recruitment and retention in an online crowdsourced citizen science project (Season Spotter). We used web analytics, commonly used by businesses to develop and guide online marketing strategies to drive consumer behavior, to inform our study. We wanted to know which marketing strategies used in the campaign were most effective at driving traffic to Season Spotter and whether the campaign could increase and sustain project engagement over time.

2 Materials and methods

2.1 About Season Spotter

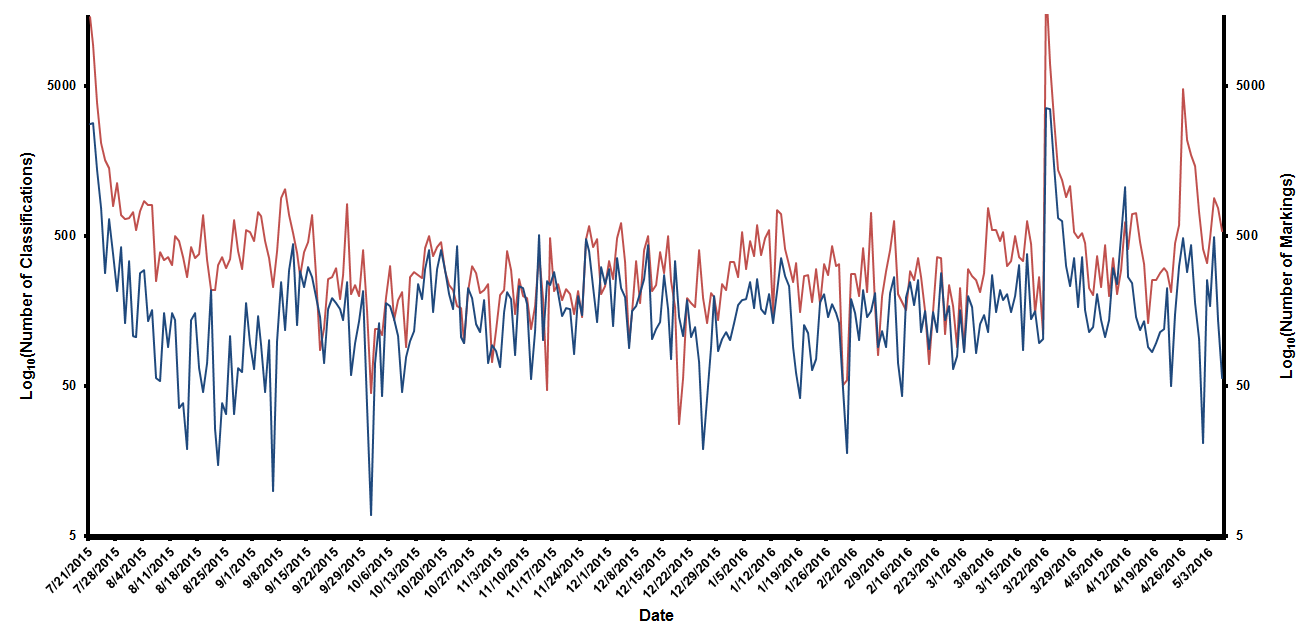

Season Spotter is one of 42 projects hosted on the online citizen science platform, the Zooniverse ( www.zooniverse.org ). The Zooniverse currently has over 1.4 million participants that engage in these projects, performing big data processing tasks across a variety of scientific fields [Masters et al., 2016 ]. The Season Spotter project (launched on July 21, 2015) supports the analysis of images uploaded to the Zooniverse from the PhenoCam network [Kosmala et al., 2016 ]. The network uses digital cameras to capture seasonal changes in plants over time across 300 sites, a majority of which are located in North America [Richardson et al., 2009 ; Sonnentag et al., 2012 ; Brown et al., 2016 ]. Participants that engage in the Season Spotter project can do one of two tasks: classify images (answer a series of questions about one image or two images side by side) or mark images (draw a line around a specified area or object in an image). Each image is classified and marked by multiple volunteers to ensure data quality before being retired. Prior to launching our marketing campaign on March 3, 2016, approximately 7,000 volunteers had classified 105,000 images, and marked 45,000 images.

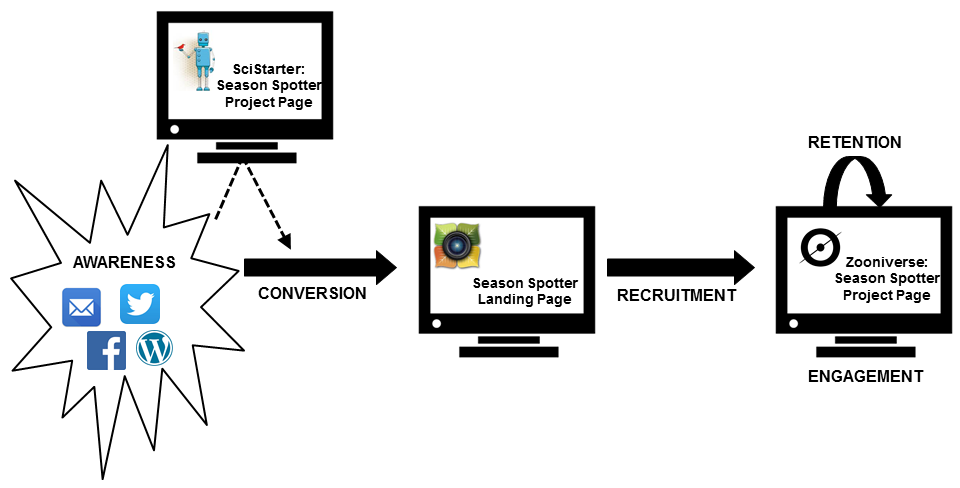

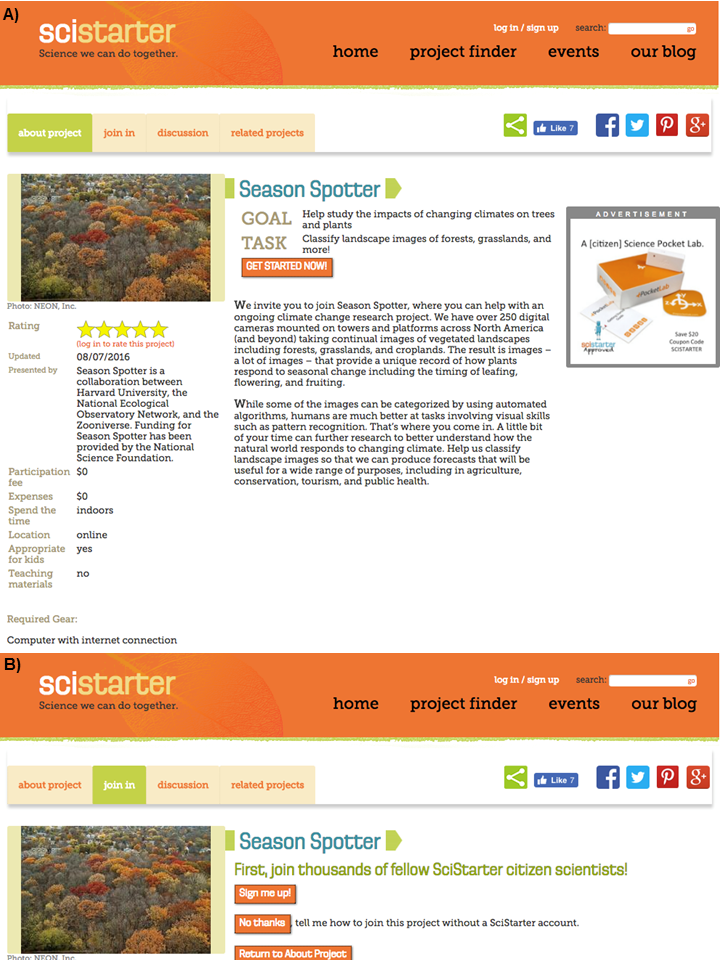

Initial recruitment to the project and ongoing outreach efforts directed potential participants to the project’s landing page ( www.SeasonSpotter.org ; Figure 1 ). Call to action buttons on the site allow site visitors to select whether they want to classify or mark images or visit Season Spotter’s Facebook ( www.facebook.com/seasonspotter ), Twitter ( www.twitter.com/seasonspotter ), or blog pages ( www.seasonspotter.wordpress.com ). A click to classify or mark images takes visitors to pages within the Zooniverse where they perform these tasks (Figure 1 ). Once in the Zooniverse, visitors can also click tabs to learn more about the research (Research tab), read frequently asked questions (FAQ tab), participate in a discussion forum with other volunteers and the research team (Talk tab), or be redirected to the project’s blog site where researchers provide project updates (Blog tab). Prior to launching the campaign, our Facebook and Twitter accounts were connected to our blog site so that twice-weekly blog posts automatically posted to those accounts. No additional posts or tweets were sent out prior to the campaign.

2.2 Marketing campaign

We conducted our marketing campaign from March 3 to April 4, 2016. The campaign challenged targeted audiences to complete the classification of 9,512 pairs of spring images in one month to produce the data needed to address a research question being included in an upcoming manuscript submission [Kosmala et al., 2016 ]. Our study did not have funding to examine the motivations or demographics of current Season Spotter participants, but we identified audiences to target based on external research for participation in other Zooniverse projects [Raddick et al., 2010 ; Raddick et al., 2013 ; Cox et al., 2015b ]. In addition to the Zooniverse, we partnered with another project (Project Budburst; www.budburst.org ) run by one of our affiliate organizations, the National Ecological Observatory Network (NEON; www.neonscience.org ), to promote the campaign.

We also partnered with SciStarter ( www.scistarter.com ) for campaign promotion. SciStarter serves as a web-based aggregator of information, videos, and blogs on citizen science projects globally. The organization helps connect volunteers to projects that interest them using targeted marketing strategies (Twitter, Facebook, newsletter, live events, multiple blogs through a syndicated blog network run by SciStarter, APIs to share the Project Finder). Project administrators can also purchase advertising space on the home page or targeted project pages. Season Spotter created a project page on SciStarter on November 24, 2015 (Figure 2 ).

For the duration of the campaign, we added a progress bar to SeasonSpotter.org so visitors could see the progress in reaching the campaign goal (Figure 3 ). Citizen science research has demonstrated the importance of providing continuous feedback to volunteers [Bonney et al., 2009a ; Crimmins et al., 2014 ]. Following best practices [Landing Page Conversion Course, 2013 ], we also highlighted the call to action button on the landing page to direct visitors to participate in the target task (classify) instead of the non-target task (mark; Figure 3 ).

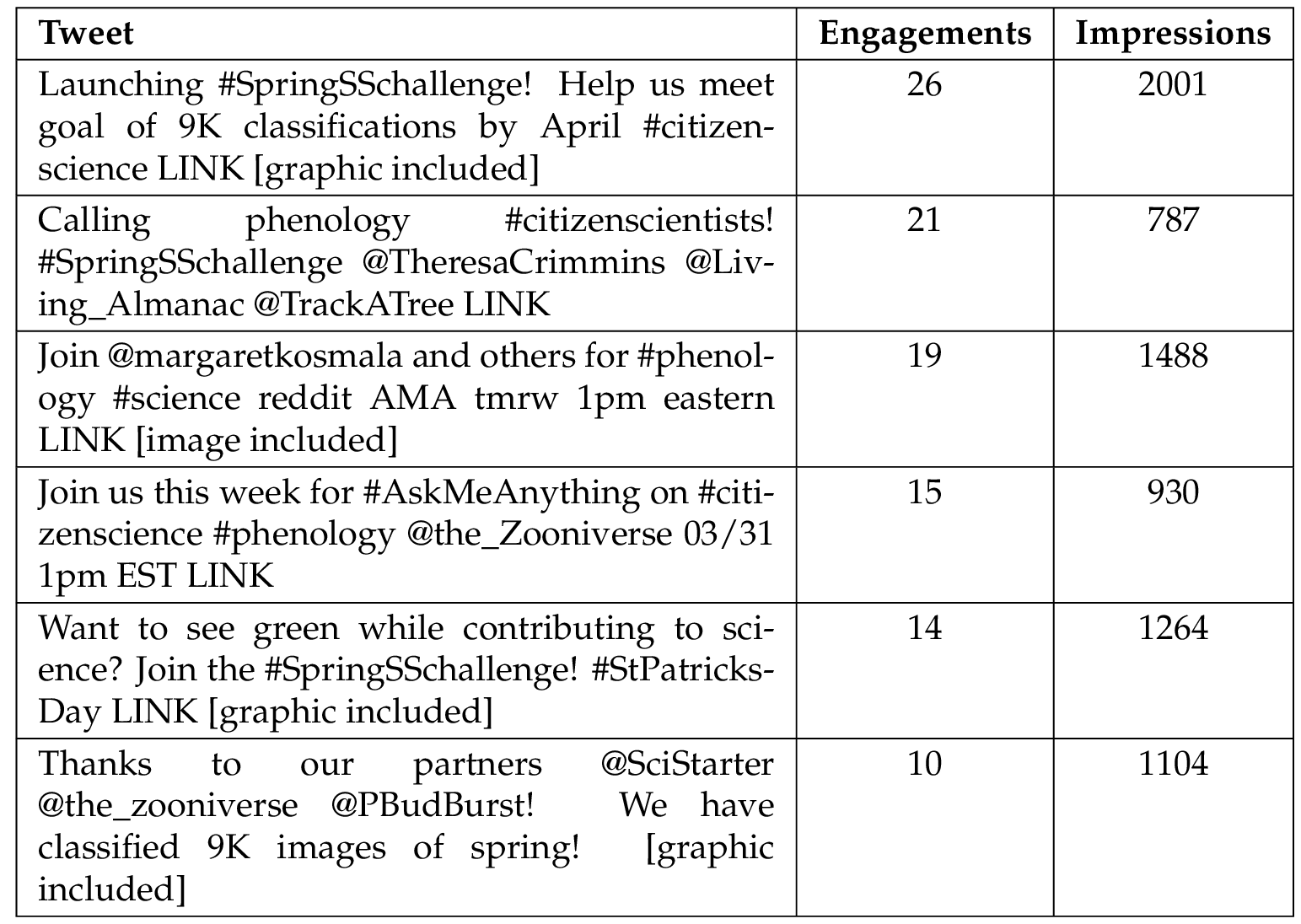

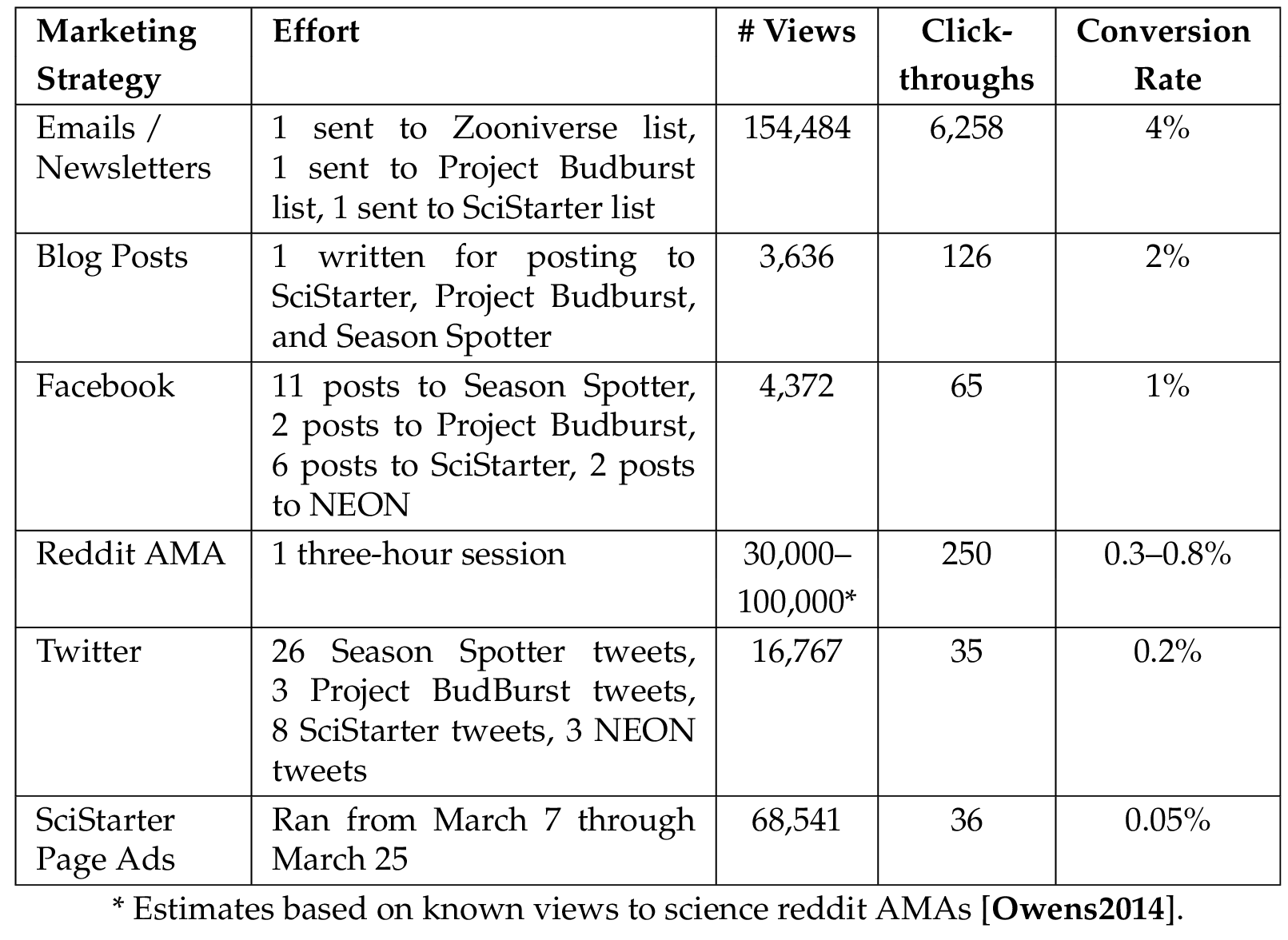

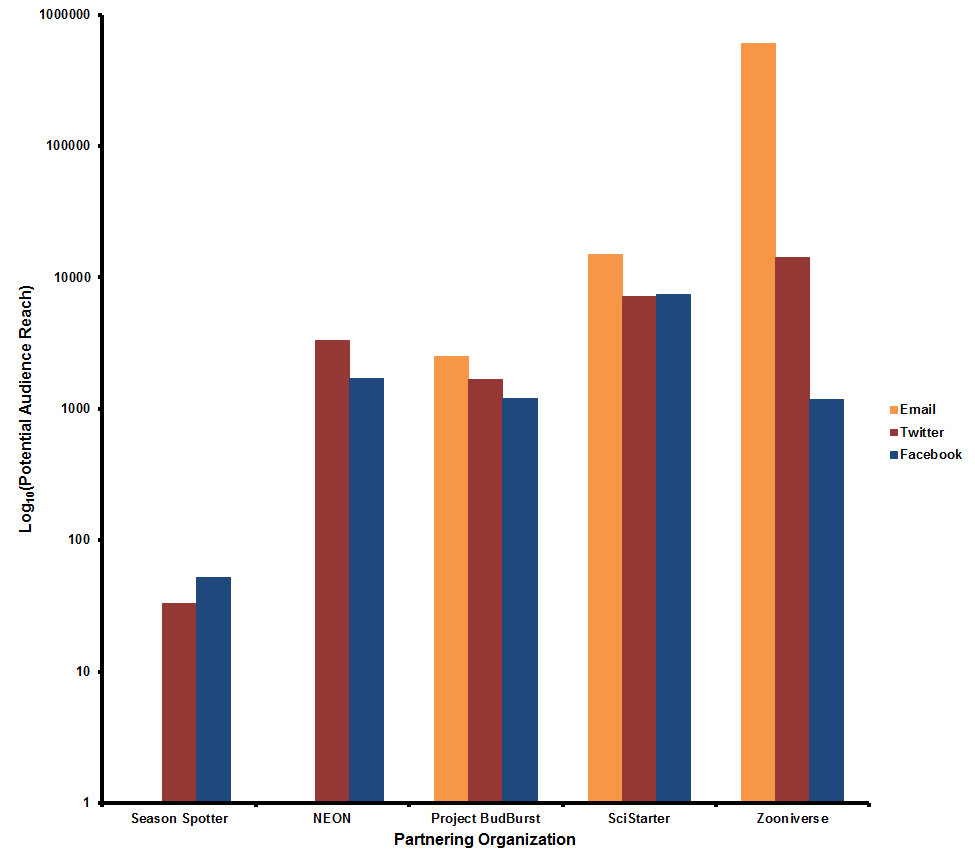

We followed typical online marketing practices for posting to social media accounts during the campaign: pinning campaign announcement to top of Facebook and Twitter pages, using images and videos in posts, using popular hashtags, including relevant Twitter handles, using only two hashtags per tweet, and using a common campaign hashtag across tweets (#SpringSSchallenge; Lee, 2015 ; Moz, 2016b ; Moz, 2016a ; Twitter Inc., 2016 ). We also disconnected automatic blog posting to Facebook and Twitter accounts to better track and analyze the effects of the campaign and sent out tweets targeting the campaign that were relevant to specific days (e.g., #StPatricksDay; Table 1 ). We posted to Season Spotter’s blog, Facebook, and Twitter announcing the campaign using a specially designed campaign graphic (top of Figure 3 ). SciStarter used this same graphic for its banner and project page ads, which ran from March 7–25. During the campaign, Season Spotter posted 11 times to Facebook and sent out 26 tweets compared to eight posts and six tweets the month prior to the campaign. While social media management sites (e.g., Buffer) can automate delivery of posts at times of day followers are more likely to see them, we manually submitted posts during our campaign to not interfere with our web analytics. Therefore, we posted at times staff were available to do so. The marketing effort for each partner organization is provided in Table 2 , and the reach of their existing audiences is provided in Figure 4 .

To address the participant motivation of “interest in science” for other Zooniverse projects, Season Spotter also hosted a three-hour Reddit.com Ask Me Anything (AMA) as part of the campaign on March 31 [Kosmala, Hufkens and Gray, 2016 ]. Reddit is a social media platform (home to 234 million users and 8 billion monthly page views) where users submit content that receives up or down votes from other users, which are then used to organize and promote content [Reddit Inc., 2016 ]. The science forum, or “subreddit” (www.reddit.com/r/science/), facilitates discussions among Reddit users with an interest in science. In science AMAs, members of the public ask questions of scientists in a moderated online discussion forum. The scientists are asked to prioritize answering those questions receiving the most up-votes by users. As part of the Season Spotter AMA, a description of the project and research goals were provided on the Reddit host site along with a link to SeasonSpotter.org.

2.3 Analysis

We used Google Analytics to track and report website traffic to SeasonSpotter.org, the Season Spotter project pages on SciStarter, and the Season Spotter project pages in the Zooniverse. To track the success of each independent marketing strategy, we assigned unique Urchin Traffic Monitor (UTM) parameters (tags added to a URL to track clicks on a link in Google Analytics) to each link within a tweet, post, blog, newsletter, or email. Google Analytics provides the number of sessions (number of grouped interactions of a single user that take place on a website ending after 30 minutes of inactivity), new users (number of users that have not visited the site before a designated time period), page views (number of times a user views a page; there can be multiple page views per session), and unique page views (the number of user sessions per page; there can be only one unique page view per individual per session).
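As an illustration of the tagging step, the following minimal sketch builds a UTM-tagged link with Python's standard library. The parameter names `utm_source`, `utm_medium`, and `utm_campaign` are the standard Google Analytics campaign parameters; the helper name `tag_url` and the specific values (`twitter`, `social`, `spring_challenge`) are hypothetical and are not the tags used in the study.

```python
from urllib.parse import urlencode, urlparse, parse_qs

def tag_url(base_url, source, medium, campaign):
    """Append UTM query parameters to a campaign link (hypothetical helper)."""
    params = {
        "utm_source": source,      # where the link was posted, e.g. a platform
        "utm_medium": medium,      # channel type, e.g. social or email
        "utm_campaign": campaign,  # shared name for the whole campaign
    }
    # Use "&" if the URL already carries a query string, otherwise "?".
    separator = "&" if urlparse(base_url).query else "?"
    return base_url + separator + urlencode(params)

# Illustrative values only; the study's actual tag values are not reported here.
tweet_link = tag_url("http://www.seasonspotter.org/", "twitter", "social", "spring_challenge")
print(tweet_link)
```

Giving each tweet, post, or email its own combination of values is what allows sessions to be attributed to a single marketing strategy afterwards.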

All statistical analyses were performed using SPSS (Version 23). We compared campaign metrics associated with the flow of possible participation (awareness, conversion, recruitment, engagement, retention; Figure 1 ) at two points in time: one month prior to the campaign as an established baseline (February 8–March 2, 2016) and the month of the campaign (March 3–April 4, 2016). For these comparisons, we conducted independent samples t-tests and used Levene’s test to examine equality of variances. If variances were unequal, we used an approximation of the t-statistic that assumes unequal variances. We assessed all variables for normality using the Shapiro-Wilk test and transformed right-skewed data when needed to improve normality.
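The unequal-variance approximation mentioned above is commonly Welch's t-test. The sketch below computes the Welch t-statistic and its Welch-Satterthwaite degrees of freedom with the standard library only. It is not the SPSS procedure used in the study, and the two samples are invented daily counts used purely for illustration.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(a, b):
    """Return (t, df) for Welch's unequal-variance t-test on two samples."""
    # Squared standard error of each sample mean (sample variance / n).
    va = variance(a) / len(a)
    vb = variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    # Welch-Satterthwaite approximation to the degrees of freedom.
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

# Invented example data, NOT values from the study.
before = [1, 2, 3, 4, 5]
during = [2, 4, 6, 8, 10]
t, df = welch_t(before, during)
print(f"t = {t:.3f}, df = {df:.2f}")  # t = -1.897, df = 5.88
```

A p-value would additionally require the t distribution, which statistical packages provide.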

2.4 Awareness

We defined awareness as the number of contacts made through the campaign (Figure 1 ). To assess overall awareness, we summed the number of times Twitter put a campaign tweet in someone’s timeline or search results (Twitter impressions), the number of times Facebook put a campaign post in a user’s newsfeed (Facebook post reach), blog views, and the number of emails opened (not received) across all our partner and affiliate organizations. We recorded the number of views to SciStarter pages where the banner or project page ads were displayed. We also assigned a number of views to our Reddit AMA, which we estimated conservatively at 30,000 based on existing data for science subreddit AMAs [Owens, 2014 ]. To determine whether project awareness increased during the campaign, we conducted a t-test using metrics associated with Season Spotter’s Facebook and Twitter accounts (Twitter impressions, Twitter engagements, Facebook post reach).

Because SciStarter marketing sometimes directed individuals to the Season Spotter project page on SciStarter instead of SeasonSpotter.org, we also conducted a t-test analysis using unique page views to the project page on SciStarter to see if the campaign increased traffic to that site.

2.5 Conversion

We defined conversion as the number of times an individual arrived at SeasonSpotter.org either indirectly through SciStarter or directly from any of our marketing strategies (Figure 1 ). To calculate the conversion rate, we divided the number of sessions by the number of total views associated with each marketing strategy. We also performed a two-way ANOVA to assess whether the number of new users or total sessions to SeasonSpotter.org was influenced by one of three referral sources prior to or during the campaign: direct or organic, third party, or partner. Direct referrals occur when a visitor types the URL directly into a browser, whereas organic referrals occur when a visitor finds a site through an Internet browser search. We identified third party referrals as those referrals not coming from one of our affiliate or partnering organizations, and partner referrals were those coming from one of our partnering organizations (Figure 4 ). To assess increases in new users to SeasonSpotter.org during the campaign, we conducted a t-test analysis of new users per day.
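The conversion rate calculation is a simple ratio, sketched below. The function name `conversion_rate` is hypothetical. The worked figures are the SciStarter project page numbers reported in the results (175 click-throughs from 1,395 views), used here on the assumption that click-throughs stand in for sessions.

```python
def conversion_rate(sessions, views):
    """Sessions (or click-throughs) divided by total views for one strategy."""
    if views <= 0:
        raise ValueError("views must be positive")
    return sessions / views

# SciStarter project page: 175 click-throughs out of 1,395 views.
rate = conversion_rate(175, 1395)
print(f"{rate:.1%}")  # 12.5%, reported as 13% after rounding
```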

2.6 Recruitment

We defined recruitment as the rate at which people visiting SeasonSpotter.org clicked through to perform tasks in the Zooniverse (Figure 1 ). We added Google Analytics’ event tracking code to SeasonSpotter.org to collect data on the number of times (events) a user clicked a call to action button on the website. For our recruitment analysis, we divided our data into campaign target events (classify images) and other non-target events (mark images or visit Season Spotter Facebook, Twitter, or blog pages). We calculated a recruitment conversion rate for each day of the campaign as the total number of target or non-target events divided by the total number of unique page views.

To determine if we sustained the same level of recruitment following the campaign as we did during the campaign, we ran a t-test to examine differences in the number of target events and non-target events that occurred during and one month following the campaign (April 5–May 4, 2016).

2.7 Engagement

We defined engagement as the number of target tasks (classify images) and non-target tasks (mark images) completed for the project within the Zooniverse (Figure 1 ). We examined differences in means with a t-test prior to and during the campaign for total number of classifications, total number of classification sessions (group of classifications in which the delay between two successive images is less than one hour), total number of participants classifying images, total number of marked images, total number of marking sessions, and total number of participants marking images.
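The session definition above (successive classifications less than one hour apart belong to the same session) can be sketched as follows. The function name `split_sessions` and the timestamps are hypothetical; the study's own processing code is not shown in the text.

```python
from datetime import datetime, timedelta

def split_sessions(timestamps, max_gap=timedelta(hours=1)):
    """Group one participant's classification times into sessions.

    A new session starts whenever the delay since the previous
    classification is max_gap or longer.
    """
    sessions = []
    for ts in sorted(timestamps):
        if sessions and ts - sessions[-1][-1] < max_gap:
            sessions[-1].append(ts)   # continue the current session
        else:
            sessions.append([ts])     # start a new session
    return sessions

# Invented timestamps for a single participant.
times = [
    datetime(2016, 3, 3, 10, 0),
    datetime(2016, 3, 3, 10, 20),
    datetime(2016, 3, 3, 10, 50),
    datetime(2016, 3, 3, 12, 30),  # 1 h 40 min after the previous one
    datetime(2016, 3, 3, 12, 45),
]
sessions = split_sessions(times)
print([len(s) for s in sessions])  # [3, 2]
```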

Because SeasonSpotter.org is a separate website from the Zooniverse and we did not link sessions or users on SeasonSpotter.org to sessions or users in the Zooniverse, our level of granularity per user across platforms was reduced. However, Google Analytics tracking on the Zooniverse website provided data on what percentage of referrals to Season Spotter’s task pages came from SeasonSpotter.org versus other referral locations. We summed the total number of sessions from each referring source to the pages for classifying images (our target) within the Zooniverse prior to and during the campaign.

2.8 Retention

We examined two types of project retention in our analyses. Short-term retention was defined as the length of time an individual spends working on tasks during one session. Long-term retention was defined as the number of days over which an individual engaged with the project (i.e., number of days between a participant’s first and last classification), regardless of the number of classifications or time spent classifying on individual days. Although our challenge was interested in increased time spent classifying images in the short term (the first measure of retention), the project aimed to retain these volunteers in the long run after the campaign challenge ended to continue supporting the project over its lifetime (the second measure of retention).

For short-term retention, we examined differences in the total time spent classifying and marking images in one session prior to and during the campaign. For long-term retention, we examined the number of days retained for users that signed on at any time during the campaign. We counted the number of days retained up until 30 days after each participant’s first classification. To compare the retention rates of individuals that signed on during the campaign to a baseline, we also calculated the number of days retained for individuals that did their first classification within the two months prior to the campaign (December 31, 2015–February 1, 2016). We then calculated the number of days these individuals were retained up until 30 days after each participant’s first classification. We performed a log rank test to determine if there were differences in the retention distributions before and during the campaign. To examine the number of existing registered users that may have re-engaged with the project as a result of the campaign, we calculated the number of registered users that contributed to the project during the campaign period that had not contributed for more than thirty days before the campaign started.
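One possible reading of the long-term retention measure is sketched below: the number of days between a participant's first classification and their last classification within the 30-day window. The function name `days_retained`, the handling of the window boundary, and the dates are assumptions made for illustration.

```python
from datetime import date

def days_retained(classification_dates, window_days=30):
    """Days between first and last classification, capped at a 30-day window."""
    first = min(classification_dates)
    # Ignore activity more than window_days after the first classification.
    in_window = [d for d in classification_dates if (d - first).days <= window_days]
    return (max(in_window) - first).days

# Invented dates: the May 1 classification falls outside the 30-day window.
dates = [date(2016, 3, 3), date(2016, 3, 5), date(2016, 3, 20), date(2016, 5, 1)]
print(days_retained(dates))               # 17
print(days_retained([date(2016, 3, 3)]))  # 0, a single-session participant
```

Under this reading, a participant who never returns after their first day has a retention of zero days.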

3 Results

We report results of t-test and ANOVA analyses as mean ± standard deviation.

3.1 Awareness

Through our marketing campaign, we made 254,101 contacts (Table 2 ). This number is likely an overestimate of the number of people reached because the same individual may have been exposed to multiple marketing strategies. Over the course of the campaign, the Season Spotter project gained two new Facebook page likes and 50 new Twitter followers.

More Twitter impressions occurred per tweet on Season Spotter’s account during the campaign ( ) compared to before ( ; , ). Engagements per tweet also significantly increased ( ; ; , ), but our Facebook post reach did not ( ; ; , ). Tweets with ten or more engagements during the campaign period included those launching the challenge, tagging other citizen science projects with a similar mission, referencing the AMA, and referencing a trending topic (Table 1 ). Therefore, targeted social media strategies used through the campaign were more effective than automatically generated tweets and posts via the blog at reaching and engaging the audience reached, at least on Twitter.

Marketing strategies employed by SciStarter also increased visitors to the Season Spotter project page each day during ( ) compared to before the campaign ( ; , ), further supporting the campaign’s effectiveness at increasing project awareness.

3.2 Conversion

Our marketing campaign drove more daily users to SeasonSpotter.org ( ) than arrived before the campaign ( ; , ). Conversion rates for marketing strategies that directed individuals to SeasonSpotter.org averaged 1% (Table 2 ). Emails had the highest conversion rate (4%) with the project page ads hosted on SciStarter having the lowest conversion rate (0.05%; Table 2 ). Facebook posts, tweets, and blog posts from SciStarter directed individuals to the Season Spotter project page on SciStarter. Therefore, the SciStarter project page is not directly comparable to other approaches. The conversion rate from the SciStarter project page to SeasonSpotter.org was 13% with 1,395 views and 175 click-throughs. Each campaign strategy therefore resulted in a small fraction of individuals made aware of the campaign visiting SeasonSpotter.org.

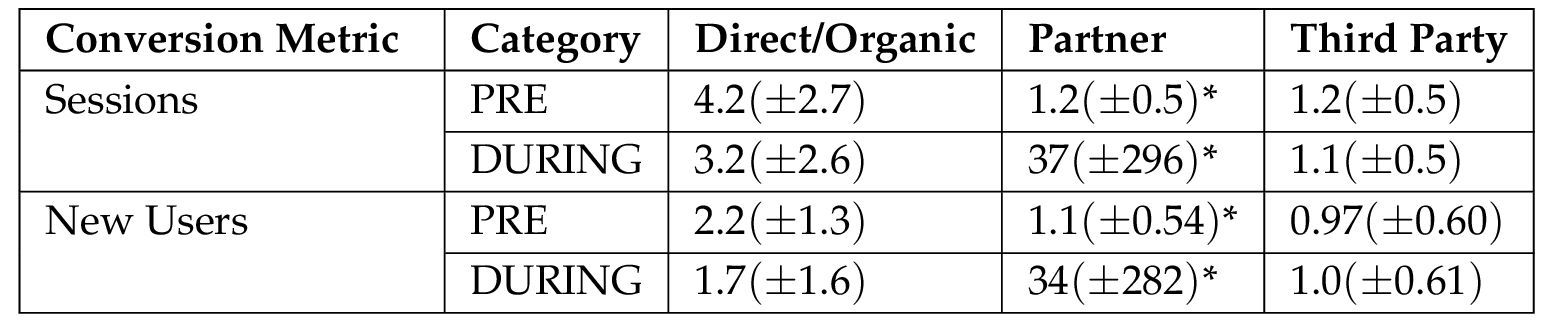

There was a statistically significant interaction when comparing the effects of the referral source (direct/organic, partner, third party) on the number of sessions prior to and during the campaign ( , , partial ). Therefore, we performed post hoc comparisons using the least significant difference (LSD) method. The difference in the number of sessions per day before and during the campaign was significant for partner referrals ( , , partial ) but not direct and organic referrals ( , , partial ) or third party referrals ( , , partial ; Table 3 ).

There was a statistically significant interaction when examining the effects of the referral source on the number of new users prior to and during the campaign ( , , partial ). LSD results showed a statistical difference in the number of daily new users before and during the campaign for partner referrals ( , , partial ) but not from visitors referred directly or organically ( , , partial ) or those referred from a third party ( , , partial ; Table 3 ). These results indicate that our campaign successfully drove traffic to our site through our recruitment methods, and that traffic arriving at Season Spotter via other means was unaffected by the campaign.

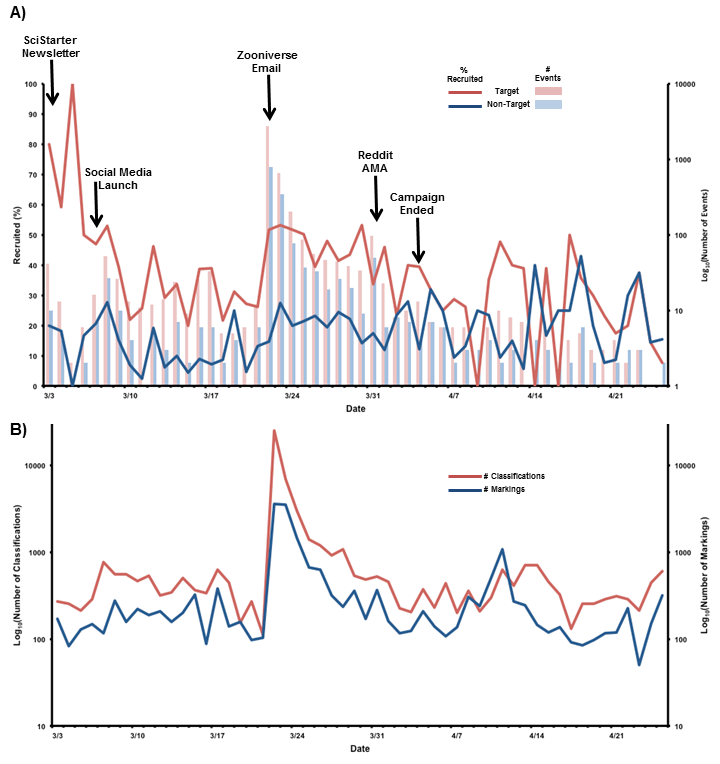

3.3 Recruitment

Recruitment analysis found that, on average, only 42.6% of visitors to SeasonSpotter.org clicked through to classify images in the Zooniverse. Of the rest, 15.6% of visitors clicked through to other pages not targeted by our campaign. More target events occurred daily during the campaign ( ) compared to the month following the campaign ( ; , ; Figure 5 A). The same held true for non-target events ( ; ; , ; Figure 5 A). We also found a significant difference between the number of daily sessions on Season Spotter’s classify pages in the Zooniverse one month prior to ( ) and during the campaign ( ; , ; Figure 5 A). These data demonstrate that traffic driven to SeasonSpotter.org by the campaign resulted in increased clicks on the site’s call to action buttons. However, a low recruitment rate resulted in substantial loss of potential participants at this step in the process (Figure 1 ).

3.4 Engagement

Volunteers classified the 9,512 pairs of spring images by March 23, meeting our goal before the official end of the campaign. At that time, we switched our campaign message for participants to begin classifying an additional 9,730 autumn images and changed our progress bar to reflect the transition on SeasonSpotter.org. Over the course of the campaign, 1,598 registered Zooniverse users and 4,008 unregistered users from 105 countries made 56,756 classifications.

Results showed statistically significant increases in the total number of classifications per day ( ; ; , ), classification sessions per day ( ; ; , ), and the number of volunteers performing classifications each day ( ; ; , ) during the campaign compared with one month prior to the campaign (Figures 5 B, 6 ). Although not a target activity of the campaign, we also found significant increases in the total number of images marked ( ; ; , ), marking sessions ( ; ; , ), and the number of volunteers marking images ( ; ; , ; Figures 5 B, 6 ). These findings suggest that the campaign resulted in increased engagement in target and non-target associated activities.

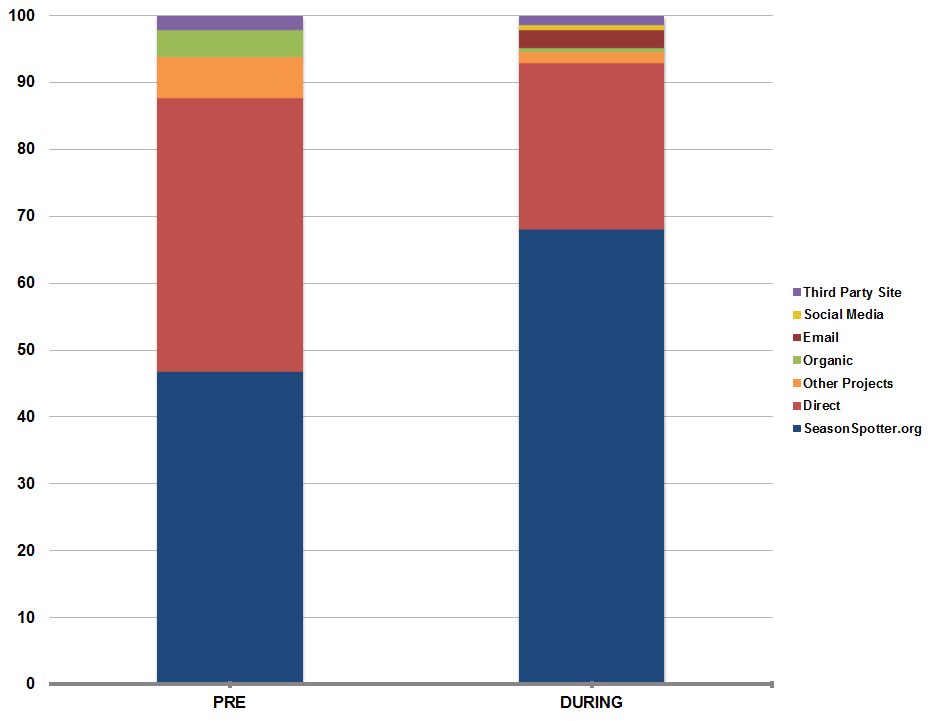

Since the Season Spotter project was created, 75% of all referrals to the classification pages in the Zooniverse have been from SeasonSpotter.org. The percentage of referrals coming from different sources shifts prior to and during the campaign (Figure 7 ). SeasonSpotter.org accounted for 46% of referrals one month prior to the campaign, and the percentage increased to 68% during the campaign. Direct referrals likely account for regular visitors that have the Zooniverse project URL saved in their web browser. We also saw the addition of referrals from emails and social media during the campaign while no referrals were driven from these sources the month prior (Figure 7 ). These data demonstrate that increased engagement in the Zooniverse resulted from increased traffic to SeasonSpotter.org.

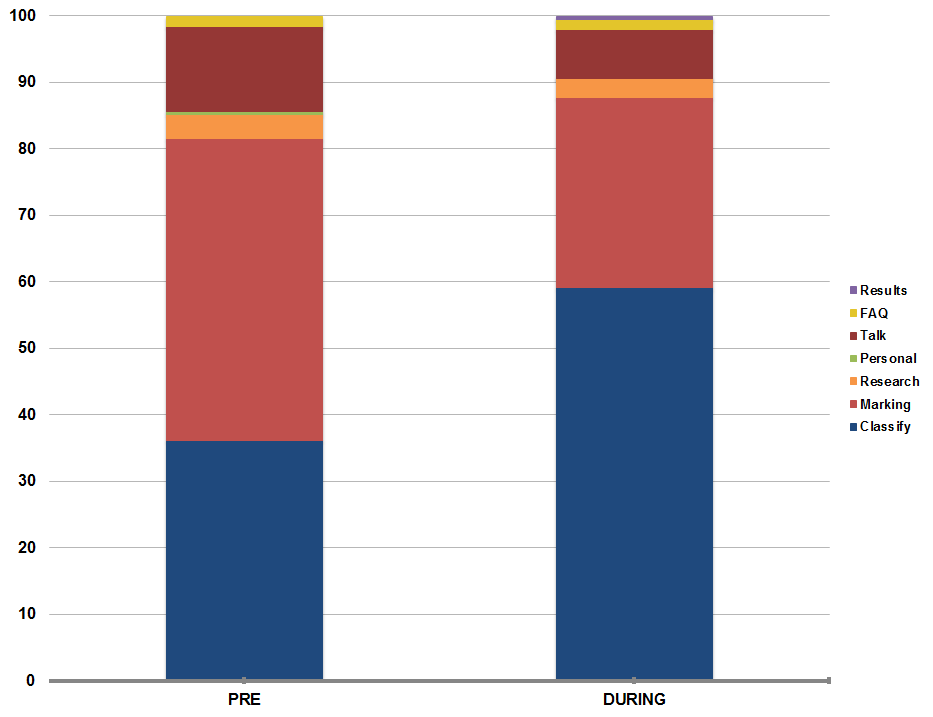

We also found shifts in the percentage of visits to target and non-target Season Spotter pages within the Zooniverse (Figure 8 ). A lower percentage of visitors went to the page to classify images before the campaign (36%) than during the campaign (59%). Conversely, the percentage of total visits to the image marking pages decreased from 45% before the campaign to 29% during it. We also saw increases in the absolute number of views to pages describing the research project (research; 143 to 604), the project discussion forum (talk; 511 to 1554), frequently asked questions (FAQ; 62 to 313), and project result pages (results; 0 to 115; Figure 8 ).

3.5 Retention

Analysis of short-term retention examined increases in time spent doing target and non-target activities. Results showed no significant increases in the average time (in seconds) spent classifying images ( ; ; , ) or marking images ( ; ; , ).

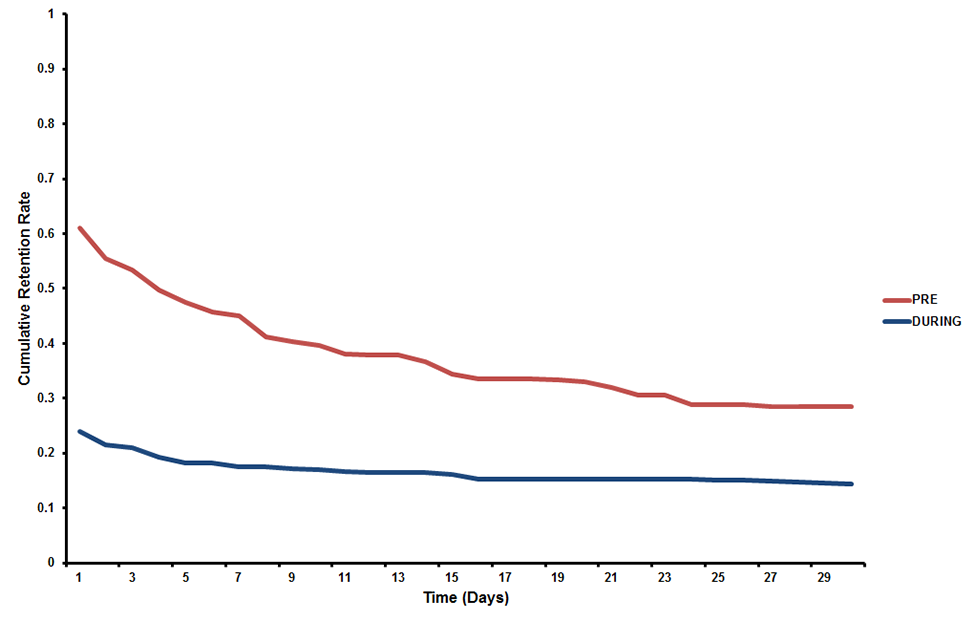

Analysis of long-term retention examined whether users returned to the project following their first classification session. Since the project started but prior to the campaign, 7,089 participants had classified images, and 81% of those were not retained after their first classification session. During the campaign, 5,083 new participants did their first classification, and 94% of those were not retained after their first classification session. The log rank test found that the retention distributions for the two on-boarding periods examined were significantly different ( ; ). Participants that had classified images before the campaign had a median interval between first and last classification of four days compared to an interval of less than one day for participants during the campaign (Figure 9 ). So although patterns of short-term retention did not change during the campaign, patterns of long-term retention did with a greater proportion of participants only contributing to the project for one classification session.

Our data also showed that 45% of registered volunteers that had not classified an image for at least 30 days prior to the campaign (N=1,848) re-engaged with the project during the campaign period. Although we do not have direct cause and effect data, the timing suggests that these individuals re-engaged with the project as a result of the campaign.

4 Discussion

The rapid growth in citizen science may result in greater competition among projects to recruit volunteers and retain their participation [Sauermann and Franzoni, 2015 ]. This is especially true for crowdsourced projects with high attrition rates [Nov, Arazy and Anderson, 2011 ]. Our marketing campaign for the online citizen science project Season Spotter was successful at meeting the target of classifying more than 9,000 pairs of images in less than a month. We also brought increased awareness to the project, re-engaged existing volunteers, and gained new participants that have been retained since the campaign ended. Lessons learned from this campaign will not only support the future success of Season Spotter, but also inform the success of other online citizen science projects.

4.1 Lesson 1: invest in growing your audience

Many citizen science projects have limited staff available to support outreach efforts or to maintain a constant social media presence [Chu, Leonard and Stevenson, 2012 ; Sauermann and Franzoni, 2015 ]. However, the data from our campaign demonstrate that fairly minimal effort can result in dramatic increases in participation, provided an appropriate audience is reached (Table 2 ). We went from an average of 2,000 classifications completed per month to more than 55,000 classifications during the month of our campaign. These findings are consistent with those from the Nature’s Notebook study in which the project launched a successful year-long campaign utilizing just a week of combined staff time [Crimmins et al., 2014 ]. Considering this return on investment, projects should take time early on to become familiar with using social media platforms and developing outreach strategies for growing new and re-engaging existing audiences [Robson et al., 2013 ].

The types of strategies employed should be diverse and should consider the audience to be reached as well as existing project needs. Demographic data, for instance, may show that Instagram is a more popular form of social media than Facebook among your volunteers. In our campaign, we saw more engagement via Twitter than via Facebook, which suggests our social media activities should focus on Twitter.

For projects seeking to grow their audience, surveys generally indicate that volunteers learn about opportunities to participate through numerous channels [Chu, Leonard and Stevenson, 2012; Robson et al., 2013]. Email has shown the greatest promise for reaching existing audiences [Crimmins et al., 2014], but project managers should also consider what needs to be achieved as part of the outreach effort. For example, the Creek Watch study found that social media was better at supporting recruitment efforts, while engaging targeted community groups increased data collection rates [Robson et al., 2013]. Projects should also consider whether participation needs to occur in short bursts or be sustained over long periods, which also helps determine appropriate recruitment and communication strategies.

Programs should seek ways to be creative with their outreach strategies and tap into volunteer motivations. For example, the Reddit AMA was viewed by a large number of people with an interest in science and likely reached individuals who may have interest but do not currently contribute to citizen science projects. In addition, although not officially part of our research study, one of NEON’s researchers posted a request to participate in the campaign on her personal Facebook page. This resulted in 40 hits to SeasonSpotter.org (a 10% conversion rate). Other researchers have recommended requesting contributions in the same manner as walk-a-thons or bike-a-thons, where participants request involvement from close friends and gain recognition for bringing in contributions [Chu, Leonard and Stevenson, 2012]. Future research might examine the success of additional marketing platforms beyond those tested here and consider the role of personal social connections in distributing participation requests.

The importance of knowing and growing your audience is exemplified by the low conversion rates across marketing strategies [Chaffey, 2016]. Considering this, it is important to increase the audience pool so that a low conversion rate still results in high numbers of participants. For example, the Zooniverse has a large pool of registered users that we reached out to in our campaign. Even though the email sent out to these users had a conversion rate of only 4%, approximately 6,000 of the individuals reached contributed to the campaign. This email resulted in the completion of our campaign goal the day after it went out, with approximately 30,000 classifications made in less than 24 hours.
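The arithmetic behind this argument can be sketched in a few lines of Python. The 4% conversion rate is the figure reported for the Zooniverse email; the audience sizes, the target of 500 participants, and the function names are hypothetical and chosen only for illustration.

```python
import math


def expected_participants(audience_size: int, conversion_rate: float) -> float:
    """Expected number of people who act, given how many were reached."""
    return audience_size * conversion_rate


def audience_needed(target_participants: int, conversion_rate: float) -> int:
    """Smallest audience expected to yield the target at a given rate."""
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be in (0, 1]")
    # Round first so floating-point noise cannot push the result up by one.
    return math.ceil(round(target_participants / conversion_rate, 6))


if __name__ == "__main__":
    rate = 0.04  # conversion rate reported for the Zooniverse email
    for audience in (1_000, 10_000, 100_000):  # hypothetical audience sizes
        people = round(expected_participants(audience, rate))
        print(f"audience {audience:>7}: about {people} participants")
    # Hypothetical goal: how many people must be reached to gain 500 participants?
    print("audience needed for 500 participants:", audience_needed(500, rate))
```

At a fixed conversion rate, the expected number of participants scales linearly with the audience reached, which is why a small project may gain more from enlarging its audience than from fine-tuning a single message.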

Many projects will not have access to a ready group of volunteers like the Zooniverse. Having access to this community may limit how broadly our findings apply to citizen science projects that are not similarly structured. However, our data show that 71% of our campaign participants were not previous Zooniverse users, which provides some indication that campaigns would be effective for other online, crowdsourced projects that are not part of a similar platform. It is also important to note that, while successful in our campaign, engaging an existing community that spans dozens of projects has the potential to result in stronger competition among projects or in volunteer burnout if too many requests are made of the volunteer community. More research is also needed on projects that require more of a volunteer commitment and require being physically present to volunteer. All new projects, whether online or in person, may find resources like SciStarter an effective means to tap into existing audiences with interest in all types of citizen science projects.

Our campaign resulted in low retention rates, with a majority of our volunteers participating in only one classification session and not returning. Sauermann and Franzoni [2015] found similar user patterns in their review of other Zooniverse projects, with 10% of participants contributing, on average, 79% of the classifications. Given this high turnover, high recruitment rates are necessary to replace the users who continuously leave the project. For the small percentage who do remain, providing online support through on-going communications and periodic calls to action will be vital to re-engagement and long-term retention. Continuous feedback on project successes and acknowledging volunteer contributions play a vital role in retention as well [Bonney et al., 2009a; Crimmins et al., 2014]. Highly engaged volunteers can also play a key role in volunteer recruitment, which needs further study.

4.2 Lesson 2: continuously engage with your existing audience

Recruitment efforts may use extensive resources for a project [Cooper et al., 2007], so special effort needs to be made to retain those volunteers already making contributions. This needs to be considered from the outset of the project. Successful strategies rely on keeping past and present participants well-informed and creating a shared sense of community [Chu, Leonard and Stevenson, 2012]. Projects can do this continuously through blogs, newsletters, and online discussion forums, but targeted campaigns like ours might be necessary to re-engage members in making contributions [Dickinson et al., 2012].

During our campaign, we successfully increased traffic to SeasonSpotter.org, which drove increased engagement in the project. However, our data demonstrated a significant drop in visits to the website following the campaign (Figure 5 ). Other studies have shown a decline in the frequency of participation even for highly active users with spikes in contributions occurring following media appearances by project researchers, press releases, and targeted social media campaigns [Stafford et al., 2010 ; Morais, Raddick and Santos, 2013 ; Crimmins et al., 2014 ; Sauermann and Franzoni, 2015 ]. Therefore, project leaders must continue to engage with their audience to retain their participation while being cautious not to overburden participants [Crimmins et al., 2014 ].

For the Zooniverse, peaks in engagement result when an email goes out to the community announcing the launch of a new project or requesting participation to meet a specific project need, as was the case for our campaign (Figure 6 ; Sauermann and Franzoni, 2015 ). This serves as a prime example of collective action theory, where a large online community is driven to action by a request to reach a target goal [Triezenberg et al., 2012 ]. Developing a community of participants such as that found within the Zooniverse takes time to establish and project leaders will need to be patient and work strategically to grow such a community. Future research should examine how a marketing campaign influences project recruitment for new projects with no audience and existing projects without access to an established community of volunteers.

4.3 Lesson 3: collaborate when conducting outreach initiatives

Projects should seek to form partnerships with organizations that share common goals to support their outreach efforts [Cooper et al., 2007; Purcell, Garibay and Dickinson, 2012]. Season Spotter launched in July of 2015, making it only nine months old when we launched our campaign. Therefore, the potential audience reach of Season Spotter by itself was low (Figure 4). On its own, Season Spotter accounted for only 2% of the number of contacts made through the campaign. By working with our partners and affiliate organizations, we increased the reach of our campaign by almost 45 times.

As mentioned previously, we had access to the Zooniverse’s expansive community of 1.4 million registered users for our campaign. However, new projects or projects without access to this number of users may need to tap into other existing citizen science networks to support volunteer recruitment. Through its extensive marketing efforts and audience reach (Figure 4), SciStarter also drove significant recruitment to SeasonSpotter.org. It therefore provides a valuable tool for projects seeking to connect with similar projects or reach out to a growing community of motivated volunteers. Although not utilized for this study, the Citizen Science Associations in the United States, Europe, and Australia have growing memberships of researchers and practitioners in the field of citizen science. Email listservs and discussion forums provided by these communities offer additional opportunities to build on the existing networks of established projects. After a new audience begins to grow, projects should invest time in understanding what motivates its members to participate and to continue participating, which can inform future recruitment efforts.

4.4 Lesson 4: follow best practices for project design

For many years, marketing research has examined strategies for successful recruitment and retention of consumers such as designing social media messages and websites to improve conversion rates. Our study applied some of these strategies to the design and analysis of our campaign, but there is likely much more to be learned by applying marketing strategies to citizen science recruitment and retention efforts. Uchoa, Esteves and de Souza [ 2013 ] used principles from marketing research to propose a framework supporting development of marketing strategies and online collaboration tools for crowdsourced citizen science projects. The authors provide ways to support development of these systems by targeting four dimensions: the crowd, collaboration, communication, and crowdware [Uchoa, Esteves and de Souza, 2013 ]. These dimensions integrate well into the lessons discussed previously and provide further insight into best practices for project design. Design across all these dimensions needs to be an iterative process continuously adjusting to the needs of the community [Newman et al., 2011 ; Uchoa, Esteves and de Souza, 2013 ].

The crowd dimension requires project developers to understand participant motivations, consider the ideal audience needed to target those motivations for performing the necessary project tasks, and then identify target groups with members having those characteristics [Uchoa, Esteves and de Souza, 2013]. Within this dimension, best practices include supporting the development of a strategic plan for audience growth, while considering time and staff limitations; identifying viable partners for network growth; and promoting volunteer satisfaction and retention by responding to their motivations [Stukas et al., 2009]. Our study was limited to data on the motivations of volunteers within the Zooniverse, who may or may not have contributed to our project. When resources are available, projects should seek to define the audience they need to reach and then understand the audience once it exists while continuously assessing their needs and motivations.

The collaboration dimension targets a project’s ability to deliver experiences that attract and retain new recruits [Uchoa, Esteves and de Souza, 2013]. Attention has to be placed on whether the benefit of participation is worth the cost, and projects must consider how they can provide diverse opportunities for participation. Future research that extends beyond what we investigated here should address differences in recruitment and retention between crowdsourced projects that offer the opportunity to volunteer briefly and conveniently and projects that require training, equipment, travel, or other costs associated with participation. Projects should also consider how the repetition of simplified tasks in crowdsourced environments might impact volunteer retention and how a platform like the Zooniverse provides opportunities for volunteers to move between projects over time.

The communication dimension supports the need to define appropriate communication strategies for reaching new audiences and for strengthening existing communities [Uchoa, Esteves and de Souza, 2013 ]. Many projects already communicate with volunteers via blogs, discussion forums, and social networking, but these projects need to periodically check in with the community to ensure these methods of communication continue to meet their needs [Newman et al., 2011 ]. Projects should also consider how communications need to be structured differently to support recruitment versus retention.

The crowdware dimension supports the development of websites and apps with regard to usability, accessibility, and data security [Uchoa, Esteves and de Souza, 2013 ]. Volunteer recruitment, retention, and ultimately project success may hinge on the usability of project interfaces [Newman et al., 2010 ]. The online citizen science project eBird increased its number of annual observations from 200,000 in 2005 to 1.3 million in 2010 by incorporating features requested by the user community [Chu, Leonard and Stevenson, 2012 ].

Our findings further support the importance of considering all four dimensions during project design. As shown in Figure 1, someone made aware of the Season Spotter campaign had to go through a series of steps before reaching the target classification task. Our conversion rates showed a drop-off in recruitment at each step, with fewer than 50% of visitors to SeasonSpotter.org clicking on one of the call-to-action buttons. Only 13% of visitors to Season Spotter’s project page on SciStarter clicked through to SeasonSpotter.org. Therefore, changes in the crowd dimension (our marketing strategy) or the crowdware dimension (website design) might be implemented to increase conversion rates. For example, links provided in our campaign could have led directly to the Zooniverse classification pages instead of sending users to SeasonSpotter.org first. These findings have strong implications for the design of citizen science projects, project aggregators, and marketing campaigns.
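Because each step in such a pathway passes along only a fraction of the people who reached the previous step, the overall conversion rate is the product of the step rates. The Python sketch below illustrates this with the two figures reported above: the 13% click-through from SciStarter and, as an upper bound, 50% of site visitors clicking a call-to-action button. Chaining these two steps into one pathway, the starting audience of 1,000, and the function name are assumptions made for illustration, not results from the campaign.

```python
from math import prod


def funnel(start: int, step_rates: list[float]) -> list[float]:
    """Return the expected number of people remaining after each step."""
    remaining = [float(start)]
    for rate in step_rates:
        remaining.append(remaining[-1] * rate)
    return remaining


if __name__ == "__main__":
    # Step rates taken from the text; treating them as consecutive steps
    # of a single pathway is an assumption.
    rates = [0.13, 0.50]
    labels = ["viewed SciStarter page", "reached SeasonSpotter.org", "clicked call to action"]
    for label, count in zip(labels, funnel(1_000, rates)):  # hypothetical audience
        print(f"{label}: about {count:.0f}")
    print(f"overall conversion rate: {prod(rates):.1%}")
```

Removing a step from the pathway, for example by linking directly to the classification pages, removes one factor from the product, which is one way to read the design suggestion made above.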

4.5 Lesson 5: increasing participation results in indirect impacts

Although our campaign specifically targeted participation in our challenge, the campaign also resulted in several indirect impacts worth noting. The campaign, which drove increased engagement in the classification of images, also drove increased engagement in image marking, a non-target task of the campaign. This finding indicates that targeted campaigns that drive recruitment to a website for a specific purpose may result in site visitors supporting additional goals of the project as well.

The Zooniverse added 102 registered users through the Season Spotter project during our month-long campaign. Prior to this, the Zooniverse averaged 48 new accounts created through Season Spotter each month, excluding the first three days of the project, which brought in 71 new registered users. A larger pool of registered users in the Zooniverse means more potential recruits for other projects that are part of the platform as well.

Through our study, we also found increased traffic to pages (FAQ, Research, Results, Talk) that support participant learning and networking with the community. Generally, crowdsourcing projects tend to favor goals of data production over participant learning [Masters et al., 2016], but recent studies have shown that learning does occur through participation in these projects [Prather et al., 2013; Sauermann and Franzoni, 2015; Masters et al., 2016]. Learning occurs even if the motivation to learn is low among participants [Raddick et al., 2010] and if projects were not designed with learning as a primary goal [Masters et al., 2016]. Learning can be supported through interactions between volunteers and scientists in online discussion forums and other communication channels [Cox et al., 2015a]. More research is needed on how access to learning features within a crowdsourced environment can contribute to gains in knowledge and skills of participants.

5 Conclusions

Citizen science will continue to serve as a valuable tool for conducting scientific research at scales not possible using traditional approaches. Research in the field is just beginning to unlock the diverse motivations that drive participation in these projects and to recognize that volunteer recruitment and retention are crucial to project success. We provided insights into how a targeted marketing campaign implemented across a group of partnering organizations supported the recruitment and retention of volunteers in an online, crowdsourced citizen science project. Our findings corroborated other studies showing increased recruitment of new volunteers and re-engagement of existing volunteers using diverse outreach efforts. However, the ability to sustain project engagement relies on projects maintaining continuous communications with their audiences. Projects should seek to develop long-term outreach strategies prior to their launch while iteratively working to make project design meet the needs of participants.

Acknowledgments

The work for this project was funded by NSF Macrosystems Biology grant EF-1065029. We would like to thank the Zooniverse community for its support of this research and all the volunteers that contributed to Season Spotter (a full list of volunteer names is available online at http://SeasonSpotter.org/contributors.html). Dennis Ward (NEON), Leslie Goldman (NEON), Rob Goulding (NEON), Kat Bevington (NEON), Eva Lewandowski (SciStarter), Jill Nugent (SciStarter), and Daniel Arbuckle (SciStarter) helped promote the campaign through affiliate marketing channels. Dennis Ward and Koen Hufkens (Harvard University) provided web design support for SeasonSpotter.org. Colin Williams (NEON) designed the graphic art used in our campaign and assisted with figure formatting.

References

Bonney, R., Cooper, C. B., Dickinson, J., Kelling, S., Phillips, T., Rosenberg, K. V. and Shirk, J. (2009a). ‘Citizen Science: a Developing Tool for Expanding Science Knowledge and Scientific Literacy’. BioScience 59 (11), pp. 977–984. https://doi.org/10.1525/bio.2009.59.11.9 .

Bonney, R., Ballard, H., Jordan, R., McCallie, E., Phillips, T., Shirk, J. and Wilderman, C. C. (2009b). Public Participation in Scientific Research: Defining the Field and Assessing Its Potential for Informal Science Education . A CAISE Inquiry Group Report. Washington, DC, U.S.A.: Center for Advancement of Informal Science Education (CAISE). URL: http://www.informalscience.org/public-participation-scientific-research-defining-field-and-assessing-its-potential-informal-science .

Bradford, B. M. and Israel, G. D. (2004). Evaluating Volunteer Motivation for Sea Turtle Conservation in Florida. Gainesville, FL, U.S.A.: University of Florida, Agriculture Education, Communication Department, Institute of Agriculture and Food Sciences, AEC, 372.

Brown, T. B., Hultine, K. R., Steltzer, H., Denny, E. G., Denslow, M. W., Granados, J., Henderson, S., Moore, D., Nagai, S., SanClements, M., Sánchez-Azofeifa, A., Sonnentag, O., Tazik, D. and Richardson, A. D. (2016). ‘Using phenocams to monitor our changing Earth: toward a global phenocam network’. Frontiers in Ecology and the Environment 14 (2), pp. 84–93. https://doi.org/10.1002/fee.1222 .

Chaffey, D. (2016). Ecommerce conversion rates . URL: http://www.smartinsights.com/ecommerce/ecommerce-analytics/ecommerce-conversion-rates/ .

Chu, M., Leonard, P. and Stevenson, F. (2012). ‘Growing the Base for Citizen Science: Recruiting and Engaging Participants’. In: Citizen Science: Public Participation in Environmental Research. Ed. by J. L. Dickinson and R. Bonney. Ithaca, NY, U.S.A.: Cornell University Press, pp. 69–81. URL: http://www.cornellpress.cornell.edu/book/?GCOI=80140100107290 .

Cooper, C. B., Dickinson, J., Phillips, T. and Bonney, R. (2007). ‘Citizen science as a tool for conservation in residential ecosystems’. Ecology and Society 12 (2), p. 11. URL: http://www.ecologyandsociety.org/vol12/iss2/art11/ .

Cox, J., Oh, E. Y., Simmons, B., Lintott, C. L., Masters, K. L., Greenhill, A., Graham, G. and Holmes, K. (2015a). ‘Defining and Measuring Success in Online Citizen Science: A Case Study of Zooniverse Projects’. Computing in Science & Engineering 17 (4), pp. 28–41. https://doi.org/10.1109/MCSE.2015.65 .

Cox, J., Oh, E. Y., Simmons, B., Graham, G., Greenhill, A., Lintott, C., Masters, K. and Woodcock, J. (2015b). Doing Good Online: An Investigation into the Characteristics and Motivations of Digital Volunteers . SSRN. URL: http://ssrn.com/abstract=2717402 .

Crall, A. W., Jordan, R., Holfelder, K., Newman, G. J., Graham, J. and Waller, D. M. (2013). ‘The impacts of an invasive species citizen science training program on participant attitudes, behavior, and science literacy’. Public Understanding of Science 22 (6), pp. 745–764. https://doi.org/10.1177/0963662511434894 . PMID: 23825234 .

Crimmins, T. M., Weltzin, J. F., Rosemartin, A. H., Surina, E. M., Marsh, L. and Denny, E. G. (2014). ‘Focused Campaign Increases Activity Among Participants in Nature’s Notebook, a Citizen Science Project’. Natural Sciences Education 43 (1), pp. 64–72.

Curtis, V. (2015). ‘Motivation to Participate in an Online Citizen Science Game: a Study of Foldit’. Science Communication 37 (6), pp. 723–746. https://doi.org/10.1177/1075547015609322 .

Dickinson, J. L. and Bonney, R., eds. (2012). Citizen Science: Public Participation in Environmental Research. Ithaca, NY, U.S.A.: Cornell University Press. URL: http://www.cornellpress.cornell.edu/book/?GCOI=80140100107290 .

Dickinson, J. L., Shirk, J., Bonter, D., Bonney, R., Crain, R. L., Martin, J., Phillips, T. and Purcell, K. (2012). ‘The current state of citizen science as a tool for ecological research and public engagement’. Frontiers in Ecology and the Environment 10 (6), pp. 291–297. https://doi.org/10.1890/110236 .

Franzoni, C. and Sauermann, H. (2014). ‘Crowd science: The organization of scientific research in open collaborative projects’. Research Policy 43 (1), pp. 1–20. https://doi.org/10.1016/j.respol.2013.07.005 .

Jochum, V. and Paylor, J. (2013). New ways of giving time: Opportunities and challenges in micro-Volunteering: A literature review. London, U.K.: NCVO.

Kosmala, M., Hufkens, K. and Gray, J. (2016). ‘Reddit AMA’. The Winnower . https://doi.org/10.15200/winn.145942.24206 .

Kosmala, M., Crall, A., Cheng, R., Hufkens, K., Henderson, S. and Richardson, A. (2016). ‘Season Spotter: Using Citizen Science to Validate and Scale Plant Phenology from Near-Surface Remote Sensing’. Remote Sensing 8 (9), p. 726. https://doi.org/10.3390/rs8090726 .

Kullenberg, C. and Kasperowski, D. (2016). ‘What is Citizen Science? A Scientometric Meta-Analysis’. Plos One 11 (1), e0147152. https://doi.org/10.1371/journal.pone.0147152 .

Land-Zandstra, A. M., Devilee, J. L. A., Snik, F., Buurmeijer, F. and van den Broek, J. M. (2015). ‘Citizen science on a smartphone: Participants’ motivations and learning’. Public Understanding of Science 25 (1), pp. 45–60. https://doi.org/10.1177/0963662515602406 .

Landing Page Conversion Course (2013). Part 3: Call-to-Action Placement and Design . URL: http://thelandingpagecourse.com/call-to-action-design-cta-buttons/ .

Lee, K. (2015). How to Create a Social Media Marketing Strategy from Scratch . URL: https://blog.bufferapp.com/social-media-marketing-plan .

Masters, K., Oh, E. Y., Cox, J., Simmons, B., Lintott, C., Graham, G., Greenhill, A. and Holmes, K. (2016). ‘Science learning via participation in online citizen science’. JCOM 15 (3), A07. URL: https://jcom.sissa.it/archive/15/03/JCOM_1503_2016_A07 .

Morais, A. M. M., Raddick, J. and Santos, R. D. C. (2013). ‘Visualization and characterization of users in a citizen science project’. In: Proc. SPIE 8758, Next-Generation Analyst , p. 87580L. https://doi.org/10.1117/12.2015888 .

Moz (2016a). Chapter 6: Facebook . URL: https://moz.com/beginners-guide-to-social-media/facebook .

Moz (2016b). Chapter 7: Twitter . URL: https://moz.com/beginners-guide-to-social-media/twitter .

Newman, G., Zimmerman, D., Crall, A., Laituri, M., Graham, J. and Stapel, L. (2010). ‘User-friendly web mapping: lessons from a citizen science website’. International Journal of Geographical Information Science 24 (12), pp. 1851–1869. https://doi.org/10.1080/13658816.2010.490532 .

Newman, G., Graham, J., Crall, A. and Laituri, M. (2011). ‘The art and science of multi-scale citizen science support’. Ecological Informatics 6 (3), pp. 217–227. https://doi.org/10.1016/j.ecoinf.2011.03.002 .

Nov, O., Arazy, O. and Anderson, D. (2011). ‘Technology-mediated citizen science participation: a motivational model’. In: Proceedings of the Fifth international AAAI Conference on Weblogs and Social Media . (Barcelona, Spain), pp. 249–256.

Owens, S. (7th October 2014). ‘The World’s Largest 2-Way Dialogue between Scientists and the Public’. Scientific American . URL: https://www.scientificamerican.com/article/the-world-s-largest-2-way-dialogue-between-scientists-and-the-public/ .

Prather, E. E., Cormier, S., Wallace, C. S., Lintott, C., Raddick, M. J. and Smith, A. (2013). ‘Measuring the Conceptual Understandings of Citizen Scientists Participating in Zooniverse Projects: A First Approach’. Astronomy Education Review 12 (1), 010109, pp. 1–14. https://doi.org/10.3847/AER2013002 .

Purcell, K., Garibay, C. and Dickinson, J. L. (2012). ‘A gateway to science for all: celebrate urban birds’. In: Citizen Science: Public Participation in Environmental Research. Ed. by J. L. Dickinson and R. Bonney. Ithaca, NY, U.S.A.: Cornell University Press. URL: http://www.cornellpress.cornell.edu/book/?GCOI=80140100107290 .

Raddick, M. J., Bracey, G., Gay, P. L., Lintott, C. J., Murray, P., Schawinski, K., Szalay, A. S. and Vandenberg, J. (2010). ‘Galaxy Zoo: Exploring the Motivations of Citizen Science Volunteers’. Astronomy Education Review 9 (1), 010103, pp. 1–18. https://doi.org/10.3847/AER2009036 . arXiv: 0909.2925 .

Raddick, M. J., Bracey, G., Gay, P. L., Lintott, C. J., Cardamone, C., Murray, P., Schawinski, K., Szalay, A. S. and Vandenberg, J. (2013). ‘Galaxy Zoo: Motivations of Citizen Scientists’. Astronomy Education Review 12 (1), pp. 010106–010101. https://doi.org/10.3847/AER2011021 . arXiv: 1303.6886 .

Reddit Inc. (2016). About Reddit . URL: https://about.reddit.com/ .

Richardson, A. D., Braswell, B. H., Hollinger, D. Y., Jenkins, J. P. and Ollinger, S. V. (2009). ‘Near-surface remote sensing of spatial and temporal variation in canopy phenology’. Ecological Applications 19 (6), pp. 1417–1428. https://doi.org/10.1890/08-2022.1 .

Robson, C., Hearst, M. A., Kau, C. and Pierce, J. (2013). ‘Comparing the use of Social Networking and Traditional Media Channels for Promoting Citizen Science’. Paper presented at the CSCW 2013. San Antonio, TX, U.S.A.

Sauermann, H. and Franzoni, C. (2015). ‘Crowd science user contribution patterns and their implications’. Proceedings of the National Academy of Sciences 112 (3), pp. 679–684. https://doi.org/10.1073/pnas.1408907112 .

Sonnentag, O., Hufkens, K., Teshera-Sterne, C., Young, A. M., Friedl, M., Braswell, B. H., Milliman, T., O’Keefe, J. and Richardson, A. D. (2012). ‘Digital repeat photography for phenological research in forest ecosystems’. Agricultural and Forest Meteorology 152, pp. 159–177. https://doi.org/10.1016/j.agrformet.2011.09.009 .

Stafford, R., Hart, A. G., Collins, L., Kirkhope, C. L., Williams, R. L., Rees, S. G., Lloyd, J. R. and Goodenough, A. E. (2010). ‘Eu-Social Science: The Role of Internet Social Networks in the Collection of Bee Biodiversity Data’. PLoS ONE 5 (12), e14381. https://doi.org/10.1371/journal.pone.0014381 .

Stukas, A. A., Worth, K. A., Clary, E. G. and Snyder, M. (2009). ‘The Matching of Motivations to Affordances in the Volunteer Environment: An Index for Assessing the Impact of Multiple Matches on Volunteer Outcomes’. Nonprofit and Voluntary Sector Quarterly 38 (1), pp. 5–28. https://doi.org/10.1177/0899764008314810 .

Triezenberg, H. A., Knuth, B. A., Yuan, Y. C. and Dickinson, J. L. (2012). ‘Internet-Based Social Networking and Collective Action Models of Citizen Science: Theory Meets Possibility’. In: Citizen Science: Public Participation in Environmental Research. Ed. by J. L. Dickinson and R. Bonney. Ithaca, NY, U.S.A.: Cornell University Press, pp. 214–225. URL: http://www.cornellpress.cornell.edu/book/?GCOI=80140100107290 .

Twitter Inc. (2016). Create your Twitter content strategy . URL: https://business.twitter.com/en/basics/what-to-tweet.html .

Uchoa, A. P., Esteves, M. G. P. and de Souza, J. M. (2013). ‘Mix4Crowds — Toward a framework to design crowd collaboration with science’. In: Proceedings of the 2013 IEEE 17th International Conference on Computer Supported Cooperative Work in Design (CSCWD) , pp. 61–66. https://doi.org/10.1109/CSCWD.2013.6580940 .

Wenger, E., McDermott, R. and Snyder, W. M. (2002). Cultivating Communities of Practice. Boston, MA, U.S.A.: Harvard Business Review Press.

Wiggins, A. and Crowston, K. (2010). ‘Developing a Conceptual Model of Virtual Organizations for Citizen Science’. International Journal of Organizational Design and Engineering 1 (1), pp. 148–162. https://doi.org/10.1504/IJODE.2010.035191 .

Wright, D. R., Underhill, L. G., Keene, M. and Knight, A. T. (2015). ‘Understanding the Motivations and Satisfactions of Volunteers to Improve the Effectiveness of Citizen Science Programs’. Society & Natural Resources 28 (9), pp. 1013–1029. https://doi.org/10.1080/08941920.2015.1054976 .

Authors

Alycia Crall serves as a science educator and evaluator for the National Ecological Observatory Network. She received her bachelor’s degrees in Environmental Health Science and Ecology at the University of Georgia, her master’s degree in Ecology at Colorado State University, and her Ph.D. in Environmental Studies at the University of Wisconsin-Madison. Her research interests include citizen science, socio-ecological systems, informal science education, and program evaluation. E-mail: acrall@battelleecology.org .

Margaret Kosmala is a global change ecologist with a focus on the temporal dynamics of populations, communities, and ecosystems at local to continental scales. She creates and uses long-term and broad-scale datasets to answer questions about how human-driven environmental change affects plants and animals. In order to generate and process these large data sets, she creates and runs online citizen science projects, practices crowdsourcing, runs experiments, employs data management techniques, and designs and implements advanced statistical and computational models. E-mail: kosmala@fas.harvard.edu .

Rebecca Cheng, Ph.D. is a learning scientist and cognitive psychologist in human-computer interaction. She received her doctorate from George Mason University, Fairfax, VA. In her research, she applied a cognitive-affective integrated framework, with eye tracking and scanpath analysis, to understand the gameplay process and science learning in Serious Educational Games. E-mail: rcheng.neoninc@gmail.com .

Jonathan Brier is a Ph.D. student at the College of Information Studies at University of Maryland, advised by Andrea Wiggins. His research covers evaluation, development, and evolution of cyberinfrastructure and online communities. Currently, he is focused on the evaluation of the sustainability of online communities in the domain of citizen science. He advises the SciStarter citizen science community, holds a MS in Information from the University of Michigan, and a BS in Media and Communication Technology from Michigan State University. E-mail: brierjon@umd.edu .

Darlene Cavalier is a Professor at Arizona State University, founder of SciStarter, founder of Science Cheerleader, and cofounder of ECAST: Expert and Citizen Assessment of Science and Technology. She is a founding Board Member of the Citizen Science Association, a senior advisor at Discover Magazine, and a member of the EPA’s National Advisory Council for Environmental Policy and Technology. She provides guidance to the White House’s OSTP and federal agencies and is co-author of The Rightful Place of Science: Citizen Science, published by ASU. E-mail: darcav1@gmail.com .

Dr. Sandra Henderson is the Director of Citizen Science at the National Ecological Observatory Network (NEON) in Boulder, Colorado. Her background is in climate change science education with an emphasis on educator professional development and citizen science outreach programs. Before becoming a science educator, she worked as a project scientist studying the effects of climate change on terrestrial ecosystems. In 2013, she was honored by the White House for her work in citizen science. E-mail: sanhen13@gmail.com .

Andrew Richardson is an Associate Professor of Organismic and Evolutionary Biology at Harvard University. His lab’s research focuses on global change ecology, and he is particularly interested in how different global change factors alter ecosystem processes and biosphere-atmosphere interactions. His research has been funded by the National Science Foundation, NOAA, the Department of Energy, and NASA. Richardson has a Ph.D. from the School of Forestry and Environmental Studies at Yale University, and an AB in Economics from Princeton University. E-mail: arichardson@oeb.harvard.edu .